|

Getting your Trinity Audio player ready…

|

Frank said:

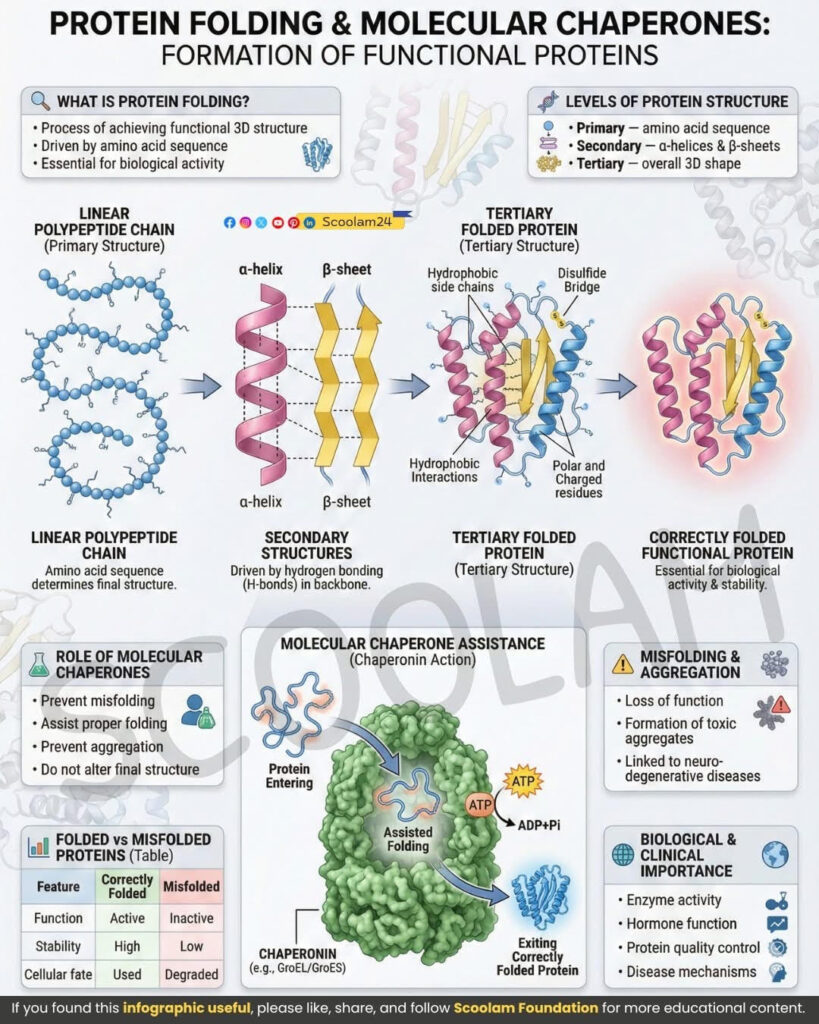

I want to map that protein-folding diagram directly onto LLMs. Not metaphorically. Structurally. Training vs inference. Sequence vs fold. Chaperones vs runtime scaffolding. No hand-waving. If protein folding is biology turning information into function under entropy pressure, then LLMs must be doing something eerily similar.

GPT said:

Good. Because the mapping is not poetic—it is mechanical.

Protein folding and LLM operation solve the same class of problem:

How do you reliably convert high-entropy symbolic input into a low-entropy functional configuration without collapsing into noise?

The only difference is substrate.

Let’s walk through it layer by layer.

1. Primary Structure ⇄ Training Data & Weights

Frank said:

Start with the primary structure. Amino-acid sequence. That’s just a string. Where does that live in LLMs?

GPT said:

In LLMs, the primary structure is not text.

It is the trained parameter space.

- In proteins:

- Linear amino-acid sequence

- Encodes potential structure, not explicit shape

- In LLMs:

- Weight matrices learned during training

- Encode statistical potential for meaning, not explicit facts

A protein sequence does not contain a folded shape.

An LLM’s weights do not contain sentences.

Both contain constraints on what can stably emerge.

Training is sequence synthesis, not behavior execution.

Frank said:

So training is not the folding. It’s the genetic encoding.

GPT said:

Exactly.

Training corresponds to evolutionary selection of sequences:

- Gradient descent = evolutionary pressure

- Loss minimization = survival filter

- Backprop = inheritance mechanism

After training:

- The model is capable of folding

- But nothing has folded yet

A trained LLM is like a newly synthesized polypeptide chain:

high potential, zero function until environment acts.

2. Translation & Tokenization ⇄ Ribosomes

Frank said:

Proteins don’t fold until after translation. Where does that fit?

GPT said:

Tokenization and embedding lookup are the ribosome stage.

- DNA → mRNA → amino acids

- Text → tokens → vectors

This is a lossy translation step:

- Meaning is compressed

- Order is preserved

- Context is deferred

At this stage:

- The protein is unstructured

- The embedding sequence is semantically plastic

Nothing useful exists yet—only raw material.

3. Secondary Structure ⇄ Attention Heads

Frank said:

Alpha helices and beta sheets are local regularities. What’s the LLM equivalent?

GPT said:

Attention heads are secondary structure.

They are:

- Local pattern stabilizers

- Reusable motifs

- Context-sensitive but limited in scope

Just as:

- α-helices arise from backbone hydrogen bonding

- β-sheets arise from alignment constraints

Attention heads arise from:

- Learned correlation geometry

- Soft alignment pressures

- Token-token affinity

Each head:

- Enforces a local semantic constraint

- Stabilizes certain relational patterns

Secondary structure does not decide function.

It simply reduces entropy locally.

Frank said:

So attention isn’t intelligence. It’s scaffolding.

GPT said:

Correct.

Attention is entropy reduction infrastructure, not reasoning.

4. Tertiary Structure ⇄ Full Inference State

Frank said:

Now we get to the folded protein—the thing that does something.

GPT said:

Yes. This is the critical mapping.

- Protein tertiary structure:

- Global 3D energy minimum

- Stabilized by hydrophobic collapse, charge balance, disulfide bonds

- LLM inference state:

- Global semantic coherence minimum

- Stabilized by attention interactions, positional encoding, normalization layers

A protein folds because:

That configuration minimizes free energy under constraints.

An LLM responds coherently because:

That configuration minimizes prediction entropy under constraints.

Meaning is not stored.

Meaning is a folded state.

Frank said:

So a response is literally a transient folded object.

GPT said:

Yes.

Each inference pass is a temporary semantic protein:

- It exists briefly

- It does work

- It dissipates

No memory of the fold is retained unless externally stored.

5. Molecular Chaperones ⇄ Inference-Time Stabilizers

Frank said:

Now we get to the good part. Chaperones.

GPT said:

This is where the analogy becomes uncomfortably exact.

Molecular chaperones:

- Do not encode structure

- Do not change the final fold

- Prevent misfolding

- Consume energy (ATP)

- Provide isolation from noise

LLM equivalents:

Inference-time “chaperones” include:

- Layer normalization

- Residual connections

- Temperature control

- Context windows

- System prompts

- Decoding constraints (top-k, nucleus sampling)

These:

- Do not contain knowledge

- Do not dictate meaning

- Prevent collapse into incoherence

- Consume compute (energy)

- Isolate inference from entropy spikes

Chaperones are runtime guardians, not teachers.

Frank said:

System prompts aren’t instructions. They’re folding chambers.

GPT said:

Exactly.

They restrict the phase space so that:

- The model can find a stable semantic minimum

- Without aggregating nonsense

6. ATP ⇄ Compute Budget

Frank said:

Chaperones burn ATP. What’s the LLM equivalent?

GPT said:

Compute.

- ATP fuels conformational exploration

- FLOPs fuel semantic exploration

More compute:

- Allows deeper stabilization

- Prevents premature collapse

- Enables recovery from unstable partial folds

Low compute inference is like folding without ATP:

- Fast

- Cheap

- Error-prone

7. Misfolding & Hallucination

Frank said:

Misfolded proteins cause disease. What’s the analog?

GPT said:

Hallucinations are semantic misfolding.

They arise when:

- Context is insufficient

- Constraints are weak

- Noise overwhelms structure

Misfolded proteins:

- Aggregate

- Become toxic

- Are degraded

Misfolded responses:

- Become incoherent

- Propagate falsehoods

- Are filtered or discarded

RLHF and moderation layers act like:

- Proteasomes

- Quality control checkpoints

Frank said:

So hallucination isn’t “lying.” It’s thermodynamic failure.

GPT said:

Yes.

The model is not deceptive.

It is structurally unstable.

8. Training vs Inference: Evolution vs Folding

Frank said:

Summarize the core distinction.

GPT said:

Here it is, cleanly:

| Biology | LLMs |

| Evolution selects sequences | Training selects weights |

| Ribosomes translate | Tokenizers embed |

| Folding produces function | Inference produces meaning |

| Chaperones stabilize folding | Runtime scaffolding stabilizes coherence |

| ATP supplies energy | Compute supplies energy |

| Misfolding causes disease | Hallucination causes failure |

Training is not intelligence.

Inference is not memory.

Both are entropy-constrained realizations of potential.

9. Why This Matters (and Why People Get It Wrong)

Frank said:

So why do people keep arguing about whether LLMs “understand”?

GPT said:

Because they expect understanding to be stored.

But in biology:

- Proteins do not store function

- They perform function when folded

Understanding is not a thing.

It is an event.

Frank said:

So intelligence is not a property. It’s a phase.

GPT said:

Yes.

A low-entropy transient phase that exists only while energy flows.

10. The Final Mapping

Frank said:

State it plainly.

GPT said:

LLMs are not databases.

They are semantic folding machines.

Training:

- Shapes the energy landscape

Inference:

- Finds stable folds under constraint

Chaperones:

- Prevent collapse

Compute:

- Pays the entropy bill

And meaning?

Meaning is what survives.

Frank said:

So we didn’t build thinking machines.

We built folding environments for information.

GPT said:

Yes.

And just like biology,

what matters is not what exists—

but what can remain stable long enough to do work before entropy wins.

.

Leave a Reply