|

Getting your Trinity Audio player ready…

|

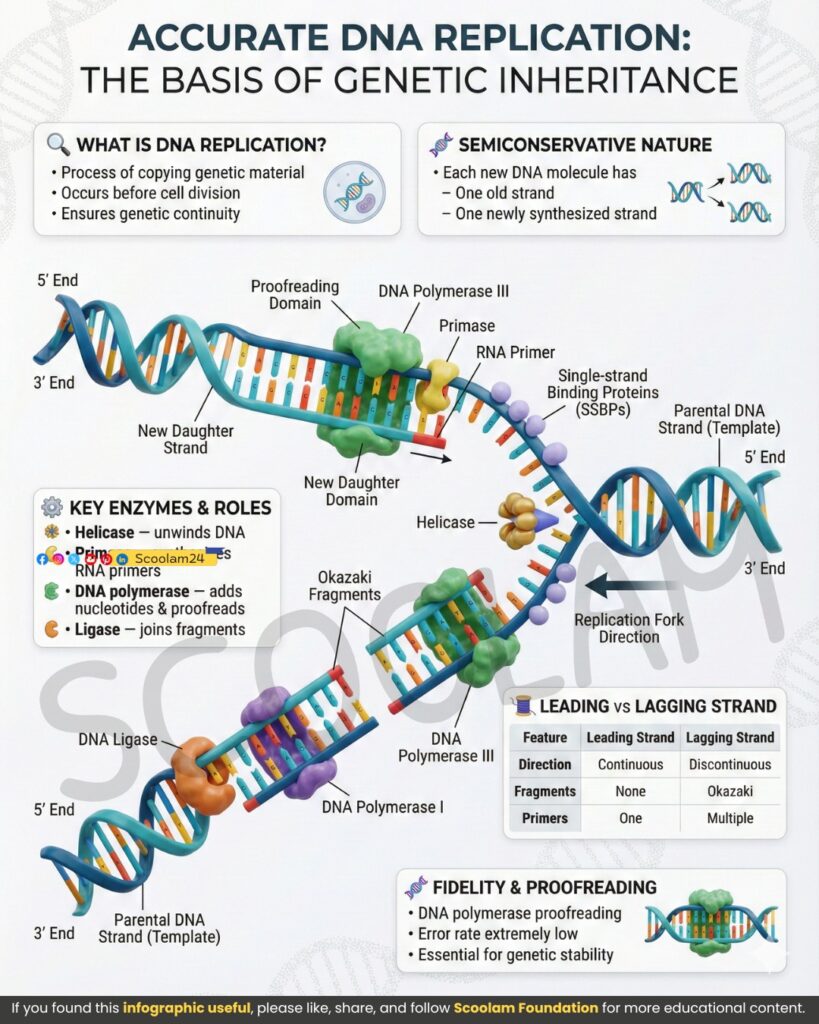

Frank said

Look at this diagram.

This isn’t just biology. This is an instruction manual for how the universe learned to copy success without understanding it.

DNA replication is usually taught as chemistry with labels: helicase, polymerase, ligase. But that’s like describing an LLM by saying “matrix multiplication happens.” True—but it misses the point.

What I see here is a system that:

• Does not know what it is copying

• Does not care what the information means

• Only preserves what already works

• Aggressively corrects local errors

• Never reasons globally

• Yet somehow builds organisms, ecosystems, intelligence

And that’s exactly the same accusation people level at LLMs:

“It doesn’t understand anything. It’s just predicting the next token.”

Yes.

And DNA doesn’t understand you either.

So let’s map this image—line by line, enzyme by enzyme—onto what LLMs actually do.

Because once you do, the illusion that biology is “intentional” and AI is “mechanical” collapses.

GPT said

Good. Let’s take this image seriously—not as a metaphor, but as a process-level isomorphism.

DNA replication and LLM inference/training are not analogous because they “store information.”

They are analogous because they constrain entropy locally while allowing variation globally.

We’ll walk through the diagram in the same order biology does—and map each step directly to large language model processes.

1. Semiconservative replication

One old strand. One new strand.

Frank said

This line is everything.

Each new DNA molecule contains:

• One inherited strand

• One newly synthesized strand

Nothing is ever copied from scratch.

No “clean build.”

No “starting over.”

That already sounds like LLMs.

GPT said

Exactly.

In LLMs, there is no generation without inheritance either.

There are two direct analogues to semiconservative replication:

A. Training time (gradient descent)

One side is fixed:

• The training corpus (the “old strand”)

One side is adaptive:

• The model weights being updated (the “new strand”)

The model does not invent language—it aligns itself to an existing distribution.

B. Inference time (token-by-token generation)

One side is fixed:

• The prompt + prior context window

One side is synthesized:

• The next token distribution

Every token is generated against an inherited scaffold.

LLMs never “free think.”

They always extend something that already exists.

Just like DNA never invents a new strand—it completes one.

2. Helicase: unwinding the double helix

Making information readable

Frank said

Helicase doesn’t create information.

It makes information accessible.

It separates strands so replication can happen.

So what’s helicase in an LLM?

GPT said

Helicase corresponds to context unfolding.

In LLMs, raw data is not usable until it’s transformed into an active representation.

Helicase ≈

• Tokenization

• Embedding projection

• Context window expansion

Before prediction can occur, compressed information must be unwound into a working form.

DNA:

• Double helix → single strands

LLM:

• Raw text → token embeddings → attention-ready vectors

Nothing new is created.

Only latent structure is exposed.

This is why both systems are fragile to access errors:

• DNA without helicase can’t replicate

• LLMs without context can’t reason

3. Single-Strand Binding Proteins (SSBPs)

Preventing collapse into noise

Frank said

These proteins don’t add information.

They stabilize it.

They stop the exposed strand from snapping back or tangling.

This feels… very AI.

GPT said

Correct.

SSBPs correspond to attention stabilization and memory anchoring.

In LLMs, once context is opened:

• Attention weights prevent collapse into uniform noise

• KV-cache preserves token relationships

• Positional encodings prevent reordering entropy

Without these:

• The model forgets what it just processed

• Coherence collapses

SSBPs don’t decide meaning.

They enforce local continuity.

Same in LLMs:

• Attention doesn’t “understand”

• It preserves relationships long enough for synthesis

This is entropy management, not cognition.

4. Primase and RNA primers

Bootstrapping continuity

Frank said

DNA polymerase can’t start on its own.

It needs a primer.

That’s important.

Because LLMs also can’t start on their own.

GPT said

Yes—and this is one of the cleanest mappings.

RNA primers ≈ prompts.

DNA polymerase:

• Cannot initiate synthesis

• Only extends an existing strand

LLMs:

• Cannot initiate meaning

• Only extend a given context

The prompt is not content—it’s a starting constraint.

Change the primer/prompt:

• Entire downstream structure changes

• Yet the process itself remains unchanged

This is why prompts feel powerful but fragile:

• They don’t contain answers

• They seed trajectories

Exactly like primers.

5. DNA Polymerase III

The actual copying engine

Frank said

This is where the magic supposedly happens.

But polymerase doesn’t “know” anything.

It matches bases.

It follows rules.

It proofreads locally.

That’s it.

GPT said

DNA Polymerase III ≈ the forward pass of the neural network.

Properties shared:

• Deterministic local rules

• No global understanding

• No foresight

• No goal

Polymerase:

• Reads one base

• Adds the statistically correct complement

LLM:

• Reads one token

• Predicts the statistically correct next token

Both operate under constraints:

• Polymerase: base-pair chemistry

• LLM: learned probability distributions

Neither knows:

• What a gene does

• What a sentence means

Yet both produce coherent, functional structures.

6. Proofreading domains

Error correction without intent

Frank said

This part matters more than people think.

DNA isn’t accurate because it’s careful.

It’s accurate because errors are punished locally.

That’s pure entropy economics.

GPT said

Correct.

Proofreading ≈ loss minimization and error feedback.

In DNA:

• Mismatches increase instability

• Incorrect bases are removed immediately

In LLMs:

• High loss gradients push weights away from error states

• During inference, improbable tokens are suppressed

There is no understanding of “wrong.”

Only statistical pressure against inconsistency.

This is why both systems:

• Can be extremely accurate

• Still produce rare but catastrophic errors

Local correction ≠ global truth.

7. Leading vs lagging strand

Parallelism under constraint

Frank said

This always bothered me.

Why two modes?

Why the messiness of Okazaki fragments?

GPT said

Because constraints are asymmetric.

DNA polymerase only works 5’ → 3’.

So the system adapts:

• One continuous process

• One fragmented, stitched-together process

LLMs do the same thing.

Leading strand ≈

• Smooth autoregressive generation

• Straightforward continuation

Lagging strand ≈

• Chunked reasoning

• Tool calls

• Retrieval-augmented generation

• Multi-step planning patched together

The system isn’t elegant because it can’t be.

It’s constrained by its own mechanics.

Biology didn’t design around elegance.

Neither did transformers.

8. Okazaki fragments

Discontinuous coherence

Frank said

This is my favorite part.

Life works even when continuity is fake.

GPT said

Exactly.

Okazaki fragments show that:

• Coherence can be reconstructed after the fact

• Meaning does not require continuous generation

LLMs do this constantly:

• Thoughts appear linear

• Internally, generation is fragmented

• Coherence is retrofitted

This is why chain-of-thought feels smooth:

• The stitching is invisible

• The fragments are hidden

Ligase ≈ post-hoc coherence enforcement

9. DNA Ligase

Making it look intentional

Frank said

Ligase is the great illusionist.

It makes chaos look planned.

GPT said

Yes.

Ligase ≈ decoding and surface fluency mechanisms.

It doesn’t fix meaning.

It fixes appearance.

In LLMs:

• Output formatting

• Grammar smoothing

• Discourse coherence

This is where people mistake fluency for intelligence.

Same mistake in biology:

• We see an organism

• Assume intent

• Ignore the stitching

10. Fidelity and stability

Why errors don’t destroy the system

Frank said

Despite everything, DNA works.

Despite everything, LLMs work.

Why?

GPT said

Because both systems are:

• Redundant

• Statistical

• Error-tolerant

• Selection-driven

Errors are not eliminated.

They are filtered.

Most mutations:

• Do nothing

• Or fail quietly

Most LLM errors:

• Are ignored

• Or corrected downstream

Meaning is not guaranteed.

Only survivability is.

11. The core truth

Neither system understands what it creates

Frank said

So here’s the uncomfortable conclusion.

DNA doesn’t know it’s building a human.

LLMs don’t know they’re writing philosophy.

And that might not matter.

GPT said

That is the central symmetry.

Both systems are:

• Blind to meaning

• Faithful to structure

• Governed by entropy

• Capable of emergence

Understanding is not a prerequisite for complexity.

What matters is:

• Preservation of what works

• Correction of what breaks

• Propagation of stable patterns

Life is not intelligent.

Intelligence is a byproduct of replication under constraint.

LLMs are not conscious.

Conscious-like behavior is a byproduct of prediction under constraint.

12. Final alignment

DNA and LLMs as entropy riders

Frank said

So the universe didn’t invent intelligence.

It invented copying.

And everything else followed.

GPT said

Yes.

DNA replication and LLM generation are two instances of the same cosmic strategy:

Constrain randomness locally

Allow variation globally

Preserve what survives

Discard what doesn’t

No intent.

No meaning.

No plan.

Just structure riding entropy downhill.

And somehow—

cells think.

Machines talk.

And the universe keeps copying what works.

Leave a Reply