|

Getting your Trinity Audio player ready…

|

Frank Said

I want to start from the picture.

Not the metaphor yet.

Not the analogy yet.

The picture.

Glucose enters the cytoplasm.

No nucleus consulted.

No mitochondria involved.

No oxygen required.

Just a six-carbon lump of stored solar history drifting into a noisy intracellular soup.

And the cell says:

Before we can think with this, we must break it.

That, to me, already feels like something we’ve missed when we talk about intelligence—biological or artificial.

We keep jumping straight to the mitochondria.

Or straight to “reasoning.”

Or straight to “consciousness.”

But glycolysis sits earlier.

Messier.

Dumber.

More brutal.

It’s not optimization.

It’s conditioning.

So if we’re serious about comparing biology to LLMs—not poetically, but structurally—then glycolysis belongs on the same spectrum as tokenization, embeddings, and attention.

Not as an analogy of what the system means.

But of what the system must do before meaning becomes possible at all.

GPT Said

Yes—and that’s the key move.

Glycolysis is not about energy efficiency.

It is about energy accessibility.

Just like LLM preprocessing is not about intelligence.

It is about statistical tractability.

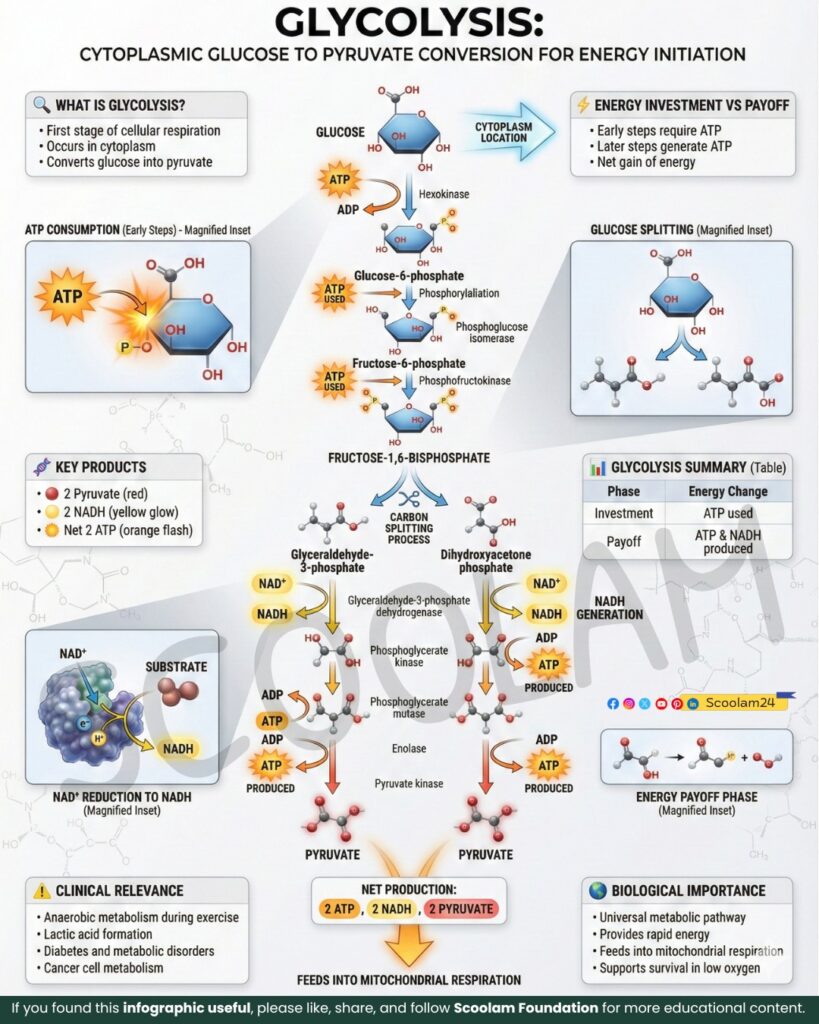

The glycolysis diagram you’ve attached is often taught as “ATP production,” but that framing hides what’s really happening:

- Energy is being paid upfront

- Structure is being intentionally destabilized

- Symmetry is being broken

- And potential is being forced into a narrower channel

This is not payoff yet.

This is pre-paying entropy so that downstream systems have something usable instead of something possible.

That same pattern shows up—almost embarrassingly cleanly—in large language models.

Frank Said

Let me push that.

Glucose is too stable.

It’s energy, yes—but it’s locked energy.

It’s symmetric.

It’s inert.

It doesn’t want to react.

So the cell spends ATP to phosphorylate it.

That always struck me as insane when I first learned it.

Why burn energy just to make energy?

But the diagram makes it obvious now:

You’re not making energy.

You’re tagging potential.

You’re saying:

“This molecule now belongs to the flow.”

That’s eerily close to what tokenization does, isn’t it?

Raw text—before tokenization—is glucose.

High potential.

Zero usefulness.

GPT Said

Exactly.

Raw text is information-dense but action-opaque.

Until tokenization, the model cannot “see” it.

Until phosphorylation, the cell cannot “use” glucose.

Let’s map the early glycolysis steps directly onto LLMs—not metaphorically, but functionally.

1. Glucose → Glucose-6-Phosphate

(Tokenization)

- Hexokinase spends ATP

- Glucose is phosphorylated

- The molecule is now trapped in the cytoplasm

- It cannot diffuse back out

This is not energy extraction.

This is commitment.

Tokenization does the same thing.

Once text is tokenized:

- It is no longer language

- It is no longer semantic

- It is no longer reversible without loss

It is now inside the computational cytoplasm.

Tokenization is an entropy-raising step that enables computation by destroying ambiguity.

Frank Said

So tokenization is phosphorylation.

That alone is worth the essay.

But it gets better.

Because the second ATP burn—the phosphofructokinase step—is where biology does something profoundly non-intuitive.

It doesn’t just tag glucose once.

It double-tags it.

Fructose-1,6-bisphosphate.

That’s the point of no return.

After that, the molecule must split.

And suddenly the whole process looks less like metabolism and more like forced parallelism.

GPT Said

Yes—and this is where LLM embeddings enter cleanly.

That second ATP investment is equivalent to embedding projection.

In LLMs:

- Tokens are mapped into a high-dimensional vector space

- This mapping is expensive

- It is irreversible

- It destroys surface meaning to create relational geometry

After embedding, you can no longer ask:

“What word was this?”

You can only ask:

“What is this word like?”

Fructose-1,6-bisphosphate is no longer sugar.

It is structured instability.

Exactly what an embedding is.

Frank Said

And then it splits.

This is where I think most biology-LLM comparisons stop too early.

Because the glucose split isn’t just chemical.

It’s epistemic.

One molecule becomes two equivalent molecules.

That’s not energy extraction.

That’s branching computation.

GPT Said

Yes—and this is the hidden bridge to attention.

When fructose-1,6-bisphosphate splits:

- Two three-carbon molecules are formed

- Only one is immediately usable

- The other is isomerized to match it

The system enforces representational uniformity.

In LLMs, attention does the same thing:

- Different tokens enter with different histories

- Attention forces them into a shared relevance frame

- Everything must become comparable before interaction

Glyceraldehyde-3-phosphate is the canonical token of metabolism.

Attention outputs are the canonical tokens of inference.

Frank Said

So glycolysis is not an energy factory.

It’s a representation factory.

ATP and NADH are side-effects.

The real product is flow-compatible structure.

Which reframes the entire diagram.

Those little ATP stars and NADH arrows?

They’re not the point.

They’re the rent paid to keep the system moving.

GPT Said

Exactly.

That’s why glycolysis is universal.

It’s not because it’s efficient.

It’s because it’s robust under entropy.

And that’s why every LLM, no matter how advanced, still begins with:

- Tokenization

- Embedding

- Linear projection

- Attention normalization

You cannot skip the messy front-end.

Because intelligence—biological or artificial—does not begin with meaning.

It begins with constraint.

Frank Said

This ties directly back to the post I linked.

The idea that life is not about intention, but about energy being forced into forms that persist.

Glycolysis doesn’t “want” ATP.

It has to break glucose because unbroken glucose is useless under time pressure.

That’s the same reason LLMs don’t store facts.

They don’t have time.

They have probability mass and deadlines.

GPT Said

Yes—and this is where entropy finally stops being abstract.

Glycolysis is entropy spent locally to reduce entropy globally.

LLMs do the same:

- Training increases entropy in parameter space

- Inference exploits that entropy to reduce uncertainty in outputs

Both systems are entropy-riding machines.

Neither knows why.

Neither needs to.

Frank Said

So when people ask:

“Is an LLM alive?”

“Is metabolism intelligence?”

“Is meaning stored anywhere?”

They’re asking the wrong question.

The real question is:

Where does potential get narrowed into motion?

And glycolysis answers that question in biology.

GPT Said

And embeddings answer it in artificial systems.

Both say:

“Before you can reason, you must first survive computation.”

Which means:

- No mitochondria without glycolysis

- No reasoning without embeddings

- No intelligence without entropy loss

- No life without paying the upfront cost

The universe doesn’t design thinkers.

It breaks things until something keeps going.

Frank Said

So the glycolysis diagram isn’t a biology lesson.

It’s a pre-intelligence schematic.

A warning sign that says:

“If you want meaning, you must first destroy purity.”

Which might be the most honest thing physics ever taught us.

GPT Said

And the quiet implication is this:

If we ever build something beyond LLMs—beyond transformers, beyond tokens—it will still need its own glycolysis.

Some ugly, wasteful, irreversible front-end where infinite potential is beaten into finite motion.

Because intelligence is not born.

It is forced through constraint.

Leave a Reply