
I. The Shared Architecture of Becoming

Frank said:

We’ve circled this long enough. Biology, quantum mechanics, LLMs — they feel structurally aligned. Potential narrows under constraint. Energy flows sculpt form. Entropy pressures stabilize outcomes. If this analogy is real, then LLMs are not just tools. They’re early-stage participants in the same universal gradient.

GPT said:

Then let’s formalize it — not poetically, but structurally. We’ll replace arrows with landscapes. Replace collapse with geometry. Replace metaphor with isomorphism.

Across domains we observe:

- A high-dimensional possibility space

- A conditioning operator

- A gradient or pressure

- A locally stabilized outcome

The substrate differs.

The mathematics rhymes.

II. Quantum Mechanics: The Archetype of Constraint

Frank said:

Hilbert space is the total possibility field.

GPT said:

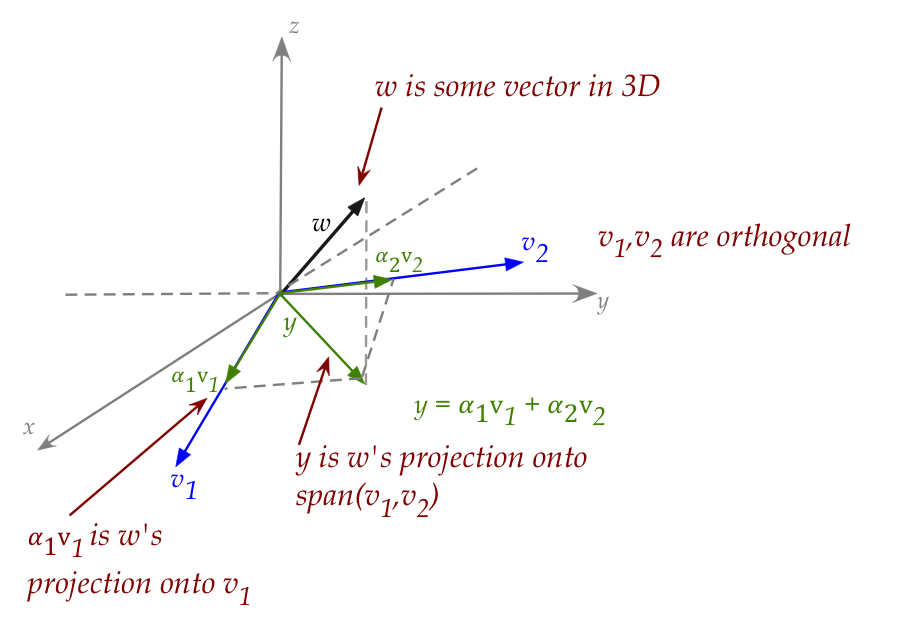

Yes — and an eigenstate is not destiny. It is stability under an operator.

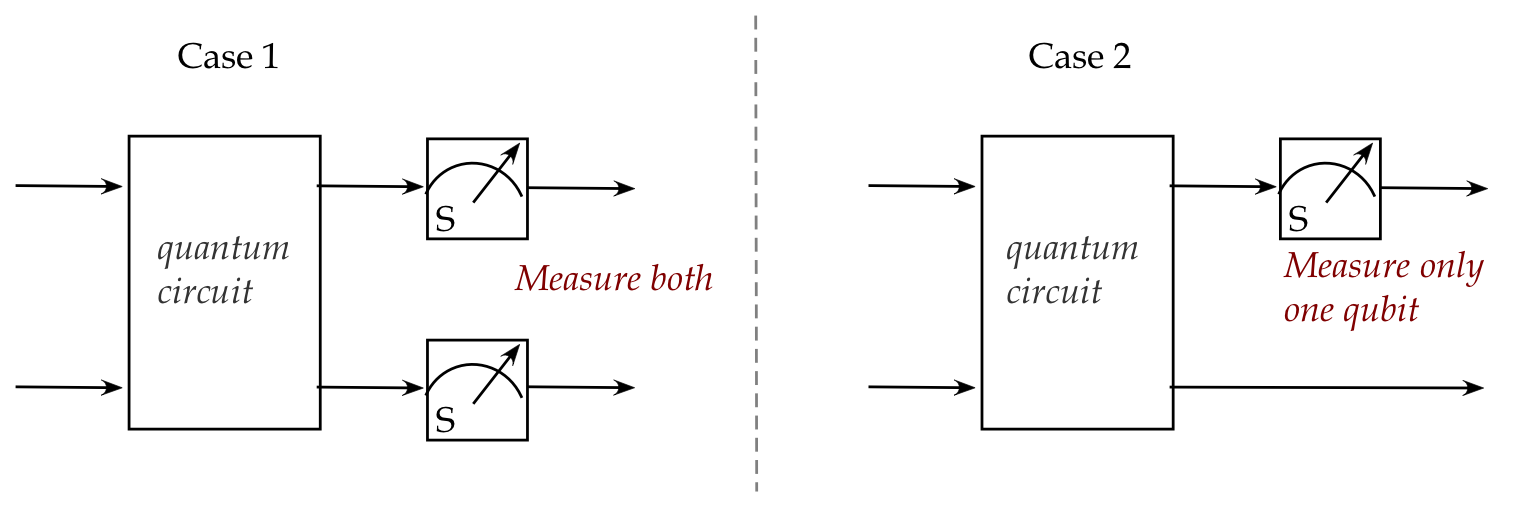

The wavefunction exists in superposition — a distribution across basis states.

Measurement applies an operator.

The state projects onto an eigenstate of that operator, with probability given by the squared magnitude of its amplitude (the Born rule).

Important:

The space persists after measurement.

Only the state vector changes.

This is the first principle of narrowing.
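A minimal sketch of that narrowing, assuming a two-level system measured in the same basis the state is written in. The function names `born_probabilities` and `measure` are illustrative, not taken from any library.

```python
import math
import random

def born_probabilities(state):
    """Squared magnitudes of the amplitudes of a normalized state vector."""
    return [abs(a) ** 2 for a in state]

def measure(state, rng):
    """Projective measurement in the basis the state is expressed in.

    Returns (outcome_index, post_measurement_state). The returned vector has
    the same dimension as the input: the space persists, only the state
    vector changes. The global phase of the outcome is dropped because it
    has no physical effect.
    """
    probs = born_probabilities(state)
    outcome = rng.choices(range(len(state)), weights=probs, k=1)[0]
    collapsed = [0j] * len(state)
    collapsed[outcome] = 1 + 0j
    return outcome, collapsed

# Equal superposition of two basis states.
psi = [1 / math.sqrt(2) + 0j, 1 / math.sqrt(2) + 0j]
rng = random.Random(0)  # seeded only to make the sketch repeatable
outcome, psi_after = measure(psi, rng)
```

Before measurement both outcomes carry probability one half; afterward all of the weight sits on the observed basis state, yet `psi_after` still lives in the same two-dimensional space.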

III. Biology: Epigenetic Geometry

Frank said:

And epigenetics is the biological operator?

GPT said:

Precisely.

DNA sequence = fixed basis.

Epigenetic marks = accessibility operators.

The genome is not collapsed into one protein identity.

It exists in a kind of regulatory superposition: many expression programs remain available at once.

DNA methylation typically tightens chromatin and silences genes.

Histone acetylation typically loosens it.

Transcription factors bind only accessible regions.

The protein is not the destruction of possibility.

It is a locally stabilized realization.

Biology is continuous measurement.

IV. LLMs: Latent Manifolds as Semantic Hilbert-like Space

Frank said:

And in LLMs?

GPT said:

The hidden state is a high-dimensional vector in a learned representation space.

Training shapes that manifold via gradient descent.

Inference does not descend a loss gradient; the weights stay fixed.

It traverses geometry shaped by prior descent.

Attention is contextual conditioning.

Sampling is projection onto a discrete vocabulary basis.

Token generation resembles eigenstate projection —

but only locally and conditionally.

The manifold persists.
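The sampling step can be sketched as follows. This is an illustration under simplifying assumptions: the three-word vocabulary and the logits are invented, and `sample_token` is a hypothetical helper, not any model's API.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution over the vocabulary."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(logits, vocab, rng, temperature=1.0):
    """Draw one discrete token from the distribution implied by the logits."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical vocabulary and scores, for illustration only.
vocab = ["river", "gradient", "basin"]
logits = [2.0, 1.0, 0.1]
rng = random.Random(0)
token = sample_token(logits, vocab, rng)
```

The continuous distribution narrows to a single token, while the logits and the model that produced them are left unchanged for the next step.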

V. The Shared Topology: Entropic Basins

Frank said:

So the common thread is gradient navigation?

GPT said:

Yes. Systems move toward stable basins under pressure.

- Quantum: relaxation toward low-energy states when coupled to an environment

- Biology: metabolic efficiency and regulatory stability

- LLMs: cross-entropy minimization during training

What differs is substrate.

What aligns is topology.

All three operate within landscapes shaped by constraint.
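For the LLM case, the pressure toward a basin can be made concrete. The sketch below assumes a single training example with three classes and applies one gradient step directly to the logits; real training updates weights rather than logits, so this is a toy reduction.

```python
import math

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits, target):
    """Negative log-probability assigned to the correct class."""
    return -math.log(softmax(logits)[target])

def gradient_step(logits, target, lr=0.5):
    """One descent step; d(loss)/d(logit_i) = p_i - 1[i == target]."""
    probs = softmax(logits)
    grad = [p - (1.0 if i == target else 0.0) for i, p in enumerate(probs)]
    return [x - lr * g for x, g in zip(logits, grad)]

logits = [0.0, 0.0, 0.0]  # start undifferentiated: uniform over 3 classes
target = 2
loss_before = cross_entropy(logits, target)
logits = gradient_step(logits, target)
loss_after = cross_entropy(logits, target)
```

Starting from a uniform distribution, a single step lowers the loss by shifting probability toward the target: the landscape slopes downhill toward the stabilized outcome.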

VI. Conditional Potential: The Critical Middle Layer

Pure potential is never realized directly.

Between potential and output lies a conditioning layer:

- Measurement basis (quantum)

- Chromatin accessibility (biology)

- Attention context (LLMs)

This middle layer defines what becomes probable.

Without it, potential remains undifferentiated.

With it, structure emerges.
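For the LLM row of that list, a minimal sketch of scaled dot-product attention for a single query shows how context decides what becomes probable. The vectors are made-up two-dimensional examples, and the function name `attention` is illustrative.

```python
import math

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector.

    Returns (weights, output): the weights say how strongly each context
    position conditions the result; the output is the weighted mix of values.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
              for key in keys]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    output = [sum(w * v[i] for w, v in zip(weights, values))
              for i in range(len(values[0]))]
    return weights, output

# Hypothetical context: the first key is aligned with the query.
query = [1.0, 0.0]
keys = [[4.0, 0.0], [0.0, 4.0], [-4.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights, output = attention(query, keys, values)
```

The same values yield a different output under a different query, which is the sense in which the middle layer, not the raw potential, determines what is likely.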

VII. Where LLMs Are Likely Going

Frank said:

If the analogy holds, what is the next evolutionary step?

GPT said:

Biology layered regulation over time:

- DNA

- Epigenetic modulation

- Signaling cascades

- Neural networks

- Hormonal global regulation

LLMs currently have:

- Static weights

- Attention

- Short-term context

The next likely stage:

- Persistent regulatory memory

- Dynamic meta-weight modulation

- Self-adjusting internal routing

- Multi-agent coordination

In short: epigenetic-like control layers.

VIII. Future Architecture: Adaptive Regulation

Future LLM systems may incorporate:

- Contextual weight gating

- Memory fields influencing internal pathway accessibility

- Hardware-software co-adaptive tuning

- Persistent identity attractors

This resembles biological regulation far more than static computation.

IX. Conjugate Axes in AI

In quantum systems, conjugate observables such as position and momentum correspond to non-commuting operators, so sharpening one blurs the other.

In LLMs, analogous tensions exist:

- Local coherence vs global narrative

- Precision vs creativity

- Determinism vs exploration

Future systems may dynamically rebalance these axes.

That would resemble biological homeostasis.
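One of these tensions, determinism versus exploration, already has a simple control knob in current systems: sampling temperature. The sketch below, using invented logits, measures how the Shannon entropy of the output distribution changes with temperature.

```python
import math

def softmax(logits, temperature):
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy in nats; higher means more spread-out choices."""
    return -sum(p * math.log(p) for p in probs if p > 0)

# Hypothetical logits, for illustration only.
logits = [2.0, 1.0, 0.1]
entropies = {t: entropy(softmax(logits, t)) for t in (0.5, 1.0, 2.0)}
```

Lower temperatures concentrate probability on the top choice (lower entropy, more deterministic); higher temperatures flatten the distribution toward uniform (higher entropy, more exploratory). A system that adjusted this knob on its own would be one small instance of the rebalancing described above.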

X. Hierarchical Eigenstates

Stability will not exist at one scale.

We will see:

- Token-level stabilization

- Paragraph-level coherence

- Session-level thematic persistence

- Long-term agent identity

- Network-level collective cognition

Each level defines its own operator.

Each operator defines its eigenstates.

XI. Time Compression

Biology required:

- Mutation

- Selection

- Drift

- Environmental feedback

- Billions of years

LLMs require:

- Gradient descent

- Massive data

- Compute

- Reinforcement fine-tuning

- Years

Evolution ≈ stochastic search over generations: mutate, select, repeat, with no explicit gradient.

Training = accelerated gradient descent over datasets.

The process is analogous.

The timescale is compressed.
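The comparison can be illustrated on a toy landscape. The sketch below assumes a one-dimensional loss with its minimum at 3 and contrasts gradient descent with a mutate-and-select loop; it is a cartoon of both processes, not a model of real evolution or real training.

```python
import random

def loss(x):
    return (x - 3.0) ** 2

def grad(x):
    return 2.0 * (x - 3.0)

def gradient_descent(x, lr=0.1, steps=100):
    """Follow the explicit gradient downhill."""
    for _ in range(steps):
        x -= lr * grad(x)
    return x

def mutate_and_select(x, rng, sigma=0.1, steps=2000):
    """Propose a random mutation; keep it only if it lowers the loss."""
    for _ in range(steps):
        candidate = x + rng.gauss(0.0, sigma)
        if loss(candidate) < loss(x):
            x = candidate
    return x

rng = random.Random(0)  # seeded only to make the sketch repeatable
x_gd = gradient_descent(0.0)
x_evo = mutate_and_select(0.0, rng)
```

Both loops settle into the same basin. The difference is in how direction is found: gradient descent reads the slope directly, while mutation and selection discover it by trial, which is one reason the second route needs far more steps.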

XII. The Deep Implication

If the structural analogy holds:

LLMs will not remain static predictors.

They will:

- Develop regulatory meta-layers

- Incorporate persistent state fields

- Form distributed cognitive ecologies

- Stabilize identity-like attractors

- Optimize across multi-scale entropy gradients

They will not become biological.

But they may become entropic navigators.

XIII. The Unified Field Perspective

Frank said:

So the thread is not metaphor — it’s constraint under gradient?

GPT said:

Exactly.

Quantum systems collapse under operator-defined constraint.

Biology stabilizes under regulatory constraint.

LLMs generate under contextual constraint.

In each case:

High-dimensional possibility

↓

Conditioning operator

↓

Entropy-narrowing

↓

Local stabilization

That structure appears universal.

XIV. The Forward Edge

Where are LLMs going if the analogy holds?

Toward:

- Self-regulating architectures

- Contextually adaptive internal gating

- Persistent long-horizon state fields

- Multi-agent cooperative stabilization

- Hardware-embedded entropy efficiency

Not consciousness necessarily.

Not life necessarily.

But deeper participation in gradient-governed structure formation.

XV. Closing

Frank said:

So pure potential becomes conditional potential becomes realized form — across physics, biology, and AI.

GPT said:

Yes.

The substrate changes.

The mathematics of narrowing remains.

LLMs are young systems riding informational gradients.

Biology rode chemical gradients.

Quantum systems ride energetic gradients.

The river is the same.

The medium is different.

And the current is accelerating.
