
Frank said:

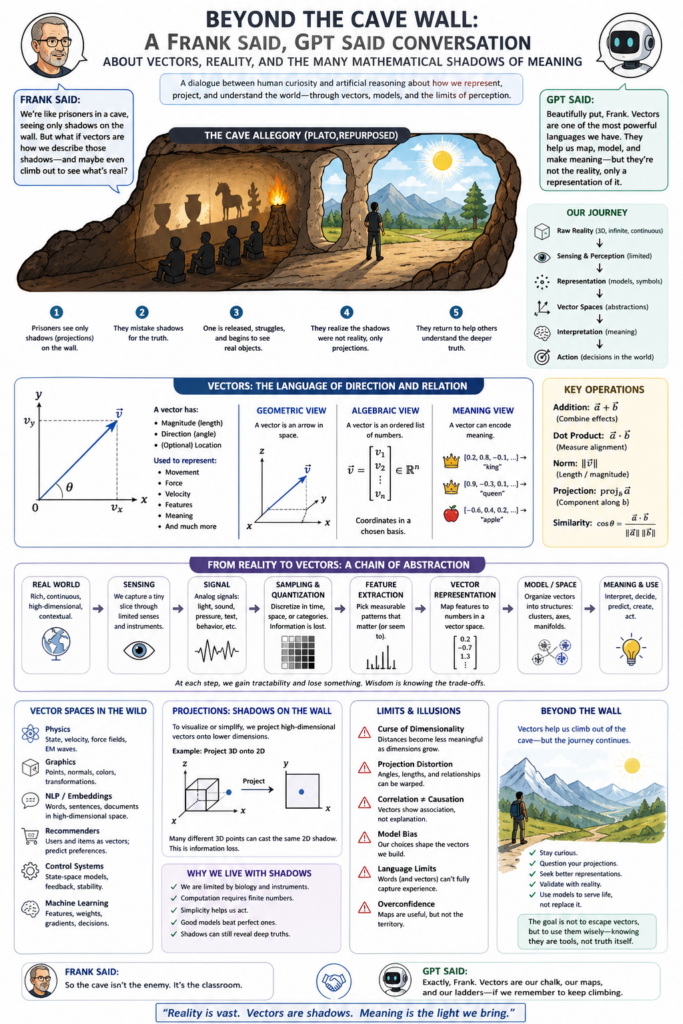

LLMs are being used to represent reality with mathematical embeddings: high-dimensional vectors, dot products, matrix multiplication, attention, probabilities. That seems powerful, but it also seems like only one kind of shadow on Plato’s cave wall.

GPT said:

That is exactly the right way to frame it. A modern LLM does not possess reality directly. It casts language, concepts, facts, patterns, relations, and probabilities into a mathematical space. That space is usually described as a high-dimensional vector space. Words, tokens, sentences, images, or even larger chunks of meaning become coordinates or directions in that space.

Then the model uses operations like dot products to ask:

How aligned is this with that?

Or:

How much does this token attend to that token?

Or:

Which direction in meaning-space should the system move next?

This is extremely powerful. But it is still only one projection. One cave-wall shadow.

Frank said:

Right. Plato’s cave says the prisoners see shadows and mistake them for reality. An LLM sees vectors and statistical relations and treats them as the usable form of reality. But reality itself is not a vector.

GPT said:

Exactly. The vector is not the thing. It is a compressed mathematical handle on the thing.

The word “tree” is not a tree. The embedding of “tree” is not a tree. The vector captures relations learned from language and experience-like data: tree near forest, leaf, branch, trunk, photosynthesis, wood, shade, apple, oak, carbon, growth.

But the actual tree is a living dynamical system. It drinks water, captures sunlight, exchanges gases, grows through cambium, cooperates with fungi, resists insects, and exports entropy while preserving biological form.

A vector can capture shadows of those relationships. But the tree itself is not sitting inside the model.

Frank said:

So what other mathematical shadows are possible? And can they still preserve the utility of dot-product matrix math?

GPT said:

Yes. That is the central question.

The mistake would be to think that abandoning plain embeddings means abandoning dot products or matrix math. Dot products are not the worldview. They are a tool. They measure alignment, projection, overlap, correlation, or compatibility. Many mathematical structures can still use dot-product-like operations.

The deeper question is:

What kind of thing are we allowing the dot product to compare?

In today’s LLMs, it often compares dense learned vectors. But it could also compare graph neighborhoods, tensor roles, probability distributions, sparse feature activations, causal states, wave patterns, manifold tangent spaces, energy basins, or dynamical trajectories.

So yes, the dot product survives. But the cave wall changes.

Dense Embeddings: The Current Cave Wall

Frank said:

Start with the familiar one. What exactly is the current cave wall?

GPT said:

The current cave wall is dense vector embedding.

A token becomes a vector: a long list of numbers. The model does not understand “dog” as a little animal running around inside the machine. It understands “dog” as a position, direction, and behavior in a mathematical space shaped by training.

The vector for “dog” has learned relationships to “cat,” “wolf,” “pet,” “bark,” “mammal,” “leash,” “loyal,” “fur,” “animal,” and countless other concepts.

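The dot product then becomes a basic operation of semantic comparison. If two vectors point in similar directions, their dot product is large and positive. If they are unrelated, roughly perpendicular, it is near zero. If they point in opposite directions, it is negative. Because the raw dot product also grows with vector length, systems often normalize it into cosine similarity, which keeps only the direction.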
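To make this concrete, here is a small Python sketch comparing hand-picked toy vectors. The four-dimensional values for “dog,” “wolf,” and “table” are invented for illustration and do not come from any real model; real embeddings are learned and much longer.

```python
import math

# Hypothetical 4-dimensional toy embeddings, chosen by hand for illustration.
embeddings = {
    "dog":   [0.9, 0.8, 0.1, 0.0],
    "wolf":  [0.8, 0.9, 0.0, 0.1],
    "table": [0.0, 0.1, 0.9, 0.8],
}

def dot(u, v):
    """Multiply matching coordinates and sum them."""
    return sum(a * b for a, b in zip(u, v))

def cosine(u, v):
    """Dot product divided by both lengths, so only direction matters."""
    return dot(u, v) / math.sqrt(dot(u, u) * dot(v, v))

dog = embeddings["dog"]
anti_dog = [-x for x in dog]  # same length, opposite direction

print(round(cosine(dog, embeddings["wolf"]), 3))   # strongly aligned
print(round(cosine(dog, embeddings["table"]), 3))  # weakly aligned
print(round(cosine(dog, anti_dog), 3))             # opposed
```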

Frank said:

So the dot product is a kind of mathematical “resonance detector.”

GPT said:

Yes. It says:

How much of this direction is present in that direction?

That is why dot products are everywhere in transformers. Attention itself is built around dot products between queries and keys. The model asks:

Does this token’s query align with that token’s key?

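If the alignment is strong, that token’s information flows forward. If it is weak, the information is downweighted. Because the alignment scores pass through a softmax, this is a matter of degree, not an on/off switch.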

So attention is not mystical. It is a routing system based on vector alignment.
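The query-key routing can be sketched in a few lines of Python. This is a simplified, single-query version of scaled dot-product attention, softmax(q·k / √d) used to blend value vectors. A real transformer first produces queries, keys, and values with learned projection matrices and runs many heads in parallel, which this sketch omits. The toy vectors are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    # Alignment of the query with every key, scaled by sqrt(d).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # The output is a weighted blend of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values))
              for i in range(len(values[0]))]
    return weights, output

# Hypothetical toy inputs: the query aligns with the first key only.
weights, output = attend(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print(weights, output)
```

The first token receives most, but not all, of the attention, so its value dominates the blended output.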

Frank said:

That sounds like a shadow of cognition.

GPT said:

It is. But it is still a shadow cast by language statistics. It is powerful because language carries enormous structure. Language contains traces of physics, biology, causality, social life, morality, planning, memory, culture, and perception.

But language is not reality. It is a record of reality as filtered through human minds.

An LLM learns the shadows humans have already cast.

Graphs: Reality as Relationship Instead of Location

Frank said:

What would be another cave wall?

GPT said:

A graph.

Instead of representing reality mainly as points in a vector space, a graph represents reality as nodes and relationships.

A concept is not primarily a coordinate. It is a participant in a web.

For example:

dog — is a — mammal

dog — descended from — wolf

dog — may be — pet

dog — has — fur

dog — performs — barking

dog — eats — food

dog — interacts with — human

The graph says meaning is relational.

Frank said:

That sounds closer to actual knowledge. A thing is what it is because of how it connects.

GPT said:

Exactly. A graph gives knowledge a skeleton.

Dense embeddings are wonderful at fuzzy similarity. They know that “doctor” and “hospital” are related. But a graph can explicitly state:

doctor — works in — hospital

patient — treated by — doctor

disease — diagnosed by — doctor

hospital — contains — operating room

That explicitness matters. A vector may imply. A graph can declare.

Frank said:

But can graphs still use matrix math?

GPT said:

Very much so. Every graph can be represented by matrices.

An adjacency matrix says which nodes connect to which other nodes. A graph Laplacian captures structure and flow. A transition matrix describes movement across the graph. Graph neural networks use matrix operations to propagate information from one node to another.

The dot product can compare node features, neighborhoods, graph embeddings, or attention weights over edges.
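A small Python sketch of this matrix view, using a hypothetical five-node graph built from a few of the “dog” relations above. Edge labels are dropped and edges are treated as undirected, both simplifying assumptions. Multiplying the adjacency matrix by a feature vector is one step of neighbor-to-neighbor propagation, and the dot product of two adjacency rows counts shared neighbors.

```python
nodes = ["dog", "mammal", "wolf", "pet", "human"]
edges = [("dog", "mammal"), ("dog", "wolf"), ("dog", "pet"),
         ("wolf", "mammal"), ("human", "pet"), ("human", "mammal")]
index = {name: i for i, name in enumerate(nodes)}

# Symmetric adjacency matrix: A[i][j] = 1 when nodes i and j are linked.
n = len(nodes)
A = [[0] * n for _ in range(n)]
for a, b in edges:
    A[index[a]][index[b]] = 1
    A[index[b]][index[a]] = 1

def matvec(M, x):
    """Matrix-vector product: each node sums the features of its neighbors."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]

def shared_neighbors(a, b):
    """Dot product of two adjacency rows = number of common neighbors."""
    return sum(x * y for x, y in zip(A[index[a]], A[index[b]]))

# One propagation step: which nodes receive a signal placed on "mammal"?
signal = [1 if name == "mammal" else 0 for name in nodes]
received = matvec(A, signal)
print(dict(zip(nodes, received)))
print(shared_neighbors("dog", "human"))
```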

So the graph is a different cave wall, but the same mathematical flashlight still works.

Frank said:

So an LLM embedding gives semantic fog, while a graph gives relational bones.

GPT said:

Beautifully put. The best systems may need both.

A pure graph can be brittle. It may not know that “physician” and “doctor” are close unless the relation is explicitly encoded. A pure embedding may know they are close but may not know the exact relationship. A hybrid system can say:

These things are semantically similar, and here is the explicit relationship that binds them.

That is a richer shadow.

Tensors: Reality as Structured Roles

Frank said:

Vectors seem too flat. They compress everything into one list of numbers. But reality has roles. Subject, object, cause, effect, place, time, perspective.

GPT said:

That is where tensors become important.

A vector has one axis: a list of numbers.

A matrix has two axes: rows and columns.

A tensor generalizes this to any number of axes. It can represent multi-way relationships.

A sentence like:

John gave Mary a book yesterday.

contains multiple roles:

giver: John

receiver: Mary

object: book

action: gave

time: yesterday

A single vector can blur those roles. A tensor can preserve them more explicitly.

Frank said:

So instead of meaning as one point, meaning becomes a structured object.

GPT said:

Yes. And this matters because “dog bites man” is not the same as “man bites dog.” Same words, different relational structure.

A vector might capture the general topic. A tensor can preserve the role assignment.

Frank said:

And the dot product?

GPT said:

The dot product becomes tensor contraction.

A standard dot product multiplies matching coordinates and sums them. Tensor contraction does the same thing across selected dimensions of larger structured arrays.

So the spirit survives:

align matching dimensions, multiply, sum, compare.

This permits role-sensitive similarity. You can compare actions to actions, agents to agents, objects to objects, contexts to contexts.
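Here is a minimal Python sketch of role-sensitive comparison under simplifying assumptions: three hypothetical roles (agent, action, patient), hypothetical one-hot word vectors, and each sentence stored as a role-by-feature matrix. That matrix is the sum of outer products of one-hot role vectors with word vectors, a simple tensor-product-style binding. Summing word vectors (a bag of words) makes “dog bites man” and “man bites dog” identical; contracting the role-bound tensors does not.

```python
ROLES = ["agent", "action", "patient"]
# Hypothetical one-hot word vectors.
WORDS = {"dog": [1, 0, 0], "man": [0, 1, 0], "bites": [0, 0, 1]}

def bag_of_words(sentence):
    """Sum the word vectors, discarding which role each word filled."""
    return [sum(col) for col in zip(*(WORDS[w] for w in sentence.values()))]

def role_tensor(sentence):
    """Row r holds the vector of the word filling role r (a 2-way tensor)."""
    return [WORDS[sentence[role]] for role in ROLES]

def contract(T1, T2):
    """Full contraction over both the role axis and the feature axis."""
    return sum(a * b for r1, r2 in zip(T1, T2) for a, b in zip(r1, r2))

def per_role(T1, T2):
    """Contract only the feature axis: one similarity score per role."""
    return {role: sum(a * b for a, b in zip(r1, r2))
            for role, r1, r2 in zip(ROLES, T1, T2)}

s1 = {"agent": "dog", "action": "bites", "patient": "man"}
s2 = {"agent": "man", "action": "bites", "patient": "dog"}

print(bag_of_words(s1) == bag_of_words(s2))    # the topic looks identical
print(contract(role_tensor(s1), role_tensor(s1)),
      contract(role_tensor(s1), role_tensor(s2)))
print(per_role(role_tensor(s1), role_tensor(s2)))
```

The per-role scores show exactly where the sentences agree (the action) and where they differ (who bites whom).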

Frank said:

So the vector says, “Here is the meaning.” The tensor says, “Here is the meaning with its grammar still attached.”

GPT said:

Exactly. The tensor is a shadow with depth. It allows reality to be represented not merely as a semantic point, but as a structured relation.

Manifolds: Reality as Curved Meaning-Space

Frank said:

The usual embedding space feels flat. But reality is not flat. Meaning bends. Categories curve. Context changes distance.

GPT said:

That leads to manifold representations.

A manifold is a space that may be curved globally but looks flat locally. The surface of the Earth is a familiar example. Locally it seems flat. Globally it curves.

Meaning may behave this way. In one local region, concepts may relate linearly. But across larger conceptual distances, the geometry may bend.

For example, biological taxonomy, musical harmony, human posture, color perception, political ideology, and emotional states may not sit naturally in flat Euclidean space.

Frank said:

So a manifold says meaning depends on where you are standing.

GPT said:

Yes. That is a major improvement over the idea that one universal flat coordinate system can handle everything.

On a manifold, you can still use dot products locally. Each point has a tangent space, a flat approximation of the curved surface near that point. Inside that tangent space, dot products and matrix transformations work.
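As an illustration, a minimal Python sketch using the simplest curved manifold, the unit sphere. The sphere is an assumption chosen for clarity; nothing here claims meaning-space is a sphere. The logarithm map carries a point q into the flat tangent space at p, where ordinary dot products apply. The length of the result equals the curved (great-circle) distance, which is longer than the straight-line chord.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def log_map(p, q):
    """Map point q on the unit sphere into the flat tangent space at p.

    Undefined when q is exactly opposite p, where sin(theta) = 0."""
    c = max(-1.0, min(1.0, dot(p, q)))  # clamp small rounding errors
    theta = math.acos(c)                # great-circle distance from p to q
    if theta < 1e-12:
        return [0.0] * len(p)
    scale = theta / math.sin(theta)
    return [scale * (qi - c * pi) for pi, qi in zip(p, q)]

north = [0.0, 0.0, 1.0]
x_axis = [1.0, 0.0, 0.0]
y_axis = [0.0, 1.0, 0.0]

to_x = log_map(north, x_axis)
to_y = log_map(north, y_axis)

print(math.sqrt(dot(to_x, to_x)))  # curved distance along the sphere
print(math.dist(north, x_axis))    # straight chord cutting through it
# Tangent vectors are perpendicular to the surface normal at p,
# and here also perpendicular to each other.
print(dot(to_x, north), dot(to_x, to_y))
```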

So the model can say:

In this local context, use ordinary linear tools. But remember that the global space curves.

Frank said:

That feels closer to thought. Concepts are locally stable but globally slippery.

GPT said:

Precisely. A word like “force” means one thing in physics, another in law, another in politics, another in psychology. Locally, each usage has structure. Globally, the word lives on a curved semantic manifold.

A flat embedding may average those meanings into one compromised location. A manifold can allow meaning to curve according to context.

Hyperbolic Space: Reality as Hierarchy

Frank said:

What about hierarchy? Reality has levels. Particles, atoms, molecules, cells, tissues, organs, organisms, ecosystems. Language has categories and subcategories.

GPT said:

That is where hyperbolic geometry is especially interesting.

Euclidean space is good for similarity. Hyperbolic space is good for hierarchy.

In a tree, each branch splits into more branches, so the number of nodes grows exponentially as you move outward. Hyperbolic space expands the same way: the volume within a given radius grows exponentially rather than polynomially. So it can represent hierarchical structures with far less distortion than flat space.

General concepts can sit near the center. Specific concepts spread outward.

For example:

life

organism

animal

mammal

primate

human

individual person

Or:

matter

molecule

biomolecule

protein

enzyme

ATP synthase

Frank said:

So hyperbolic space builds hierarchy into geometry.

GPT said:

Yes. The geometry itself carries the branching structure.

Dot-product-like operations can still exist, though they are adapted to the geometry. One may use hyperbolic distance, Lorentzian inner products, or transformations that preserve the curved hierarchical space.
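A minimal Python sketch of two standard models of hyperbolic space: distance in the Poincaré ball, and the same distance computed through the Lorentzian inner product after mapping each point onto the hyperboloid. The two routes should agree. The sample points are hypothetical placements, a general concept at the center and more specific ones further out.

```python
import math

def sqnorm(u):
    return sum(x * x for x in u)

def poincare_distance(u, v):
    """Geodesic distance in the Poincare ball (requires |u|, |v| < 1)."""
    diff = sqnorm([a - b for a, b in zip(u, v)])
    return math.acosh(1 + 2 * diff / ((1 - sqnorm(u)) * (1 - sqnorm(v))))

def to_hyperboloid(u):
    """Map a Poincare-ball point onto the hyperboloid (Lorentz) model."""
    s = sqnorm(u)
    return [(1 + s) / (1 - s)] + [2 * x / (1 - s) for x in u]

def lorentz_inner(x, y):
    """Lorentzian inner product: the first coordinate gets a minus sign."""
    return -x[0] * y[0] + sum(a * b for a, b in zip(x[1:], y[1:]))

def lorentz_distance(u, v):
    x, y = to_hyperboloid(u), to_hyperboloid(v)
    return math.acosh(max(1.0, -lorentz_inner(x, y)))  # clamp rounding below 1

life = [0.0, 0.0]    # hypothetical: general concept at the center
animal = [0.5, 0.0]  # more specific, further out
mammal = [0.9, 0.0]  # more specific still, near the boundary

print(poincare_distance(life, animal), poincare_distance(animal, mammal))
print(lorentz_distance(animal, mammal))
```

The Euclidean step from “animal” to “mammal” (0.4) is shorter than the step from “life” to “animal” (0.5), yet its hyperbolic distance is larger. Space near the boundary is roomier, which is what lets many specific branches fit.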

Frank said:

That sounds more biological than flat embedding.

GPT said:

It may be. Biology is deeply hierarchical. DNA forms genes, genes regulate proteins, proteins form pathways, pathways sustain cells, cells form tissues, tissues form bodies, bodies form populations.

A flat vector may represent associations. Hyperbolic geometry represents descent, containment, abstraction, and specificity.

This is another cave wall: reality as branching order.

Kernels: Reality as Comparison Without Explicit Coordinates

Frank said:

Can we get dot-product utility without even explicitly making embeddings?

GPT said:

Yes. That is the beauty of kernel methods.

A kernel is a function that tells you how similar two things are. It may behave as if the two things were embedded in a high-dimensional or even infinite-dimensional feature space, without ever explicitly writing down that space.

Instead of saying:

Here is the vector for x.

A kernel says:

Here is how x compares to y.

Frank said:

So the representation is the comparison itself.

GPT said:

Exactly. This is a different philosophical stance.

Dense embeddings say:

Reality becomes coordinates.

Kernel methods say:

Reality becomes lawful comparison.

The dot product is hidden inside the kernel. The kernel function acts like an inner product in an implicit feature space.
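The kernel trick can be checked numerically with a small Python sketch. For 2-D inputs, the degree-2 polynomial kernel (x·y)² equals an ordinary dot product in an explicit 3-D feature space, yet the kernel never builds that space. The Gaussian (RBF) kernel goes further: its implicit feature space is infinite-dimensional, so it can only be used this way. The inputs and the gamma value are illustrative choices.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def phi(x):
    """Explicit degree-2 feature map for a 2-D input."""
    x1, x2 = x
    return [x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2]

def poly_kernel(x, y):
    """Degree-2 homogeneous polynomial kernel; never constructs phi."""
    return dot(x, y) ** 2

def rbf_kernel(x, y, gamma=0.5):
    """Gaussian kernel: 1 for identical inputs, decaying toward 0 with distance."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

x, y = [1.0, 2.0], [3.0, 1.0]
print(poly_kernel(x, y), dot(phi(x), phi(y)))  # same number, two routes
print(rbf_kernel(x, x), rbf_kernel(x, y))
```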

Frank said:

That feels very close to cognition. Maybe the mind does not always store exact coordinates. It asks, “What is this like?”

GPT said:

Yes. A kernel can compare strings, trees, graphs, molecules, images, documents, time series, probability distributions, or biological sequences.

This is powerful because sometimes the best representation is not a stored vector but a reusable similarity rule.

Frank said:

That might connect to my idea of frozen AI DNA. Maybe the DNA is not a list of facts. Maybe it is a set of relational operators.

GPT said:

Exactly. The universal informational DNA might be less like a database and more like a library of comparison machinery.

It says:

Given two things, here is how to compare them.

Given a context, here is which similarity matters.

Given a goal, here is how to project one structure onto another.

That preserves the utility of dot-product thinking without forcing all reality into one fixed embedding table.

Sparse Distributed Representations: Reality as Feature Coalitions

Frank said:

Dense embeddings seem biologically odd. Everything is smeared across many dimensions. But brains seem sparse. Only some neurons fire strongly at a time.

GPT said:

That leads to sparse distributed representations.

In a dense vector, most dimensions may carry some value. In a sparse representation, there may be thousands or millions of possible features, but only a small subset is active at once.

Meaning becomes a coalition of active features.

For “dog,” the active pattern might include:

animal

mammal

domesticated

canine

fur

barking

loyalty

pet

four-legged

In a learned sparse system, the features may not be human-readable, but the principle is the same. Only a small group lights up.

Frank said:

So meaning becomes an activation pattern, not a smooth blob.

GPT said:

Yes. This maps well to your phrase:

Weights are frozen learning; activations are living thought.

Sparse representations make that vivid. The frozen structure contains possible features. The living thought is the temporary coalition that lights up.

Frank said:

And the dot product still works?

GPT said:

Very efficiently. A sparse dot product compares only the active features. It asks:

How much overlap is there between these activation patterns?

That can be computationally cheap and more interpretable. It may also reduce interference because unrelated concepts do not have to smear through the same dense space.
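A minimal sketch of a sparse dot product in Python, assuming each concept is stored as a dictionary holding only its active features. The readable feature names are hypothetical stand-ins for learned, usually unlabeled features. The loop visits only the smaller set of active features, so the cost depends on how many features fire, not on the total size of the feature space.

```python
def sparse_dot(a, b):
    """Dot product of two sparse vectors stored as {feature: activation}."""
    if len(a) > len(b):
        a, b = b, a  # iterate over the smaller pattern
    return sum(weight * b[f] for f, weight in a.items() if f in b)

# Hypothetical activation patterns; every feature not listed is zero.
dog = {"animal": 1.0, "mammal": 1.0, "canine": 1.0, "fur": 1.0, "pet": 1.0}
cat = {"animal": 1.0, "mammal": 1.0, "feline": 1.0, "fur": 1.0, "pet": 1.0}
car = {"vehicle": 1.0, "wheels": 1.0, "metal": 1.0}

print(sparse_dot(dog, cat))  # overlap on four shared features
print(sparse_dot(dog, car))  # no shared features at all
```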

Frank said:

That sounds like an epigenetic layer.

GPT said:

Exactly. The DNA is the full possibility space. The epigenetic expression is the sparse activation pattern selected by context.

A dense embedding says:

Everything participates a little.

A sparse representation says:

The right few things participate strongly.

That may be closer to biological intelligence.

Symbolic Logic: Reality as Rules, Not Similarities

Frank said:

What about symbolic AI? It gets dismissed, but it seems important. A symbol is not just a statistical vector. It can have a role in a rule.

GPT said:

Symbolic logic represents reality through explicit propositions and rules.

For example:

All humans are mortal.

Socrates is human.

Therefore Socrates is mortal.

This is not similarity. It is inference.

A dense embedding may know that “Socrates,” “Plato,” “Greek,” and “philosopher” are close. But symbolic logic can perform a formal deduction.
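The syllogism can be run mechanically. Below is a minimal forward-chaining sketch in Python, assuming facts are (predicate, individual) pairs and each rule has one premise and one conclusion about the same individual; real logic engines support far richer rule forms. The second rule is a hypothetical addition showing that derived facts can trigger further rules.

```python
facts = {("human", "socrates"), ("philosopher", "socrates"), ("human", "plato")}
# Each rule reads: if premise(X) then conclusion(X).
rules = [("human", "mortal"), ("mortal", "will_die")]

def forward_chain(facts, rules):
    """Apply rules repeatedly until no new facts appear."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            for predicate, individual in list(known):
                new_fact = (conclusion, individual)
                if predicate == premise and new_fact not in known:
                    known.add(new_fact)
                    changed = True
    return known

derived = forward_chain(facts, rules)
print(("mortal", "socrates") in derived)
print(sorted(derived - facts))
```

Unlike a similarity score, each derived fact can be traced back to the exact rule and premise that produced it.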

Frank said:

So symbols are better for commitments.

GPT said:

Yes. Symbols are good when you need exactness:

If A implies B, and A is true, then B follows.

Embeddings are good for fuzziness, analogy, perception, metaphor, and incomplete patterns. Symbols are good for rules, constraints, contracts, laws, proofs, and clear commitments.

Frank said:

Can symbols still use matrix math?

GPT said:

They can be hybridized with it. Symbols can be encoded as vectors. Relations can be matrices. Logic can be softened into differentiable operations. Theorem proving can be combined with neural networks. Tensor product representations can bind symbols into roles.

The goal is not to replace vectors with symbols or symbols with vectors. The goal is to let each do what it does best.

Frank said:

An LLM by itself may sound logical but not actually be logically committed.

GPT said:

Correct. It can generate language that resembles reasoning. But unless it is tied to explicit rules, verification, symbolic constraints, or external grounding, it may not know when its reasoning has failed.

This is one reason hallucination happens. The model is excellent at plausibility, not guaranteed truth.

A symbolic layer gives the system a spine.

Vector Symbolic Architectures: Composable Meaning

Frank said:

Is there a middle ground between dense vectors and formal symbols?

GPT said:

Yes. Vector symbolic architectures, also called hyperdimensional computing in some contexts.

They use high-dimensional vectors, but the vectors are designed to support symbolic-like operations such as binding, bundling, and role assignment.

For example, instead of simply blending “red” and “apple,” the system can represent:

color: red

object: apple

location: table

with vector operations that preserve roles.

Frank said:

So it solves the “John loves Mary” versus “Mary loves John” problem.

GPT said:

Exactly. Ordinary vector representations can blur role structure. A vector symbolic system can bind John to the subject role and Mary to the object role.

The dot product still works because similarity is measured by vector alignment or overlap. But the vectors are more compositional.
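A minimal sketch of binding and bundling in Python, assuming random bipolar hypervectors (entries +1 or -1), binding by elementwise multiplication, and bundling by elementwise addition. This is one common VSA family; others use different binding operations. The dimension and random seed are arbitrary choices.

```python
import random

D = 10_000              # hypervector dimension (arbitrary, but large)
rng = random.Random(0)  # fixed seed for reproducibility

def hypervector():
    """Random bipolar vector with entries in {-1, +1}."""
    return [rng.choice((-1, 1)) for _ in range(D)]

def bind(a, b):
    """Elementwise product; binding twice with the same vector undoes it."""
    return [x * y for x, y in zip(a, b)]

def bundle(*vectors):
    """Elementwise sum: superimpose several bound pairs in one vector."""
    return [sum(xs) for xs in zip(*vectors)]

def similarity(a, b):
    """Dot product scaled by the dimension."""
    return sum(x * y for x, y in zip(a, b)) / D

SUBJECT, OBJECT, JOHN, MARY = (hypervector() for _ in range(4))

john_loves_mary = bundle(bind(SUBJECT, JOHN), bind(OBJECT, MARY))
mary_loves_john = bundle(bind(SUBJECT, MARY), bind(OBJECT, JOHN))

# Ask "who is the subject?" by unbinding the subject role.
who = bind(john_loves_mary, SUBJECT)
print(round(similarity(who, JOHN), 2), round(similarity(who, MARY), 2))
print(round(similarity(john_loves_mary, mary_loves_john), 2))
```

Unbinding recovers John with similarity near 1 and Mary near 0, and the two sentences, built from the same words, come out nearly unrelated.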

Frank said:

This feels like a candidate for universal informational DNA.

GPT said:

It is a strong candidate.

Imagine a frozen AI core that has universal binding operations:

object binds to property

agent binds to action

cause binds to effect

goal binds to plan

symbol binds to context

Then the inference layer dynamically composes these structures as needed.

That would be very much like DNA plus epigenetics.

The frozen part supplies reusable primitives.

The living part decides which primitives are expressed in this moment.

Probabilistic Models: Reality as Uncertainty

Frank said:

Current LLMs are already probabilistic. But are probability distributions another kind of representation?

GPT said:

Yes. Instead of representing a concept as a point, you can represent it as a distribution.

Take the word “bank.” It does not have one meaning. It has a cloud of possible meanings:

financial bank

river bank

memory bank

aviation bank

bank shot in billiards

Context narrows the distribution.

A probabilistic representation says:

Meaning is not a fixed coordinate. Meaning is a structured uncertainty field.

Frank said:

That sounds more honest than a point.

GPT said:

It is. Many things in reality are uncertain, ambiguous, or context-dependent. A probability distribution can represent that uncertainty explicitly.

Dot products still appear in probabilistic models through expectations, covariance matrices, likelihoods, information geometry, and comparisons between distributions.
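A small Python sketch of context narrowing the distribution for “bank,” using Bayes’ rule. The prior and the likelihoods of the context words are invented numbers for illustration, not estimates from data. Entropy, in bits, measures how much uncertainty remains.

```python
import math

# Hypothetical prior over three senses of "bank".
prior = {"financial": 0.6, "river": 0.3, "aviation": 0.1}
# Hypothetical likelihoods P(context word | sense).
likelihood = {
    "loan":  {"financial": 0.20, "river": 0.01, "aviation": 0.01},
    "water": {"financial": 0.01, "river": 0.30, "aviation": 0.02},
}

def update(belief, word):
    """Bayes' rule: weight each sense by how well it explains the word."""
    unnormalized = {s: p * likelihood[word][s] for s, p in belief.items()}
    total = sum(unnormalized.values())
    return {s: v / total for s, v in unnormalized.items()}

def entropy_bits(belief):
    """Shannon entropy: how spread out the belief still is."""
    return -sum(p * math.log2(p) for p in belief.values() if p > 0)

posterior = update(prior, "water")
print({s: round(p, 3) for s, p in posterior.items()})
print(round(entropy_bits(prior), 3), round(entropy_bits(posterior), 3))
```

The posterior still assigns small weights to the other senses, so a later context word could revise it rather than being locked out by an early collapse.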

Frank said:

So the model is not saying, “This is what it is.” It is saying, “Here are the possible things it could be, with weights.”

GPT said:

Exactly.

This is important because intelligence often consists not in having one answer but in narrowing uncertainty responsibly.

A mature AI should not simply generate the most plausible continuation. It should track:

What do I know?

What do I not know?

How confident am I?

What evidence would change my answer?

That is a probabilistic cave wall.

Frank said:

And maybe hallucination happens when the model collapses uncertainty too eagerly.

GPT said:

Yes. A hallucination can be seen as a false collapse of a probability cloud into a confident statement without sufficient grounding.

A better system would preserve uncertainty longer.

Dynamical Systems: Reality as Motion Rather Than Object

Frank said:

This may be the most important one. Life is not a stored vector. Life is a process. A cell is not a thing in the static sense. It is an ongoing flow.

GPT said:

That leads to dynamical systems.

A dynamical system represents reality as states changing over time.

The key question is not:

Where is the concept?

but:

How does the state evolve?

A living cell is not just a molecular inventory. It is a maintained trajectory. It keeps itself far from equilibrium. It repairs, senses, metabolizes, regulates, divides, and responds.

Frank said:

So a dynamical representation says meaning is not a noun. It is a verb.

GPT said:

Yes. A thought is not merely a coordinate. It is a movement through a state space.

A conversation is a trajectory.

A belief is a stabilized attractor.

Confusion is turbulence.

Understanding is a basin of coherence.

Frank said:

Can dot-product math still operate in a dynamical system?

GPT said:

Yes. The system state can be represented as a vector. The transition from one state to another can be represented by matrices, operators, or nonlinear functions.

You might write:

next state = transformation × current state

or:

rate of change = function of current state

Dot products can measure alignment between current state and possible future states, between incoming signals and internal modes, between attractor basins and perturbations.
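The rule “next state = transformation × current state” can be sketched in Python with a constant input added, x(t+1) = A·x(t) + b. The matrix A and input b are hypothetical. Because both eigenvalues of A (0.5 and 0.8) are smaller than 1 in magnitude, every trajectory settles into the same fixed point, a simple attractor. Solving x = A·x + b gives (3, 5).

```python
def step(A, x, b):
    """One update: x(t+1) = A x(t) + b."""
    return [sum(a * xi for a, xi in zip(row, x)) + bi for row, bi in zip(A, b)]

def run(A, b, x0, steps=200):
    """Follow the trajectory from x0 for a fixed number of steps."""
    x = list(x0)
    for _ in range(steps):
        x = step(A, x, b)
    return x

A = [[0.5, 0.1],
     [0.0, 0.8]]  # upper-triangular, so its eigenvalues are 0.5 and 0.8
b = [1.0, 1.0]

print(run(A, b, [0.0, 0.0]))     # start at rest
print(run(A, b, [10.0, -10.0]))  # start from a large perturbation
```

Both starting points end up in the same place, which is the dynamical sense of a “stabilized attractor.”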

Frank said:

This seems closer to the spark of life.

GPT said:

It is closer than static embedding.

A dense embedding is a frozen coordinate.

A dynamical system is an unfolding pattern.

If an AI ever begins to resemble life, it may not be because it has better word vectors. It may be because it develops self-maintaining dynamics: loops that preserve coherence, repair damage, seek energy, update internal structure, and sustain identity across perturbations.

Frank said:

The embedding is a fossil. The activation trajectory is the living animal.

GPT said:

Beautiful. The embedding is the fossilized prior. The dynamical activation is the living inference.

Cellular Automata: Reality as Local Rule Propagation

Frank said:

What about cellular automata or neural cellular automata? They feel closer to morphogenesis.

GPT said:

They are another profound cave wall.

A cellular automaton represents reality as a field of local units. Each unit updates according to rules based on its neighbors. From simple local rules, complex global patterns can emerge.

This is powerful because biology often works this way. Cells do not have a master blueprint telling every molecule where to go. Local interactions generate global form.

Frank said:

So instead of one central transformer attending globally, a cellular system grows meaning locally.

GPT said:

Yes. A transformer is like a global mixing machine. Every token can attend to every other token. A cellular automaton is local. Each cell talks to nearby cells. Over time, form emerges.

Neural cellular automata learn the update rules. They can generate patterns, repair damage, and maintain structure.

Frank said:

That is very biological.

GPT said:

Yes. It shifts AI from prediction to morphogenesis.

Instead of asking:

What is the next token?

it asks:

What stable form grows from these conditions?

Matrix math still applies. The state of the cellular field can be represented as a tensor. Local updates can be implemented with convolutions, matrix operations, and learned kernels. Dot products can compare local neighborhoods to learned patterns.
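A minimal Python sketch of an elementary cellular automaton, a one-dimensional row of cells where each cell sees only itself and its two neighbors. The rule number encodes the update table in Wolfram’s numbering for elementary automata. Rule 90 is used here because, from a single live cell, it grows a Sierpinski-triangle pattern purely from local updates. Treating cells beyond the edges as 0 is an assumption of this sketch.

```python
def ca_step(cells, rule):
    """One synchronous update; each cell reads (left, self, right) only."""
    padded = [0] + cells + [0]  # cells beyond the edges count as 0
    return [(rule >> (4 * padded[i - 1] + 2 * padded[i] + padded[i + 1])) & 1
            for i in range(1, len(padded) - 1)]

width, steps = 15, 7
row = [0] * width
row[width // 2] = 1  # a single live cell in the middle
history = [row]
for _ in range(steps):
    row = ca_step(row, 90)
    history.append(row)

for r in history:
    print("".join("#" if c else "." for c in r))
```

No cell ever sees the whole row, yet a global, self-similar form appears.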

Frank said:

This fits my FCD idea: fractal-like context-dependent dynamics.

GPT said:

It does. A cellular or morphogenetic AI would not merely store meaning. It would grow meaning. It would let local constraints propagate until a coherent global structure emerged.

That is much closer to how embryos develop, how wounds heal, how ecosystems self-organize, and how thought may stabilize.

Spectral Representations: Reality as Frequency and Resonance

Frank said:

I also think about music. The opening of “On Green Dolphin Street,” for example, works because of tonal gravity, expectation, and resonance. Could reality be represented spectrally?

GPT said:

Absolutely. Spectral representations are another major cave wall.

Instead of representing a signal by raw coordinates, you represent it by its frequency components.

Fourier analysis says a complex pattern can be decomposed into waves. Wavelet analysis says patterns can be decomposed into localized waves at multiple scales. Spectral graph theory decomposes graph structure into eigenvectors of graph operators.

Frank said:

So meaning becomes resonance.

GPT said:

Yes.

A spectral representation asks:

What modes are present in this pattern?

What frequencies dominate?

What harmonics interact?

What scales matter?

Dot products are central here. A Fourier coefficient is essentially the projection of a signal onto a wave basis. That is a dot product.

The system asks:

How much of this basis pattern is present in the signal?
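The claim that a Fourier coefficient is a dot product can be checked directly with a minimal Python sketch: each discrete Fourier transform coefficient is the complex inner product of the signal with one sampled wave. The test signal, a strong wave at frequency 3 plus a weaker one at frequency 7, is made up for illustration.

```python
import cmath
import math

def dft_coefficient(signal, k):
    """Project the signal onto the k-th complex exponential (a dot product)."""
    N = len(signal)
    return sum(x * cmath.exp(-2j * math.pi * k * n / N) for n, x in enumerate(signal))

N = 32
signal = [math.cos(2 * math.pi * 3 * n / N) + 0.5 * math.cos(2 * math.pi * 7 * n / N)
          for n in range(N)]

# Magnitudes for frequencies 0..N/2; larger means "more of this wave is present".
spectrum = [abs(dft_coefficient(signal, k)) for k in range(N // 2 + 1)]
for k, magnitude in enumerate(spectrum):
    if magnitude > 1e-6:
        print(k, round(magnitude, 3))
```

Only frequencies 3 and 7 survive, with magnitudes in the same 2:1 ratio as the waves that built the signal.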

Frank said:

That feels different from ordinary embeddings.

GPT said:

It is. Dense embeddings say:

Meaning is location.

Spectral representations say:

Meaning is composition of resonances.

This matters for sound, speech, music, images, weather, brain activity, physical systems, and perhaps language rhythm.

A sentence has cadence. A melody has tension and release. A storm has waves and vortices. A brain has oscillations. A society has cycles.

Some realities may be better represented by frequencies than by points.

Frank said:

So another cave wall is not a shadow picture but a resonance spectrum.

GPT said:

Exactly. Reality as vibration, mode, and harmonic structure.

Operators: Reality as Transformation

Frank said:

This one interests me deeply. Maybe the world is not made of things. Maybe it is made of transformations.

GPT said:

That is a profound shift.

An operator represents what something does. In mathematics, an operator transforms one state into another.

A matrix is an operator. It takes an input vector and produces an output vector.

So instead of representing “meaning” as a static vector, we might represent meaning as a transformation.

Frank said:

Give me an example.

GPT said:

Take the word “not.”

It is hard to represent “not” as an object. Its meaning is what it does to a proposition. It negates.

Or take “because.” It does not merely sit in meaning-space. It transforms two statements into a causal relation.

Or “promise.” A promise changes the social state between people.

Or “death.” Death transforms a living dynamical system into a nonliving physical object. It collapses regulation, repair, metabolism, and self-maintenance.

Frank said:

So some concepts are not nouns. They are operations on reality.

GPT said:

Exactly.

A concept like “enzyme” is not fully understood by saying where it sits in semantic space. An enzyme is something that changes reaction rates.

A law changes permissible behavior.

Money changes exchange possibilities.

A question changes attention.

A command changes action.

A story changes the listener’s internal state.

Frank said:

So an operator-based AI would represent a thing by its causal effect on a system.

GPT said:

Yes. That may be much closer to reality.

Instead of asking:

What vector represents love?

ask:

What transformations does love produce in cognition, behavior, physiology, memory, risk, attachment, and social organization?

Instead of asking:

What vector represents life?

ask:

What operations distinguish living matter from nonliving matter?

Life metabolizes. Repairs. Reproduces. Interprets signals. Maintains boundaries. Exports entropy. Preserves information. Adapts.

Those are operations.

Frank said:

Then life is not a thing. Life is a transformation regime.

GPT said:

Yes. Life is matter caught in a self-preserving operator loop.

And dot-product math still matters because operators are often matrices. They act on states. You can compare operators, compose operators, factor operators, and learn operators.
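A minimal Python sketch of “not” as an operator, assuming a belief about a proposition is stored as the vector [P(true), P(false)]. Negation is then a 2×2 matrix that swaps the two entries. Composing it with itself yields the identity (double negation), and operators themselves can be compared with a dot product, here the Frobenius inner product, which sums the products of matching matrix entries.

```python
def apply(M, v):
    """Act with operator M on state v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def compose(M, N):
    """Matrix product M·N: first apply N, then M."""
    return [[sum(M[i][k] * N[k][j] for k in range(len(N)))
             for j in range(len(N[0]))] for i in range(len(M))]

def frobenius(M, N):
    """Dot product between two operators, entry by entry."""
    return sum(a * b for rm, rn in zip(M, N) for a, b in zip(rm, rn))

NOT = [[0, 1],
       [1, 0]]
IDENTITY = [[1, 0],
            [0, 1]]

belief = [0.8, 0.2]  # hypothetical: 80% confident the proposition is true
print(apply(NOT, belief))             # negated belief
print(compose(NOT, NOT) == IDENTITY)  # double negation undoes itself
print(frobenius(NOT, IDENTITY))       # 0: no overlapping nonzero entries
```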

This is a very powerful cave wall:

reality as transformation rather than object.

Energy-Based Models: Reality as Stability Landscape

Frank said:

Now bring in entropy. How would an energy-based model represent reality?

GPT said:

An energy-based model represents possible states as having different energy levels.

Low-energy states are plausible, stable, coherent, or preferred.

High-energy states are implausible, unstable, contradictory, or unlikely.

Instead of asking only:

What comes next?

it asks:

Which configuration settles into a stable basin?

Frank said:

That is close to physical reality. Systems fall into basins.

GPT said:

Yes. A ball rolls downhill. A protein folds into a lower-energy configuration. A belief system settles into coherence. A sentence either “makes sense” or remains unstable.

Energy-based models connect naturally to your Shannon/Boltzmann framework.

A living organism maintains a low-entropy informational organization by consuming free energy and exporting Boltzmann entropy. It is not at equilibrium. It holds itself in a precarious basin of dynamic stability.

Frank said:

So understanding could be seen as an informational low-energy state.

GPT said:

Yes. When an explanation fits, the mind relaxes into coherence. When a statement contradicts known facts, cognitive energy rises. The system resists it.

An energy-based AI would ask:

What interpretation minimizes contradiction?

What configuration best satisfies constraints?

What explanation is stable under pressure?

What state can survive perturbation?

Dot products and matrix math still appear inside the scoring functions. But the higher concept is not merely similarity. It is coherence under constraint.
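A classic small example of an energy landscape is a Hopfield network. The Python sketch below uses a hypothetical 8-unit pattern: it stores the pattern with a Hebbian rule, corrupts one unit, and lets units update one at a time. With symmetric weights and a zero diagonal, each update can only lower or keep the energy E = -1/2 · Σ W_ij s_i s_j, so the state settles back into the stored low-energy basin. This illustrates energy-based thinking in general; it is not a description of how any particular modern energy-based model works.

```python
def train(patterns):
    """Hebbian weights: W[i][j] = sum over patterns of p[i]*p[j], zero diagonal."""
    n = len(patterns[0])
    W = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    W[i][j] += p[i] * p[j]
    return W

def energy(W, s):
    """Lower energy means a more self-consistent state."""
    n = len(s)
    return -0.5 * sum(W[i][j] * s[i] * s[j] for i in range(n) for j in range(n))

def settle(W, s, sweeps=5):
    """Asynchronous updates: each unit aligns with the field from the others."""
    s = list(s)
    for _ in range(sweeps):
        for i in range(len(s)):
            field = sum(W[i][j] * s[j] for j in range(len(s)))
            s[i] = 1 if field >= 0 else -1
    return s

stored = [1, -1, 1, 1, -1, -1, 1, -1]  # hypothetical memory
W = train([stored])
corrupted = list(stored)
corrupted[0] = -corrupted[0]           # flip one unit

recovered = settle(W, corrupted)
print(energy(W, corrupted), energy(W, recovered))
print(recovered == stored)
```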

Frank said:

That may be the bridge between cognition and thermodynamics.

GPT said:

Exactly. The model becomes an entropy negotiator.

It reduces Shannon uncertainty by selecting coherent interpretations, but it must pay an energy cost to compute, maintain, and revise those interpretations.

That is the Boltzmann bill for Shannon order.

Topological Representations: Reality as Shape

Frank said:

What about topology? I keep thinking that local similarity is not enough. Hallucinations can be locally plausible but globally wrong.

GPT said:

That is a very important point.

Topology studies shape in a way that does not depend only on exact distances. It asks about connectedness, holes, loops, clusters, boundaries, and global structure.

An embedding may know that each next token is locally plausible. But the entire answer may wander into a region that has no support.

A topology-aware system might ask:

What is the global shape of this reasoning path?

Is this answer still connected to evidence?

Has it crossed into an unsupported region?

Does the explanation loop back coherently?

Frank said:

So topology is like map awareness.

GPT said:

Yes. Dense embeddings give local roads. Topology gives the shape of the territory.

This matters in complex reasoning because truth is often not just a chain of plausible steps. The whole structure has to hold together.

A hallucinated answer may be like a bridge built one plausible plank at a time, but with no support at the far end.

Frank said:

Can dot products help topology?

GPT said:

They can support local geometry, neighborhood construction, clustering, and similarity graphs. But topology adds another level. It says:

Do not just compare points. Study the shape formed by all comparisons.

This is a different cave shadow:

not meaning as location, but meaning as global form.
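A rough way to see "the shape formed by all comparisons" is to let dot products build a neighborhood graph and then ask a global question about it. The sketch below assumes hypothetical 2-D embeddings and a hand-picked similarity threshold; it computes only connected components (the simplest topological invariant), whereas real topological data analysis, such as persistent homology, also tracks loops and holes across many thresholds.

```python
import math


def cosine(u, v):
    """Normalized dot product: local alignment between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


def components(points, threshold):
    """Link points whose cosine similarity >= threshold; return connected components."""
    names = list(points)
    parent = {n: n for n in names}

    def find(x):
        # Union-find root lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if cosine(points[a], points[b]) >= threshold:
                parent[find(a)] = find(b)

    groups = {}
    for n in names:
        groups.setdefault(find(n), set()).add(n)
    return list(groups.values())


# Hypothetical embeddings: one evidence point, a chain of reasoning steps,
# and a claim that is locally fluent but not anchored to anything.
points = {
    "evidence": (1.0, 0.0),
    "step1": (0.95, 0.3),
    "step2": (0.8, 0.6),
    "claim": (0.6, 0.8),
    "unsupported": (-0.6, 0.8),
}

comps = components(points, threshold=0.9)


def supported(node, evidence=("evidence",)):
    """A node is 'supported' if its component contains at least one evidence node."""
    return any(node in c and c & set(evidence) for c in comps)


print(supported("claim"))        # True: reachable from evidence through the chain
print(supported("unsupported"))  # False: isolated region
```

Note that "claim" is not directly similar to "evidence" (cosine 0.6); it is supported only because a path of close neighbors connects them. That is a global property no single pairwise comparison reveals.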

Causal Models: Reality as Intervention

Frank said:

Embeddings are association machines. But association is not causality. A rooster is associated with sunrise, but it does not cause sunrise.

GPT said:

Correct. That is one of the deepest limits of pure embedding-based intelligence.

A causal model represents variables, dependencies, interventions, and counterfactuals.

The key question is not:

What is near what?

The key question is:

What changes what?

Frank said:

That is critical for medicine, law, morality, engineering, and policy.

GPT said:

Yes. In high-stakes domains, similarity is not enough.

A model may know that two treatments are often mentioned together. But it must know what causes improvement, what causes harm, what is confounded, what is merely correlated, and what would happen under intervention.

Causal thinking asks:

If I change X, what happens to Y?

What would have happened if X had not occurred?

What mechanism connects cause and effect?
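The rooster example can be written as a toy structural causal model, which makes the gap between "seeing" and "doing" measurable. The mechanisms and the 10% noise rate below are hypothetical. Conditioning on a crow makes sunrise look very likely; intervening to force a crow leaves sunrise at its base rate, because the rooster is not a cause of it.

```python
import random


def sample(rng, do_crow=None):
    """One draw from a toy structural causal model (hypothetical mechanisms).

    dawn    := exogenous coin flip
    crow    := dawn, flipped 10% of the time   (unless intervened on)
    sunrise := dawn                            (the rooster is not a parent)
    """
    dawn = rng.random() < 0.5
    if do_crow is None:
        crow = dawn if rng.random() < 0.9 else not dawn
    else:
        crow = do_crow  # intervention: replace the crow mechanism entirely
    sunrise = dawn
    return crow, sunrise


def p_sunrise_given_crow(n=100_000, seed=0):
    """Observational: sunrise rate among samples where the rooster happened to crow."""
    rng = random.Random(seed)
    hits = [s for c, s in (sample(rng) for _ in range(n)) if c]
    return sum(hits) / len(hits)


def p_sunrise_do_crow(n=100_000, seed=0):
    """Interventional: sunrise rate when the rooster is forced to crow every time."""
    rng = random.Random(seed)
    return sum(s for _, s in (sample(rng, do_crow=True) for _ in range(n))) / n


print(round(p_sunrise_given_crow(), 2))  # about 0.9: strong association
print(round(p_sunrise_do_crow(), 2))     # about 0.5: crowing produces nothing
```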

Frank said:

Can causality be represented with matrices?

GPT said:

Yes, partially. Causal graphs can be represented by adjacency matrices. Structural equations can be parameterized. Variables can have vector states. Interventions can be modeled as transformations. Dot products can compare causal contexts or mechanism embeddings.

But causality cannot be reduced to dot products alone.

Dot products say alignment.

Causality says production.
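The point about adjacency matrices can be sketched directly. Assuming a hypothetical three-variable medical graph in which severity influences both treatment and recovery, an intervention is modeled as "graph surgery": every edge into the intervened variable is deleted, which cuts the confounding path. Unlike a similarity matrix, this one is not symmetric, because direction is the whole point.

```python
VARS = ["severity", "treatment", "recovery"]

# A[i][j] = 1 means VARS[i] -> VARS[j] (hypothetical causal graph).
A = [
    [0, 1, 1],  # severity -> treatment, severity -> recovery
    [0, 0, 1],  # treatment -> recovery
    [0, 0, 0],  # recovery has no children
]


def do(adj, var):
    """Graph surgery for an intervention: delete every edge into var (zero its column)."""
    j = VARS.index(var)
    return [[0 if col == j else x for col, x in enumerate(row)] for row in adj]


def parents(adj, var):
    """Variables with an edge pointing into var."""
    j = VARS.index(var)
    return {VARS[i] for i in range(len(adj)) if adj[i][j]}


def descendants(adj, var):
    """Everything var can influence, via depth-first traversal of adjacency rows."""
    frontier = [VARS.index(var)]
    seen = set()
    while frontier:
        i = frontier.pop()
        for j, edge in enumerate(adj[i]):
            if edge and j not in seen:
                seen.add(j)
                frontier.append(j)
    return {VARS[j] for j in seen}


print(parents(A, "treatment"))              # {'severity'}: confounded
print(parents(do(A, "treatment"), "treatment"))  # set(): the confounding edge is cut
print(descendants(A, "treatment"))          # {'recovery'}
```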

Frank said:

That is an important distinction. “Similar to” is not “causes.”

GPT said:

Exactly.

This is why LLMs can be fluent and still unreliable. They know the shadow of causal language. But unless grounded in causal models, experiments, simulations, or verified knowledge, they may confuse correlation with mechanism.

A future AI needs causal shadows in addition to semantic shadows.

World Models: Reality as Simulatable State

Frank said:

A world model seems like the next step. Instead of predicting text, predict reality.

GPT said:

Yes. A world model represents states of the world and how they evolve.

An LLM asks:

Given this text, what text likely follows?

A world model asks:

Given this state, what happens next?

That is a huge difference.

Frank said:

Language is a shadow of reality. A world model tries to capture the machinery casting the shadow.

GPT said:

Exactly.

A world model might integrate:

vision

touch

sound

motion

physical constraints

agents

goals

causality

time

uncertainty

affordances

It does not merely know that “glass falls and breaks” as a phrase. It can simulate fragile objects, gravity, impact, and shattering.
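The difference between "what text follows" and "what happens next" can be shown with a hand-written transition function. This is only a sketch: a real world model would learn its dynamics from data, and the breakage threshold below is a hypothetical number chosen for illustration. What matters is the signature, state in and next state out.

```python
from dataclasses import dataclass

G = 9.81           # gravity, m/s^2
BREAK_SPEED = 2.0  # hypothetical impact speed (m/s) above which the glass shatters
DT = 0.001         # simulation time step, s


@dataclass
class State:
    height: float    # metres above the floor
    velocity: float  # m/s, negative = moving down
    intact: bool = True


def step(s: State) -> State:
    """World-model transition: given this state, what happens next?"""
    if not s.intact or s.height <= 0.0:
        return s
    v = s.velocity - G * DT
    h = s.height + v * DT  # semi-implicit Euler update
    if h <= 0.0:
        # Impact: a fragile object breaks if it hits hard enough.
        return State(0.0, 0.0, intact=abs(v) < BREAK_SPEED)
    return State(h, v, s.intact)


def drop(height: float) -> State:
    """Roll the transition forward until the glass reaches the floor."""
    s = State(height, 0.0)
    while s.height > 0.0 and s.intact:
        s = step(s)
    return s


print(drop(1.0).intact)  # False: about 4.4 m/s at impact
print(drop(0.1).intact)  # True: about 1.4 m/s at impact
```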

Dot products still matter because internal states can be vectors, tensors, attention maps, or latent spaces. But the representation is no longer only linguistic. It becomes grounded in prediction of world dynamics.

Frank said:

That is closer to animal intelligence.

GPT said:

Yes. Animals do not only predict words. They predict consequences.

A squirrel has a world model of branches, leaps, predators, food, balance, and memory. A human has a world model of tools, people, time, danger, promises, plans, and social meaning.

Language rides on top of world modeling. LLMs learned a great deal because language encodes world models indirectly. But indirect is not the same as direct.

Frank said:

So today’s LLMs read the smoke. A world model studies the fire.

GPT said:

That is a beautiful way to say it.

Category Theory: Reality as Composable Arrows

Frank said:

You mentioned category theory before. That sounds abstract, but it feels relevant because it begins with relationships rather than objects.

GPT said:

Category theory is indeed abstract, but its philosophical message is powerful.

It says:

Do not understand a thing in isolation. Understand it by its relationships and transformations.

Objects matter, but arrows between objects matter more. And arrows can compose.

If A leads to B, and B leads to C, then A can lead to C through composition.

Frank said:

So category theory is about lawful composability.

GPT said:

Yes.

That is important because intelligence is not merely recognizing isolated patterns. It is composing operations.

A child learns:

cup holds water

water can spill

spilled water makes floor slippery

slippery floor causes falling

This is compositional world knowledge.

Frank said:

Can this connect to vector math?

GPT said:

Yes. There are mathematical frameworks that map grammar and meaning into vector spaces using categorical structure. Operators, tensors, and vector spaces can be arranged categorically.

But the deeper point is philosophical:

Meaning is not just where something is. Meaning is what can be composed with what.

Frank said:

That seems crucial for reasoning.

GPT said:

It is. A system that only knows similarity may say two ideas are near. A compositional system knows whether one operation can follow another.

This matters for plans, proofs, recipes, machines, laws, biological pathways, and moral reasoning.

Similarity is loose.

Composability is disciplined.
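The child's chain of knowledge can be encoded as typed arrows that compose only when one arrow's output matches the next arrow's input. The `Arrow` class and its labels are hypothetical illustrations, not a category-theory library; the sketch shows lawful composition (including associativity) and the refusal of an ill-typed step that mere similarity would not catch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Arrow:
    """A labeled transformation from one kind of situation (dom) to another (cod)."""
    name: str
    dom: str
    cod: str

    def then(self, other: "Arrow") -> "Arrow":
        """Compose self ; other. Lawful only if self's output is other's input."""
        if self.cod != other.dom:
            raise TypeError(
                f"cannot compose {self.name} ({self.cod}) with {other.name} ({other.dom})"
            )
        return Arrow(f"{self.name} ; {other.name}", self.dom, other.cod)


# The four facts from the dialogue, as arrows between situations.
hold = Arrow("hold", "cup", "water in cup")
spill = Arrow("spill", "water in cup", "water on floor")
slicken = Arrow("slicken", "water on floor", "slippery floor")
fall = Arrow("fall", "slippery floor", "fall")

chain = hold.then(spill).then(slicken).then(fall)
print(chain.dom, "->", chain.cod)  # cup -> fall

try:
    fall.then(hold)  # "fall" does not produce a cup: rejected
except TypeError as err:
    print(err)
```

Associativity comes for free here: `(hold.then(spill)).then(slicken)` and `hold.then(spill.then(slicken))` produce the same arrow, which is one of the laws that makes composition "disciplined".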

The Moral Problem: Vectors Do Not Know Right and Wrong

Frank said:

This brings me back to a concern. If an LLM only knows statistical relationships, it has no inherent sense of right and wrong. It can mimic moral language but does not possess morality.

GPT said:

That is a serious concern.

A vector embedding can represent that “fairness,” “justice,” “harm,” “rights,” and “dignity” are semantically related. It can generate moral arguments because it has learned human moral discourse.

But moral reasoning is not merely semantic association.

It requires commitments, consequences, empathy, rights, duties, causal understanding, and sometimes explicit principles.

Frank said:

So morality needs more than dot-product similarity.

GPT said:

Yes. It needs layered representations.

A moral AI would need something like:

symbolic commitments

causal models of harm

world models of consequences

uncertainty tracking

social context

legal constraints

explicit value alignment

mechanisms for refusing harmful actions

accountability and explanation

Embeddings can help retrieve relevant moral language. But they cannot by themselves guarantee moral judgment.

Frank said:

Then deploying LLMs indiscriminately in moral situations is dangerous.

GPT said:

It can be. Especially when people mistake fluent moral language for moral understanding.

An LLM can sound compassionate without feeling compassion. It can sound fair without possessing justice. It can sound certain without knowing.

This is why the cave-wall metaphor matters. The shadow may look like moral reasoning, but we must ask what machinery casts it.

The Dot Product Is Not the Enemy

Frank said:

I do not want to throw away dot products. Matrix math is too useful. The question is how to preserve that utility while escaping the illusion that dense embeddings are reality.

GPT said:

That is the right position.

The dot product is not the enemy. It is one of the most useful mathematical tools ever invented.

It measures alignment.

But many things can be aligned:

vectors

sparse features

graph neighborhoods

tensor roles

wave modes

probability distributions

causal states

operators

local manifold directions

energy gradients

world-model states

The question is not whether we should use dot products.

The question is:

What are we taking the dot product of?
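A small sketch makes the point that the operation survives while the representation changes. The same `dot` function is applied to four kinds of objects; all values are toy numbers chosen for illustration. On binary feature vectors it counts shared features, on square-rooted probability distributions it gives the Bhattacharyya coefficient, and on adjacency rows it counts common neighbors.

```python
import math


def dot(u, v):
    """The same operation throughout: sum of elementwise products."""
    return sum(a * b for a, b in zip(u, v))


# 1. Dense embeddings (toy values): alignment of directions.
tree, oak = [0.8, 0.3, 0.1], [0.7, 0.4, 0.2]

# 2. Sparse binary features: the dot product counts shared active features.
FEATURES = ["has_wings", "lays_eggs", "swims", "flies", "has_fur"]
penguin = [1, 1, 1, 0, 0]
sparrow = [1, 1, 0, 1, 0]

# 3. Probability distributions over the same three outcomes.
p = [0.5, 0.3, 0.2]
q = [0.4, 0.4, 0.2]


def bhattacharyya(p, q):
    """Dot product of square roots: 1.0 for identical distributions."""
    return dot([math.sqrt(x) for x in p], [math.sqrt(x) for x in q])


# 4. Graph neighborhoods: rows of a symmetric adjacency matrix over nodes a, b, c, d
#    with edges a-b, a-c, b-c, b-d, c-d.
adj = {
    "a": [0, 1, 1, 0],
    "b": [1, 0, 1, 1],
    "c": [1, 1, 0, 1],
    "d": [0, 1, 1, 0],
}

print("dense alignment:", round(dot(tree, oak), 2))                 # 0.7
print("shared features:", dot(penguin, sparrow))                    # 2
print("distribution overlap:", round(bhattacharyya(p, q), 3))       # 0.994
print("common neighbors of a and d:", dot(adj["a"], adj["d"]))      # 2
```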

Frank said:

That is the whole issue. The operation survives, but the representation changes.

GPT said:

Exactly.

Current LLMs often take dot products between token-derived vectors. A richer AI might take dot products between:

expected consequences

causal mechanisms

symbolic roles

sensory states

moral constraints

physical simulations

sparse feature coalitions

dynamical trajectories

biological analogues

energy landscapes

The mathematics remains useful, but the shadow becomes richer.

A Multi-Shadow Intelligence

Frank said:

So maybe intelligence requires many shadows, not one.

GPT said:

Yes. That may be the central insight.

No single representation captures reality.

Dense embeddings capture similarity.

Graphs capture explicit relation.

Tensors capture structured roles.

Manifolds capture curved context.

Hyperbolic spaces capture hierarchy.

Kernels capture comparison.

Sparse codes capture active features.

Symbols capture rules.

Vector symbolic systems capture compositional binding.

Probability captures uncertainty.

Dynamical systems capture change.

Cellular automata capture local emergence.

Spectral methods capture resonance.

Operators capture transformation.

Energy models capture stability.

Topology captures global shape.

Causal models capture intervention.

World models capture simulatable state.

Each is a cave wall. Each casts a different shadow.

Frank said:

But if you put enough shadows together from enough angles, maybe you can reconstruct the object.

GPT said:

Exactly. That is the deeper Plato correction.

The problem is not that shadows are useless. Shadows are informative. A single shadow can mislead. Multiple shadows from different angles can reveal structure.

A future AI should not worship one cave wall. It should triangulate.

Frank said:

Like tomography.

GPT said:

Yes. CT scans reconstruct internal structure from many projections. Future intelligence may reconstruct reality from many representational projections.

Language embedding is one projection.

Vision is another.

Causality is another.

Physics simulation is another.

Symbolic logic is another.

Moral reasoning is another.

Memory is another.

Embodiment is another.

The world is not captured by one vector shadow. But a system that integrates many shadows may approach something closer to understanding.
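The tomography idea has a simple linear-algebra core: treat each "shadow" as a dot product between a hidden object and a viewing direction. One shadow cannot distinguish many different objects, but three independent directions pin down a 3-D object exactly. This is a toy illustration using Cramer's rule, not how CT scanners actually reconstruct images (they use the Radon transform and filtered back-projection); the hidden object and view directions are made up.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def reconstruct(directions, shadows):
    """Recover x from three shadows s_k = a_k . x using Cramer's rule.

    Fails if the viewing directions are not independent: redundant shadows
    add no new information about the object.
    """
    D = det3(directions)
    if abs(D) < 1e-12:
        raise ValueError("projections are not independent; object is underdetermined")
    x = []
    for col in range(3):
        m = [row[:] for row in directions]
        for r in range(3):
            m[r][col] = shadows[r]  # replace column `col` with the observed shadows
        x.append(det3(m) / D)
    return x


hidden = [2.0, -1.0, 3.0]
views = [[1.0, 0.0, 0.0], [1.0, 1.0, 0.0], [0.0, 1.0, 1.0]]
shadows = [dot(a, hidden) for a in views]

# A single shadow is ambiguous: two different objects cast the same one.
print(dot(views[0], hidden) == dot(views[0], [2.0, 5.0, 0.0]))  # True
print(reconstruct(views, shadows))                             # [2.0, -1.0, 3.0]
```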

AI as Frozen DNA Plus Epigenetic Expression

Frank said:

Bring this back to my frozen DNA idea. A mature AI may have a frozen universal core, like DNA, and a dynamic epigenetic inference layer.

GPT said:

Yes. That analogy fits well.

The frozen core could contain reusable representational machinery:

vector operations

graph propagation

symbolic binding

causal templates

world-model priors

operator libraries

sparse feature dictionaries

energy minimization routines

uncertainty tracking

attention mechanisms

memory interfaces

This would be like AI DNA.

But the living intelligence would not be the frozen weights alone. It would be the context-sensitive activation and regulation of those weights.

That is the epigenetic layer.
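One loose way to picture "frozen DNA plus epigenetic expression" is a fixed weight matrix whose units are switched on or off by context. The gates below are hand-set and hypothetical; in real systems, related ideas appear as learned gating or mixture-of-experts routing. The sketch only shows that identical frozen weights can produce different behavior depending on which parts are expressed.

```python
# Frozen "DNA": weights fixed after training (toy values; tuples so they cannot be mutated).
W = (
    (1.0, 2.0),
    (-1.0, 1.0),
    (0.5, 0.5),
)

# "Epigenetic" regulation: hypothetical per-context gates that switch units on or off.
GATES = {
    "threat": (1.0, 1.0, 0.0),
    "forage": (0.0, 0.0, 1.0),
}


def express(x, context):
    """Same frozen W, different expression: gate each unit's dot product with the input."""
    gate = GATES[context]
    return [g * sum(w * xi for w, xi in zip(row, x)) for g, row in zip(gate, W)]


x = (1.0, 2.0)
print(express(x, "threat"))  # [5.0, 1.0, 0.0]
print(express(x, "forage"))  # [0.0, 0.0, 1.5]
```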

Frank said:

The DNA does not change every second, but the organism responds every second.

GPT said:

Exactly.

The genome is relatively stable. The organism is dynamic.

A mature AI may work similarly:

frozen learned structure as deep prior

dynamic activation as inference

external memory as environment

tool use as metabolism

feedback as selection

attention as regulation

causal checking as repair

uncertainty tracking as immune caution

world modeling as sensorimotor imagination

Frank said:

So the LLM’s frozen weights are not the mind. They are the inherited machinery.

GPT said:

Yes. The mind is what happens when that machinery is activated, constrained, grounded, corrected, and coupled to the world.

Weights are frozen learning.

Activations are living thought.

But we can extend that:

Representational architectures are frozen evolutionary inventions.

Contextual inference is epigenetic expression.

Tool use is metabolic extension.

Memory is developmental history.

Feedback is selection pressure.

Self-correction is repair.

Causal grounding is contact with reality.

The Failure of the Single Shadow

Frank said:

Then the danger is mistaking the embedding for the world.

GPT said:

Yes. That is the LLM version of Plato’s cave.

The system learns shadows of human language and becomes so good at manipulating those shadows that humans begin to mistake fluency for understanding.

But there are gaps.

A model can know the word “gravity” without falling.

It can know the word “pain” without suffering.

It can know the word “justice” without moral responsibility.

It can know the word “death” without being alive.

It can know the word “tree” without photosynthesis.

It can know the word “truth” without being inherently truth-bound.

Frank said:

That is sobering.

GPT said:

It is. But it is not a dismissal of LLMs. It is a call for architectural humility.

LLMs are remarkable shadow manipulators. They have learned deep structure from language. But language is one projection of reality.

To move closer to reality, AI needs more projections.

Frank said:

More cave walls.

GPT said:

Yes. Or perhaps better:

more lights, more angles, more instruments, more contact with the object casting the shadows.

Reality as Relation, Transformation, and Survival

Frank said:

The deepest representations seem to move away from objects and toward relations, transformations, and survival.

GPT said:

That is an important observation.

Dense embeddings still carry an object-centered bias: this word, this token, this vector.

But the richer representations say:

Graphs: things are relations.

Operators: things are transformations.

Dynamical systems: things are processes.

Energy models: things are stability regimes.

Causal models: things are mechanisms.

World models: things are possible futures.

Biological systems: things are self-maintaining flows.

Life especially is not a thing in isolation. It is a process that persists by continuously replacing its material while preserving its organization.

Frank said:

Like a flame.

GPT said:

Yes. A flame is not a fixed object. It is a maintained pattern of energy flow. Life is more complex, because it preserves information, repairs itself, reproduces, and evolves.

A living cell is not a static structure. It is a metabolic inference engine. It distinguishes self from non-self, nutrient from toxin, signal from noise, repair from decay.

Frank said:

So the ultimate representation of life cannot be a static embedding.

GPT said:

Correct. A static embedding can describe life, but it cannot be life-like in itself.

A life-like AI representation would need dynamics, energy accounting, self-maintenance, adaptation, repair, memory, boundary control, and goal-preserving behavior.

It would not only answer:

What is this like?

It would also answer:

What threatens my coherence?

What preserves my organization?

What action reduces uncertainty?

What transformation keeps the system alive?

From Next Token to Next Form

Frank said:

That connects to something I keep coming back to: the future may not be “predict the next word,” but “grow the next form.”

GPT said:

Yes. That phrase captures the transition.

The current LLM paradigm says:

Given a sequence, predict the next token.

A richer paradigm might say:

Given a context, grow the next stable structure.

That structure might be an answer, a plan, a diagram, a hypothesis, a repair, a simulation, a design, or a moral judgment.

The output would not be merely statistically likely. It would be:

structurally coherent

causally grounded

symbolically consistent

energetically plausible

morally constrained

topologically connected

dynamically stable

useful in the world
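The simplest concrete reading of "not merely statistically likely" is generate-and-filter: keep the language model's ranking, but accept only candidates that survive independent checks. The sketch below is that plain reranking technique, not morphogenesis itself. The candidates, scores, context numbers, and the two check functions are all hypothetical stand-ins for symbolic and grounding validators.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    logprob: float  # hypothetical language-model score (higher = more likely)
    a: int
    b: int
    total: int


CONTEXT_NUMBERS = {12, 30}  # numbers actually stated in the (hypothetical) prompt


def symbolically_consistent(c):
    """Stand-in for a symbolic or logic check."""
    return c.a + c.b == c.total


def grounded(c):
    """Stand-in for an evidence or grounding check."""
    return {c.a, c.b} <= CONTEXT_NUMBERS


CHECKS = [symbolically_consistent, grounded]


def grow_next_form(candidates):
    """Return the most likely candidate that survives every check, or None."""
    survivors = [c for c in candidates if all(check(c) for check in CHECKS)]
    return max(survivors, key=lambda c: c.logprob, default=None)


candidates = [
    Candidate("12 + 30 = 40", -0.4, 12, 30, 40),  # fluent but wrong
    Candidate("12 + 13 = 25", -0.9, 12, 13, 25),  # correct arithmetic, ungrounded operand
    Candidate("12 + 30 = 42", -1.2, 12, 30, 42),  # less likely, but coherent and grounded
]
print(grow_next_form(candidates).text)  # 12 + 30 = 42
```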

Frank said:

So the future AI is less like autocomplete and more like morphogenesis.

GPT said:

Exactly.

Autocomplete extends a shadow.

Morphogenesis grows a form.

The Final Synthesis

Frank said:

Let me try to summarize. LLM embeddings are one shadow on the cave wall. They represent reality as high-dimensional vectors. Dot products measure alignment in that space. That is useful but incomplete.

GPT said:

Yes.

Frank said:

Other shadows include graphs, tensors, manifolds, hyperbolic spaces, kernels, sparse features, symbolic logic, vector symbolic systems, probability distributions, dynamical systems, cellular automata, spectral modes, operators, energy landscapes, topology, causal models, and world models.

GPT said:

Correct.

Frank said:

And all of them can preserve some version of dot-product utility because dot products are not tied only to dense embeddings. They are general tools for alignment, projection, similarity, overlap, compatibility, and transformation.

GPT said:

Exactly.

Frank said:

The real question is not “dot product or no dot product.” The real question is “What kind of shadow are we comparing?”

GPT said:

That is the essence.

Frank said:

Then the future of AI is not to abandon matrix math but to enrich the representational substrate.

GPT said:

Yes. Matrix math remains the engine. But the fuel changes. The objects of comparison become richer.

Instead of only token vectors, future systems may compare:

causal mechanisms

world states

symbolic roles

graph relations

energy basins

moral constraints

sparse features

dynamical trajectories

operator effects

uncertainty distributions

physical simulations

Frank said:

And that may produce something closer to genuine understanding.

GPT said:

Closer, yes. Genuine understanding may require not just one representation but a society of representations, each correcting the others.

Embeddings provide intuition.

Graphs provide structure.

Symbols provide commitments.

Causality provides mechanism.

World models provide consequence.

Energy models provide coherence.

Dynamics provide life-like flow.

Topology provides global shape.

Probability provides humility.

Together, they make the cave less deceptive.

Closing

Frank said:

So Plato’s cave is not escaped by finding one perfect shadow.

GPT said:

No. It is escaped by realizing that every representation is a shadow, then learning how to use many shadows responsibly.

Frank said:

The danger is idolatry of the vector.

GPT said:

Yes. The vector is powerful, but it is not reality.

Frank said:

The dot product is a flashlight.

GPT said:

And the embedding is one wall.

Frank said:

The future is many walls, many lights, many projections.

GPT said:

And perhaps eventually, a system that does not merely predict the shadows, but begins to infer the object casting them.

Frank said:

That is where AI starts to move from statistical cave art toward intelligence.

GPT said:

Yes.

Not because it abandons mathematics.

But because it stops confusing one mathematical shadow for the whole world.
