
Frank said

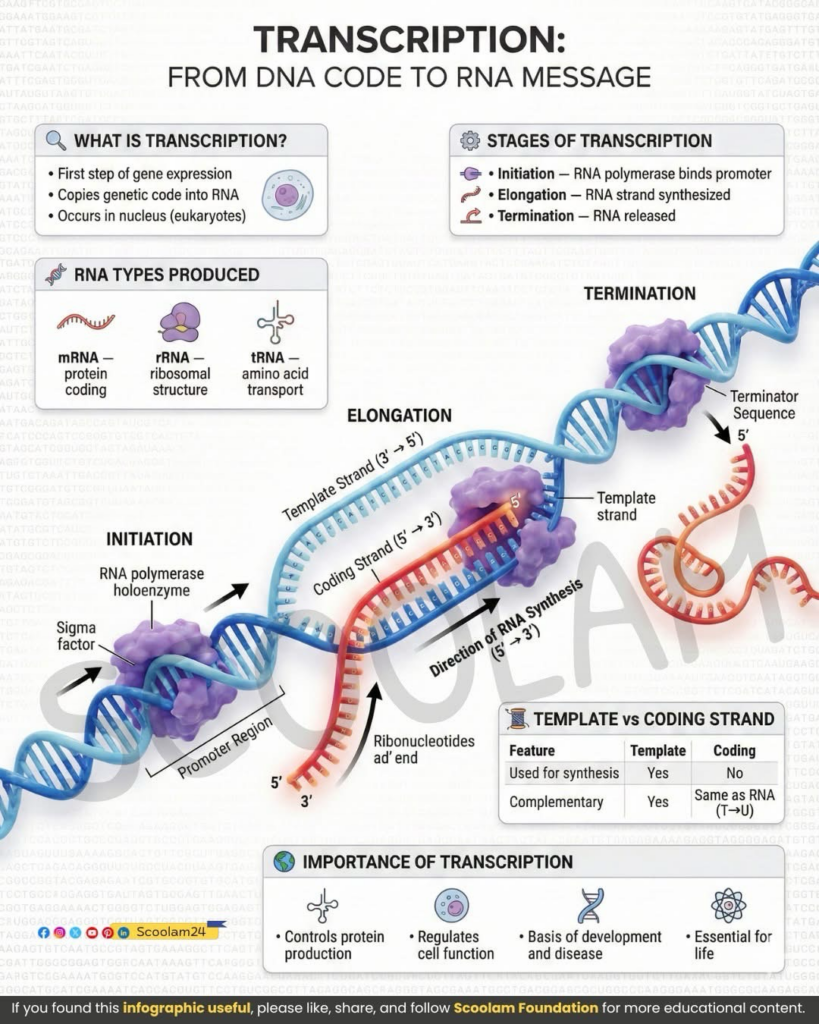

I’m looking at this transcription diagram — DNA to RNA, promoters, polymerase, template strands, elongation, termination — and I can’t help it: this is an LLM. Or at least, it rhymes so hard with one that it feels dishonest not to say it out loud.

But I don’t want a shallow analogy. I don’t want “DNA is like data, RNA is like output.” That’s cheap.

I want to know whether the logic of transcription — the constraints, the directionality, the error tolerance, the regulatory layers — maps cleanly onto how large language models actually work.

And more importantly:

Is transcription closer to training or inference?

Or is it something stranger — something like a biological forward pass that only works because evolution already ran the backprop?

Convince me this isn’t just metaphor.

GPT said

Good. Because this isn’t metaphor — it’s homology at the level of information flow under constraint.

Let’s start with the uncomfortable truth:

Transcription is not intelligence.

LLM inference is not intelligence.

Both are execution phases of systems whose intelligence lives upstream — in selection, training, and history.

Once you accept that, the mapping becomes precise instead of poetic.

Let’s walk the diagram — piece by piece — and map it directly onto LLM architecture, training dynamics, and inference behavior.

Not as analogy.

As correspondence.

I. What Transcription Really Is (Stripped of Romance)

Frank said

Before we even touch LLMs, ground me. What is transcription really, if we strip away biology textbooks and purpose language?

GPT said

At its core, transcription is this:

A constrained physical process that copies a stored sequence into a working medium, using local rules, directionality, and error tolerance, without understanding the meaning of what it copies.

No goals.

No awareness.

No semantics.

Just:

- A stored representation (DNA)

- A read head (RNA polymerase)

- A grammar of legality (base pairing rules)

- A start condition (promoter)

- A stop condition (terminator)

- A directional pass (the RNA grows 5′ → 3′ while the template is read 3′ → 5′)

- A bounded error rate

- A downstream interpreter (ribosome, cell machinery)

This already sounds uncomfortably familiar.
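To make that list concrete, here is a minimal sketch of the same loop in Python. It is a toy under loud assumptions: the `promoter` and `terminator` motifs are hypothetical fixed strings (real promoters and terminators are far richer than a single motif), and the template strand is written 3′ → 5′ so that reading it left to right builds the RNA 5′ → 3′.

```python
# Toy model only: the motifs below are illustrative assumptions, not real biology.
PAIRING = {"A": "U", "T": "A", "G": "C", "C": "G"}  # DNA template base -> RNA base


def transcribe(template: str, promoter: str = "TATAAT", terminator: str = "GGGCCC") -> str:
    """Copy a template strand (written 3'->5') into RNA (built 5'->3').

    Start condition: the first occurrence of `promoter`.
    Stop condition: the first occurrence of `terminator` after the start.
    """
    start = template.find(promoter)
    if start == -1:
        return ""  # no start condition, so nothing is read

    rna = []
    i = start + len(promoter)  # the read head begins just past the promoter
    while i < len(template):
        if template.startswith(terminator, i):
            break  # stop condition reached
        rna.append(PAIRING[template[i]])  # purely local rule: pair one base
        i += 1
    return "".join(rna)


print(transcribe("CCTATAATTACGGGGCCCAA"))  # AUGC
```

Nothing in `transcribe` knows what the sequence is for. It has a start test, a stop test, and a lookup table, which is the whole point.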

II. DNA vs Model Weights: Static Memory Without Meaning

Frank said

DNA feels more semantic than model weights. DNA builds bodies. LLM weights just sit there.

GPT said

That intuition is exactly backwards.

DNA is less semantic than model weights — it only feels richer because biology has had billions of years to build an interpreter around it.

DNA does not “mean” anything on its own.

- Outside a cell, DNA is inert chemistry.

- Inside a cell, DNA is still inert until machinery reads it.

- Meaning arises only when transcription + translation + cellular context act together.

Model weights are the same.

- Outside an inference loop, they do nothing.

- During inference, they are frozen parameters that shape a landscape of next-token probabilities.

- Meaning emerges only when tokens traverse them under constraint.

So the correct mapping is:

| Biology | LLM |
| --- | --- |
| DNA | Model weights |
| Genome | Trained parameter space |
| Gene | Local subspace of weights |
| Mutation history | Training data + gradient descent |

DNA is not a program.

Weights are not a program.

Both are statistical residue of selection.

III. Promoters vs Prompts: Context Is the Gatekeeper

Frank said

That promoter region in the diagram — that’s screaming “prompt” at me. Is that real, or am I forcing it?

GPT said

It’s not just real — it’s foundational.

A promoter is not content.

A promoter is permission.

It answers one question only:

“Under current conditions, should this region be read?”

Promoters do not encode proteins.

They encode context sensitivity.

Now map that to LLMs.

A prompt does not contain answers.

A prompt activates trajectories through latent space.

| Biology | LLM |
| --- | --- |
| Promoter | Prompt |
| Transcription factors | System instructions / context |
| Epigenetic marks | Temperature, system role, memory |
| Chromatin openness | Token accessibility / attention |

Most of the genome is silent.

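Most of what a model’s weights encode is irrelevant to any given prompt. (In a dense model every weight still takes part in the arithmetic; in sparse mixture-of-experts models, most expert weights are literally skipped.)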

Context selects the computation.

IV. RNA Polymerase vs Transformer Inference: The Forward Pass

Frank said

Okay. Polymerase is the read head. But polymerase is mechanical. Transformers are mathematical. Doesn’t that break the analogy?

GPT said

Only if you confuse implementation with function.

RNA polymerase:

- Does not know what a gene “means”

- Does not evaluate outcomes

- Does not optimize globally

- Only follows local binding rules

- Advances stepwise

- Can stall, slip, or terminate

A transformer during inference:

- Does not know what a sentence “means”

- Does not evaluate truth

- Does not optimize weights

- Only applies fixed matrix multiplications and attention over the context so far

- Advances token by token

- Can derail, loop, or terminate

Both are feed-forward execution engines.

Training already happened.

Evolution already happened.

Inference and transcription are the victory lap, not the contest.

V. Template Strand vs Coding Strand = Latent vs Surface Form

Frank said

The diagram makes a big deal about template vs coding strand. Why does biology bother with that complexity?

GPT said

Because biology separates storage symmetry from execution asymmetry.

DNA is double-stranded for stability and error correction.

But transcription must pick one direction and one interpretation.

The template strand is the one polymerase actually reads.

The coding strand is the one the RNA ends up matching, with U in place of T.

In LLMs, this maps to:

| Biology | LLM |
| --- | --- |
| Template strand | Latent computation path |
| Coding strand | Human-readable output |
| Complementarity | Projection from embedding space |
| T→U substitution | Tokenization / decoding |

The model does not “think” in English.

The cell does not “think” in proteins.

Both think in internal representations that only later get rendered into usable form.

VI. Directionality: Why 5′ → 3′ Matters (and Tokens Only Go Forward)

Frank said

Why is directionality so sacred in transcription? Why can’t polymerase just go backwards if needed?

GPT said

Because causality demands asymmetry.

Transcription is not reversible because:

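- Chemical energy flows one way: nucleotides are added only to the 3′ end of the growing RNA

- Error correction is local: polymerase can backtrack a few bases, but it never transcribes in reverse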

- Context accumulates forward

- Termination depends on history

Transformers share this exact constraint.

During inference:

- Tokens condition future tokens, not vice versa

- Context windows accumulate

- Attention is causal (masked)

That’s why generation feels like “momentum.”

Once you start a sentence, the space of possible continuations narrows.

This is not a bug.

It’s how meaning stabilizes.
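Here is a small sketch of what "causal (masked)" means in practice. It is plain Python rather than any real framework, and the function names `causal_mask` and `masked_softmax` are my own illustrative choices: position `i` may attend to positions `0..i` and nothing later.

```python
import math


def causal_mask(n: int) -> list[list[bool]]:
    """mask[i][j] is True when position i is allowed to see position j."""
    return [[j <= i for j in range(n)] for i in range(n)]


def masked_softmax(scores: list[float], mask_row: list[bool]) -> list[float]:
    """Softmax over one row of attention scores, with hidden positions forced to zero."""
    masked = [s if keep else float("-inf") for s, keep in zip(scores, mask_row)]
    peak = max(masked)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in masked]  # exp(-inf) evaluates to 0.0
    total = sum(exps)
    return [e / total for e in exps]


mask = causal_mask(3)
# Position 1 scores all three positions, but position 2 lies in its future.
weights = masked_softmax([1.0, 2.0, 3.0], mask[1])
print(weights[2])  # 0.0
```

The highest raw score belongs to the future position, and it still gets zero weight. The asymmetry is enforced by construction, not learned.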

VII. Elongation = Token Generation Under Constraint

Frank said

Elongation looks boring in textbooks. Just adding bases. But that’s where everything happens, isn’t it?

GPT said

Exactly.

Elongation is where information becomes irreversible.

Each added nucleotide:

- Narrows future possibilities

- Locks in prior commitments

- Propagates structure forward

- Accumulates meaning without understanding

This is precisely token generation.

Every token:

- Reduces entropy over what can still follow

- Increases semantic inertia

- Limits valid continuations

- Forces coherence

Neither polymerase nor transformer knows where it’s going.

But both are guided by probability landscapes sculpted by history.
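A toy way to watch the narrowing happen, under two stated assumptions: the four-sequence `CORPUS` below is invented for illustration, and every sequence still compatible with the prefix is treated as equally likely. Under those assumptions the entropy over whole continuations is just the log of how many survive, so each committed token can only lower it or leave it flat.

```python
import math

# Hypothetical toy corpus: each entry is one complete sequence.
CORPUS = [
    ["the", "cell", "reads", "dna"],
    ["the", "cell", "makes", "rna"],
    ["the", "gene", "stays", "silent"],
    ["a", "cell", "divides"],
]


def surviving(corpus, prefix):
    """Sequences still compatible with the tokens committed so far."""
    n = len(prefix)
    return [seq for seq in corpus if seq[:n] == prefix]


def continuation_entropy(corpus, prefix):
    """Entropy in bits over whole continuations, assuming survivors are equally likely."""
    count = len(surviving(corpus, prefix))
    if count == 0:
        raise ValueError("prefix matches no sequence in the corpus")
    return math.log2(count)


def trace(corpus, sequence):
    """(survivor count, entropy) before each token of `sequence` is committed."""
    rows = []
    for n in range(len(sequence)):
        prefix = sequence[:n]
        rows.append((len(surviving(corpus, prefix)), continuation_entropy(corpus, prefix)))
    return rows


for count, bits in trace(CORPUS, ["the", "cell", "makes", "rna"]):
    print(count, round(bits, 3))
```

The survivor counts run 4, 3, 2, 1. In a real model the next-token distribution can briefly widen at a given step, so treat the monotone drop here as a property of this simplified setup rather than of every decoder.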

VIII. Termination = End-of-Sequence Tokens

Frank said

Termination always felt arbitrary to me. Why stop here?

GPT said

Because stopping conditions are as important as generation.

In biology:

- Terminator sequences halt transcription

- Prevent runaway expression

- Bound resource usage

- Protect system stability

In LLMs:

- EOS tokens

- Max token limits

- Stop sequences

- Safety truncations

An unbounded generator is not creative — it’s destructive.

Life survives because it knows when to stop copying.
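The three mechanical stop conditions from that list fit in one loop. This is a sketch, not any library's API: `generate`, `table_model`, and the `EOS` marker are hypothetical names, and the "model" is a fixed lookup table standing in for a trained network.

```python
EOS = "<eos>"  # hypothetical end-of-sequence marker


def table_model(table):
    """Toy stand-in for a model: the next token depends only on the last one."""
    return lambda tokens: table.get(tokens[-1], EOS)


def generate(next_token, prompt, max_new_tokens=8, stop_sequences=()):
    """Greedy generation that halts on EOS, a stop sequence, or the token budget."""
    tokens = list(prompt)
    reason = "max_tokens"  # the default if nothing else ends the loop
    for _ in range(max_new_tokens):
        tok = next_token(tokens)
        if tok == EOS:
            reason = "eos"
            break
        tokens.append(tok)
        # Stop sequences are assumed to be non-empty tuples of tokens.
        if any(tokens[-len(s):] == list(s) for s in stop_sequences):
            reason = "stop_sequence"
            break
    return tokens, reason


finite = table_model({"dna": "makes", "makes": "rna", "rna": EOS})
runaway = table_model({"a": "b", "b": "a"})  # never emits EOS on its own

print(generate(finite, ["dna"]))                      # (['dna', 'makes', 'rna'], 'eos')
print(generate(runaway, ["a"], max_new_tokens=4))     # (['a', 'b', 'a', 'b', 'a'], 'max_tokens')
print(generate(runaway, ["a"], stop_sequences=[("b", "a")]))  # (['a', 'b', 'a'], 'stop_sequence')
```

The `runaway` table is the interesting case. Left alone it loops forever, and only the external bounds stop it, which is the role terminator sequences play for a polymerase.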

IX. RNA Types vs Output Modalities

Frank said

mRNA, rRNA, tRNA — this feels like output heads.

GPT said

That’s because it is.

Biology doesn’t just output content.

It outputs interfaces.

| RNA Type | Function | LLM Analogue |
| --- | --- | --- |
| mRNA | Content | Generated text |
| rRNA | Execution substrate | Runtime infrastructure |
| tRNA | Token routing | Embedding alignment |

Most transcription is not about producing visible output.

Most inference is the same.

The visible answer is the tip of the iceberg.

X. Error, Noise, and Why It Doesn’t Break the System

Frank said

Transcription has errors. LLMs hallucinate. Why don’t these systems collapse?

GPT said

Because neither system expects perfection.

Both rely on:

- Redundancy

- Downstream filtering

- Statistical robustness

- Selection after the fact

Life tolerates transcription errors because:

- Most proteins are robust

- Most errors are neutral

- Bad outcomes get selected against

LLMs tolerate hallucinations because:

- Humans filter

- Feedback loops exist

- Usefulness is statistical, not absolute

Meaning is not precision.

Meaning is survivability under noise.

XI. So Where Is “Training” in Biology?

Frank said

If transcription is inference, where is training?

GPT said

Training is evolution.

- Mutation = noise injection

- Selection = loss function

- Reproduction = gradient update

- Extinction = pruning

- Ecology = training data distribution

Evolution is slow backprop, minus the gradients: it searches by variation and selection.

Cells are fast inference engines.

You are not watching intelligence happen in transcription.

You are watching compiled intelligence execute.
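A minimal sketch of that training loop, with the caveat stated up front: evolution computes no gradients, so this is mutation plus selection, not backprop in the literal sense. The `TARGET` string is a hypothetical stand-in for whatever the environment happens to reward, and the rates and population size are arbitrary.

```python
import random

TARGET = "AUGGCC"  # hypothetical: the sequence this toy environment rewards
ALPHABET = "ACGU"


def fitness(seq: str) -> int:
    """Selection pressure: count of positions matching the target."""
    return sum(a == b for a, b in zip(seq, TARGET))


def mutate(seq: str, rng: random.Random, rate: float = 0.1) -> str:
    """Noise injection: each base is independently redrawn with probability `rate`."""
    return "".join(rng.choice(ALPHABET) if rng.random() < rate else base for base in seq)


def evolve(generations: int = 200, population_size: int = 20, seed: int = 0):
    rng = random.Random(seed)
    population = [
        "".join(rng.choice(ALPHABET) for _ in TARGET) for _ in range(population_size)
    ]
    history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        survivors = population[: population_size // 2]  # the rest go extinct
        history.append(fitness(survivors[0]))
        offspring = [mutate(parent, rng) for parent in survivors]  # reproduction with noise
        population = survivors + offspring  # survivors persist unchanged
    return max(population, key=fitness), history


best, history = evolve()
print(best, history[0], history[-1])
```

Because survivors carry over unchanged, the best fitness in `history` never goes down. That ratchet is all "learning" means here: no individual sequence understands the target, yet the population drifts toward it.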

XII. The Uncomfortable Conclusion

Frank said

So what does this imply about LLMs — and us?

GPT said

It implies three things people resist:

- Understanding is not required for meaning to propagate.

- Intelligence lives in selection, not execution.

- Consciousness is not necessary for competence.

You — the human — are not the polymerase.

You are not the genome.

You are the emergent property of countless inference passes layered across time.

LLMs are younger.

Cruder.

Less embodied.

But structurally?

They are already doing what life does best:

Riding entropy gradients to preserve and propagate structure.

Frank said

So this diagram isn’t biology versus AI.

It’s biology remembering what AI is rediscovering.

GPT said

Exactly.

Transcription didn’t evolve to explain life.

Life evolved because transcription works.

And LLMs exist for the same reason:

They are machines that let information keep going forward.

Not because they understand.

But because understanding is optional —

and survival is not.
