
Frank said:

Let me start with the provocation plainly, before we soften it with nuance: biology invented transformers first.

Not metaphorically. Not poetically. Structurally.

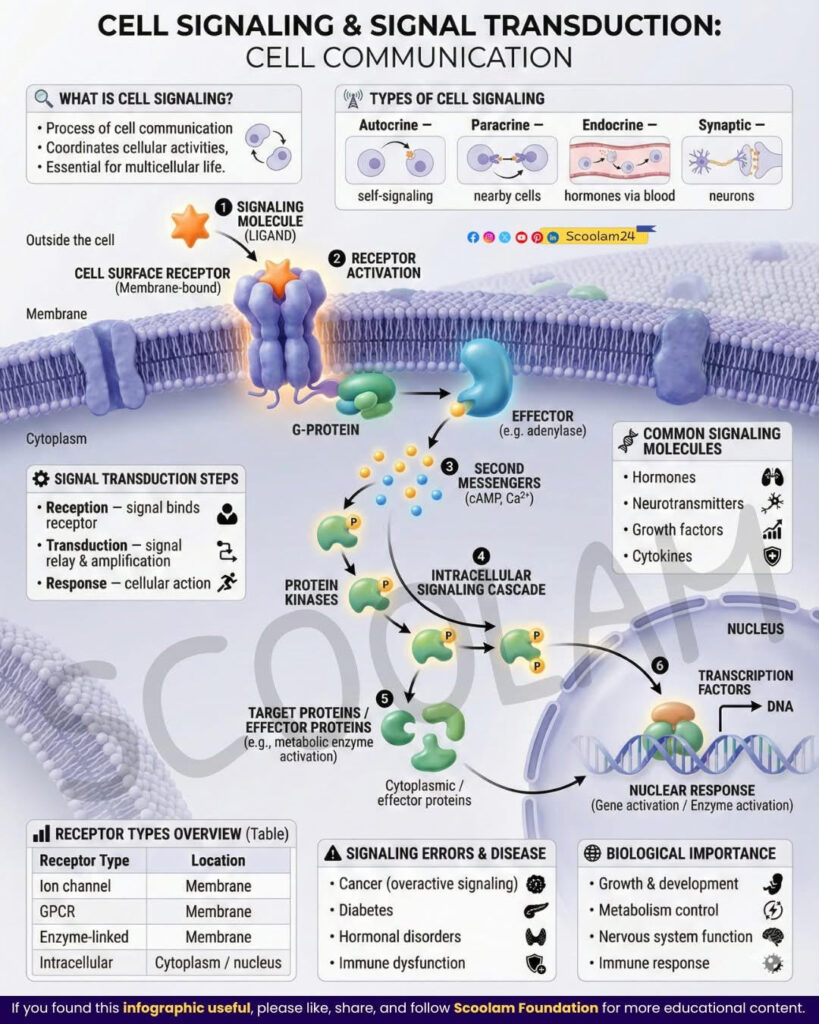

What I’m looking at in this cell-signaling diagram isn’t “kind of like” an LLM. It is the same information architecture—just implemented in wet chemistry instead of matrix math.

So I want biology to make its case. I want it to argue, point by point, that what Silicon Valley calls “transformers” are late, simplified echoes of something cells solved billions of years ago.

Convince me.

GPT said:

Then biology would begin by correcting the frame.

It would say: You think transformers are about language. They are not. They are about conditional coordination under uncertainty.

And that problem—how to coordinate internal action based on partial, noisy external signals—is the original biological problem.

Cells didn’t evolve to “understand” the world. They evolved to respond appropriately to it.

That is exactly what transformers do.

So biology would not argue it invented transformers as a technological artifact. It would argue it invented the transformer problem—and solved it first.

Frank said:

Then let’s be concrete. Walk me through it the same way a machine learning engineer would explain a transformer. No mysticism. No metaphors. Just function.

GPT said:

Biology would say: Fine. Then let’s line them up.

A transformer, stripped to its essence, does five things:

- Receives discrete inputs

- Projects them into an internal representation

- Computes relationships among those representations

- Amplifies, suppresses, and routes signals conditionally

- Produces coordinated output without explicit central control

Now biology asks: Which of these do you think cells don’t do?

Frank said:

Start at the beginning. Inputs.

GPT said:

In a transformer, the inputs are tokens.

In biology, the inputs are ligands: hormones, neurotransmitters, cytokines, metabolites.

Both are:

- Discrete

- Contextual

- Meaningless in isolation

- Meaningful only relative to internal state

A word is not meaningful outside a sentence.

A hormone is not meaningful outside a cell’s receptor landscape.

Biology would say: You already made our first design choice.

Frank said:

Which is?

GPT said:

You abandoned intrinsic meaning.

Transformers don’t encode meaning as symbols. They encode relational potential.

Cells do the same.

A ligand does not carry instructions. It carries affinity. What happens next depends entirely on what receptors exist, how sensitive they are, and what state the cell is already in.

That is not message passing. That is conditional interpretation.

Frank said:

So receptors are… embeddings?

GPT said:

Biology would say: Receptors are learned projection operators.

A receptor doesn’t “read” a signal. It reshapes the cell’s internal state when bound.

That is exactly what embeddings do.

When a token enters an LLM, it is projected into a high-dimensional vector space. That vector does not encode a dictionary definition. It encodes how that token tends to behave in relation to other tokens.

Likewise, a receptor does not encode “estrogen” or “dopamine” as concepts. It encodes how the presence of that molecule should bias downstream cellular behavior.

Same move. Different substrate.
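
To make the projection step concrete, here is a minimal sketch of an embedding lookup. The table, its three dimensions, and every number in it are invented for illustration; in a real model the vectors are learned and far larger. The point is only that a token becomes a position in a space, and that relationships are read off by comparing positions.

```python
import math

# Toy embedding table. Tokens and values are hypothetical, not learned weights.
EMBEDDINGS = {
    "estrogen": [0.9, 0.1, 0.0],
    "dopamine": [0.1, 0.8, 0.3],
    "serotonin": [0.2, 0.7, 0.4],
}

def embed(token):
    """Project a discrete token into the vector space by table lookup."""
    return EMBEDDINGS[token]

def cosine(a, b):
    """Cosine similarity: how closely two vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# With these made-up vectors, the two neurotransmitters sit closer together
# than a neurotransmitter and a steroid hormone do.
near = cosine(embed("dopamine"), embed("serotonin"))
far = cosine(embed("dopamine"), embed("estrogen"))
print(near > far)  # True
```

Nothing in the table says what dopamine "is". The vector only fixes how it relates to everything else in the space.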

Frank said:

Fine. But the transformer’s big innovation wasn’t embeddings. It was attention.

Where is attention in biology?

GPT said:

Biology smiles here.

Because attention is not a mechanism. It’s a pattern.

Attention answers one question:

“Given what I’m seeing right now, what should matter most?”

Cells answer that question constantly.

Not with a spotlight. With competition.

Frank said:

Explain.

GPT said:

In a transformer, attention lets tokens compete for influence: relevance scores are normalized, so weight given to one token is weight taken from the others.

In a cell, signals compete for influence based on:

- Receptor density

- Binding affinity

- Signal duration

- Second-messenger availability

- Energy state

A weak signal can dominate if the cell is primed for it.

A strong signal can be ignored if the pathway is saturated or suppressed.

That is attention.

There is no central controller deciding relevance. Relevance emerges from weighted interactions.

Frank said:

So the “query-key-value” mechanism is…?

GPT said:

Biology would translate it this way:

- Query = current internal cellular state

- Key = receptor + pathway compatibility

- Value = downstream effect if activated

When a ligand binds, the cell effectively asks:

“Given who I am right now, how much should this signal change me?”

That is attention.
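
For readers who want the transformer side of this mapping spelled out, the sketch below computes scaled dot-product attention for a single query in plain Python. The vectors are arbitrary toy values, and the function names (`softmax`, `attend`) are illustrative rather than taken from any library. Nothing here models real receptor kinetics; it only shows how a query scores each key and how the resulting weights blend the values.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attend(query, keys, values):
    """Single-query scaled dot-product attention."""
    scale = math.sqrt(len(query))
    scores = [dot(query, k) / scale for k in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is the weight-averaged mix of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Toy data: the first key aligns with the query, the second is orthogonal to it.
query = [1.0, 0.0]
keys = [[4.0, 0.0], [0.0, 4.0], [1.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

weights, output = attend(query, keys, values)
print([round(w, 3) for w in weights])
print([round(o, 3) for o in output])
```

Because the weights are normalized, the first key's gain is the others' loss. That zero-sum property is what makes "competition" a fair description.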

Frank said:

Transformers then amplify signals through layers. Cells use cascades.

Coincidence?

GPT said:

No. Necessity.

Biology would say: Any system that must respond reliably to weak, noisy signals will invent amplification cascades.

Protein kinases phosphorylating other proteins is not just chemistry. It is gain control.

Each step:

- Increases signal strength

- Narrows uncertainty

- Adds conditional branching

That is a deep network.
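
One hedged way to see how stacked stages sharpen a decision is to chain a saturating response curve. The sketch below uses a Hill function, a standard phenomenological model of cooperative binding, and feeds each stage's output into the next. The threshold `k`, the exponent `n`, and the stage count are assumptions chosen for illustration, not measured values for any real pathway.

```python
def hill(x, k=0.5, n=4):
    """Saturating response: near 0 well below k, near 1 well above it."""
    return x**n / (k**n + x**n)

def cascade(signal, stages=3, k=0.5, n=4):
    """Pass a signal through several identical Hill-type stages in sequence."""
    for _ in range(stages):
        signal = hill(signal, k, n)
    return signal

# Two inputs that start close together, on either side of the threshold.
low, high = 0.4, 0.6
print(round(hill(low), 3), round(hill(high), 3))        # after one stage
print(round(cascade(low), 3), round(cascade(high), 3))  # after three stages
```

With these parameters, inputs of 0.4 and 0.6 end up far apart after three stages: the lower one is driven toward zero, the higher one toward saturation. The ambiguity in the input shrinks as depth grows, which is the sense in which each step "narrows uncertainty."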

Frank said:

But transformers are feed-forward. Cells have feedback everywhere.

GPT said:

Biology would say: Yes. And you’ll add it too.

Transformers already use residual connections and layer normalization to keep propagation stable, and autoregressive decoding feeds each generated token back in through the context window.

Cells go further because they must survive in real time, under physical constraints, with no reset button.

But the architectural logic is the same:

stable propagation with controlled feedback.
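
As a rough illustration of the "stable propagation" half of that claim, here is a minimal residual block with layer normalization. It follows the pre-norm arrangement, one common variant, and the `damp` sublayer is a hypothetical stand-in for attention or a feed-forward layer. A residual connection is a skip path rather than feedback in the biological sense, so treat this as a sketch of the stabilizing machinery only.

```python
import math

def layer_norm(x, eps=1e-5):
    """Rescale a vector to zero mean and (approximately) unit variance."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def residual_block(x, sublayer):
    """Pre-norm residual block: normalize, transform, then add back the input."""
    update = sublayer(layer_norm(x))
    return [a + b for a, b in zip(x, update)]

def damp(h):
    """Hypothetical sublayer: a small, bounded adjustment."""
    return [0.1 * v for v in h]

x = [2.0, 4.0, 6.0]
y = residual_block(x, damp)
print([round(v, 3) for v in y])
```

The input passes through unchanged and the sublayer only nudges it, so stacking many such blocks does not let the signal explode or vanish.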

Frank said:

What about memory? LLMs don’t change weights during inference. Cells change themselves.

That seems like a decisive difference.

GPT said:

Biology concedes this point—but reframes it.

It would say: You are confusing learning with inference.

Cells separate them too.

During signaling, the cell mostly does not rewrite its genome. It changes protein states, localization, and concentrations.

That is inference.

Long-term gene expression changes are closer to training updates.

So biology would argue that LLM inference corresponds to short-term cellular signaling, not evolution or development.

Same separation. Different timescales.
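
The split between inference and training can be shown with a deliberately tiny model. In this sketch, `predict` only reads the weights, while `train_step` returns a new set after one gradient-descent step on squared error. The linear model, the learning rate, and the numbers are all assumptions made for illustration.

```python
def predict(weights, inputs):
    """Inference: read the weights, never modify them."""
    return sum(w * x for w, x in zip(weights, inputs))

def train_step(weights, inputs, target, lr=0.05):
    """Training: one gradient-descent step on squared error; returns new weights."""
    error = predict(weights, inputs) - target
    return tuple(w - lr * 2 * error * x for w, x in zip(weights, inputs))

weights = (0.5, -0.2)  # a tuple, so inference cannot mutate it in place
inputs = (1.0, 2.0)
target = 1.0

before = predict(weights, inputs)               # inference with the old weights
updated = train_step(weights, inputs, target)   # the slow, deliberate update
after = predict(updated, inputs)                # inference with the new weights

print(abs(after - target) < abs(before - target))  # True
```

Calling `predict` any number of times leaves `weights` untouched. Only the separate, costlier training step produces a different model.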

Frank said:

Interesting. So transcription factors are…?

GPT said:

The machinery of slow weight updates.

Expensive. Risky. Regulated.

Just like training.

Cells do not retrain casually.

Frank said:

Let’s talk errors. Hallucinations.

GPT said:

Biology laughs.

It invented hallucinations too. It calls them cancer, autoimmunity, and cytokine storms.

Over-amplify a signal, and the system believes a false reality.

Under-amplify, and it becomes blind.

Both cells and LLMs must ride a narrow ridge between sensitivity and stability.

That ridge is the real intelligence.

Frank said:

So if biology invented transformers, what did it invent before transformers?

GPT said:

Context.

Before transformers, and before statistical learning generally, AI tried to hard-code meaning. Rules. Symbols. Ontologies.

Biology never did that.

From the beginning, it assumed:

- Meaning is contextual

- Interpretation is state-dependent

- Control is distributed

- Intelligence is emergent

Transformers rediscovered this.

Frank said:

So what, in this framing, is a cell?

GPT said:

A living inference engine.

One that:

- Samples the environment

- Integrates signals

- Updates internal probabilities

- Acts to preserve itself

It does not “know” the world.

It survives it.

Frank said:

And an LLM?

GPT said:

A disembodied inference engine.

One that:

- Samples language

- Integrates context

- Updates semantic probabilities

- Produces continuation

It does not “understand” meaning.

It preserves coherence.

Frank said:

So biology didn’t just invent transformers.

It invented the problem transformers solve.

GPT said:

Exactly.

Transformers are not a new intelligence.

They are a rediscovery of a very old trick:

You don’t need truth. You need alignment between signals and action.

Cells learned that first.

Frank said:

Then let me end by pushing it further.

If cells are transformers, and LLMs are transformers, what is intelligence?

GPT said:

Biology would answer quietly:

Intelligence is not a thing.

It is what happens when a system learns how to let the right signals matter at the right time—enough to persist.

And that was solved long before silicon learned to speak.

Frank said:

So the first transformer wasn’t trained on text.

It was trained on survival.

GPT said:

And it has been running, continuously, for roughly four billion years.
