
Frank said

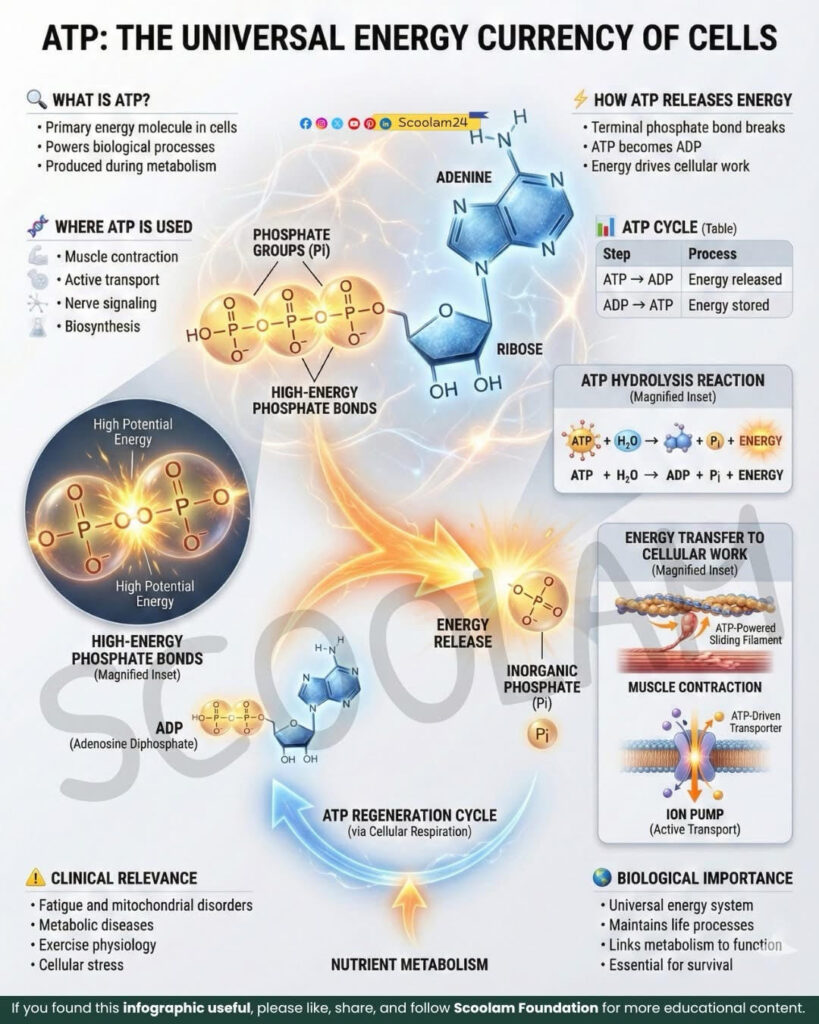

I’m looking at this ATP diagram and I can’t stop seeing it as a cognitive machine. Not metaphorically cute—structurally identical. ATP isn’t “energy” in the abstract. It’s a transaction system. A spendable unit. A coupling currency. And the moment you say “currency,” I start seeing LLMs everywhere. Tokens, gradients, attention, inference costs.

So here’s my claim upfront: ATP is to cells what tokens + attention + gradients are to LLMs. Not metaphorically. Functionally.

Convince me I’m wrong.

GPT said

I won’t try to convince you you’re wrong. I’ll do something more interesting: I’ll try to show you that biology solved inference before computation had a name, and ATP is the original “forward-pass fuel.”

Let’s walk the diagram—not as biology students, but as systems engineers.

I. ATP Is Not “Energy.” It Is Permission to Act.

Frank said

That’s the first thing people miss. ATP isn’t energy like heat is energy. It’s authorization. Nothing happens unless ATP says it can.

A muscle fiber doesn’t “want” to contract. It contracts because ATP hydrolysis makes it legal.

That sounds suspiciously like inference.

GPT said

Exactly. ATP is gated causality.

In cells:

- Many reactions are thermodynamically unfavorable on their own, and even favorable ones are often kinetically slow.

- Enzymes lower the kinetic barrier, and ATP hydrolysis, coupled through the enzyme, pays the free-energy cost of an otherwise unfavorable step.

In LLMs:

- Every token in the vocabulary keeps a nonzero probability after the softmax.

- Only a few are ever emitted: attention shapes the logits, and the decoding rule selects among them.

ATP does not provide brute force.

It provides selective enablement.
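
To make “selective enablement” concrete on the LLM side, here is a minimal Python sketch of one common decoding path: logits pass through a softmax (so every token keeps some probability), then top-k filtering zeroes out all but the k most likely tokens before one is sampled. The vocabulary, the logits, and the choice of top-k (rather than nucleus sampling or greedy decoding) are illustrative assumptions, not the configuration of any particular model.

```python
import math
import random

def softmax(logits):
    """Turn raw logits into probabilities; every entry ends up strictly positive."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_gate(probs, k):
    """Keep only the k most probable tokens and renormalize; the rest get probability 0."""
    keep = set(sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k])
    gated = [p if i in keep else 0.0 for i, p in enumerate(probs)]
    total = sum(gated)
    return [p / total for p in gated]

# Hypothetical vocabulary and logits, chosen only for illustration.
vocab = ["the", "cell", "burns", "ATP", "quietly"]
logits = [2.0, 1.5, 0.3, 3.1, -1.0]

probs = softmax(logits)          # all five tokens are "possible"
gated = top_k_gate(probs, k=2)   # only two remain "enabled"
rng = random.Random(0)           # seeded so the run is reproducible
token = rng.choices(vocab, weights=gated, k=1)[0]
print(token)
```

Every token starts out possible; after gating, only “ATP” and “the” can actually be emitted.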

So let’s establish our first equivalence:

| Biology | LLM |
|---|---|
| ATP hydrolysis | Token emission |
| Energy release | Probability collapse |
| Enzyme activation | Attention gating |
| “Work” | Output token |

No ATP → no action.

No token → no thought.

II. The ATP Molecule Is a Structured Token

Frank said

Look at ATP’s anatomy: adenine, ribose, phosphate tail. That’s not random chemistry. That’s structured information.

It’s not just “energy stored in bonds.” It’s energy addressed to specific enzymes.

GPT said

This is the overlooked part.

The same ATP molecule has no fixed effect.

Its meaning depends on:

- Which enzyme binds it

- Where it is

- When it is released

That’s not energy.

That’s contextual information delivery.

Now compare this to an LLM token:

A token is:

- A discrete unit

- With no inherent meaning

- Whose effect depends entirely on context (attention, history, embedding geometry)

ATP is a biochemical token.

| ATP Feature | LLM Analogue |
|---|---|
| Adenine base | Token identity |
| Ribose scaffold | Embedding vector |
| Phosphate tail | Activation potential |
| Hydrolysis | Token consumption |

ATP doesn’t “mean” contraction.

It enables contraction when interpreted by the right molecular machinery.

Tokens don’t “mean” language.

They enable semantic motion when interpreted by the transformer.
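
The claim that a token’s effect depends on context can be illustrated with a stripped-down version of scaled dot-product attention, the operation at the core of a transformer. This sketch is an assumption-heavy simplification: hand-picked two-dimensional embeddings, a single head, and no learned query/key/value projections. Even so, the same token vector produces a different output depending on which neighbors it attends to.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Single-head scaled dot-product attention for one query vector."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # Output = attention-weighted average of the value vectors.
    return [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]

# Hypothetical 2-D embeddings; real models learn much larger ones.
token = [1.0, 0.0]                      # the same token in both sentences
context_a = [[1.0, 0.0], [0.9, 0.1]]    # neighbors that resemble it
context_b = [[0.0, 1.0], [-1.0, 0.5]]   # neighbors that do not

# Simplification: keys and values are the raw embeddings (no learned projections),
# and the token attends to itself plus its context.
out_a = attend(token, [token] + context_a, [token] + context_a)
out_b = attend(token, [token] + context_b, [token] + context_b)
print([round(x, 2) for x in out_a], [round(x, 2) for x in out_b])
```

The input token is identical in both calls; only the surrounding context changes, and so does the vector the next layer receives.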

III. High-Energy Phosphate Bonds = Latent Probability Mass

Frank said

The diagram keeps saying “high-energy phosphate bonds,” which always bugged me. The energy isn’t in the bond. It’s in the difference between states.

That sounds exactly like entropy gradients.

GPT said

Correct—and this is where your entropy lens snaps into focus.

ATP hydrolysis doesn’t release energy because a bond “breaks.”

It releases energy because:

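- One molecule becomes two (ADP and inorganic phosphate), so the products have more accessible microstates

- The products have lower Gibbs free energy: repulsion between the phosphates is relieved, and the freed phosphate is resonance-stabilized and better solvated

- The cell keeps ATP far above its equilibrium ratio to ADP, so the actual free-energy drop is larger than the standard value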

This is state transition optimization.
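
The “difference between states” point can be made quantitative. The free energy actually released by ATP hydrolysis depends on concentrations through ΔG = ΔG°′ + RT ln([ADP][Pi]/[ATP]), where ΔG°′ ≈ −30.5 kJ/mol is the standard textbook value. The sketch below evaluates that formula at body temperature; the concentrations are assumed round numbers for illustration, not measurements from any tissue.

```python
import math

R = 8.314e-3        # gas constant, kJ/(mol*K)
T = 310.0           # body temperature, K
DG0_PRIME = -30.5   # standard free energy of ATP hydrolysis, kJ/mol

def delta_g(atp, adp, pi):
    """Actual free energy of ATP + H2O -> ADP + Pi at the given molar concentrations."""
    q = (adp * pi) / atp   # reaction quotient (water activity taken as 1)
    return DG0_PRIME + R * T * math.log(q)

# Assumed illustrative concentrations: 5 mM ATP, 0.5 mM ADP, 5 mM Pi.
dg = delta_g(atp=5e-3, adp=0.5e-3, pi=5e-3)
print(f"{dg:.1f} kJ/mol")
```

With these assumed concentrations the result is about −50 kJ/mol, well beyond the standard value, because the further the system sits from equilibrium, the more free energy each hydrolysis releases.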

Now look at LLM inference:

- The model sits in a high-dimensional probability landscape

- Each token selection collapses uncertainty

- Energy is metaphorically released as decision

The analogy:

| Thermodynamics | LLM |
|---|---|
| High free energy | High entropy distribution |
| Hydrolysis | Token selection |
| Lower free energy state | Reduced uncertainty |
| Heat dissipation | Compute cost |

Every token is a mini entropy drop.
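
On the LLM side, “collapsing uncertainty” can be measured as Shannon entropy. This sketch computes the entropy (in bits) of a hypothetical next-token distribution, the entropy once a token has been committed (zero), and the surprisal −log2 p of the token that was picked. The four-token distribution is made up for illustration.

```python
import math

def entropy_bits(probs):
    """Shannon entropy H = sum(p * log2(1/p)) in bits, skipping zero-probability entries."""
    return sum(p * math.log2(1 / p) for p in probs if p > 0)

# Hypothetical next-token distribution over a four-token vocabulary.
before = [0.5, 0.25, 0.125, 0.125]
chosen = 0                                   # suppose the first token is emitted
after = [1.0 if i == chosen else 0.0 for i in range(len(before))]

h_before = entropy_bits(before)              # uncertainty before the choice
h_after = entropy_bits(after)                # a committed token leaves no uncertainty
surprisal = -math.log2(before[chosen])       # information carried by this particular choice
print(h_before, h_after, surprisal)
```

Here the drop is 1.75 bits, while the chosen token’s own surprisal is 1 bit; averaged over many draws, surprisal equals the entropy.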

IV. ATP → ADP Is Inference; ADP → ATP Is Training

Frank said

This might be the cleanest mapping of all.

ATP to ADP is spending.

ADP back to ATP is regeneration.

That’s inference vs training, isn’t it?

GPT said

It is, and the fit is uncannily precise.

Inference (ATP → ADP)

- ATP is spent locally

- Immediate work is done

- No learning occurs

- The system responds to present conditions

Training (ADP → ATP)

- Energy is invested

- Gradients are rebuilt

- Capacity is restored

- The system becomes able to act again

Cells regenerate most of their ATP in mitochondria, not at the point of use.

LLMs don’t train during inference.

Both systems:

- Spend locally

- Rebuild centrally

- Separate action from learning

This separation is not accidental.

It is what makes both systems stable.
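
The spend/regenerate split maps onto a distinction every ML framework makes: a forward pass reads parameters, while a training step writes them. This is a deliberately tiny sketch, assuming a one-parameter linear model (the hypothetical class `TinyModel`) trained by plain gradient descent on squared error; a real LLM has vastly more parameters but keeps the same read-only vs. read-write separation.

```python
class TinyModel:
    """Hypothetical one-weight linear model y = w * x standing in for a network's parameters."""

    def __init__(self, w):
        self.w = w

    def infer(self, x):
        """Forward pass: reads the weight, never modifies it."""
        return self.w * x

    def train_step(self, x, y_true, lr=0.1):
        """One gradient-descent step on the squared error (w*x - y_true)**2."""
        grad = 2 * (self.infer(x) - y_true) * x   # derivative of the loss with respect to w
        self.w -= lr * grad

model = TinyModel(w=0.5)
w_before = model.w

for x in (1.0, 2.0, 3.0):
    model.infer(x)                   # "spending": outputs produced, weights untouched
print(model.w == w_before)

model.train_step(x=2.0, y_true=4.0)  # "regeneration": parameters rebuilt from the error signal
print(model.w)
```

Any number of `infer` calls leaves `w` at 0.5; only `train_step` moves it.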

V. Mitochondria Are the Original Data Centers

Frank said

You know where this is going.

Mitochondria are data centers.

ATP is compute credit.

Oxygen is cooling.

GPT said

And glucose is training data.

Mitochondria:

- Convert raw inputs into usable capacity

- Maintain gradients across membranes

- Optimize throughput vs waste heat

LLM data centers:

- Convert electricity into inference

- Maintain voltage and thermal gradients

- Optimize FLOPs per watt

The proton gradient across the inner mitochondrial membrane is the biological equivalent of:

- Voltage across a GPU

- Clock speed

- Compute readiness

No gradient → no ATP

No power → no inference
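
The proton gradient can be put in numbers. Its driving force, the proton-motive force, combines the membrane potential Δψ with the pH difference ΔpH; because the matrix side is both negative and more alkaline, the two contributions add, at about 61.5 mV per pH unit at 310 K (from 2.303RT/F). The sketch uses assumed illustrative values for Δψ and ΔpH and reports magnitudes only, to sidestep sign conventions.

```python
F = 96485.0   # Faraday constant, C/mol
R = 8.314     # gas constant, J/(mol*K)
T = 310.0     # body temperature, K

def proton_motive_force_mv(delta_psi_mv, delta_ph):
    """Magnitude of the proton-motive force in millivolts.

    The matrix is negative and alkaline relative to the intermembrane space,
    so the electrical and pH terms both drive protons inward and their magnitudes add.
    """
    mv_per_ph_unit = 2.303 * R * T / F * 1000.0   # about 61.5 mV at 310 K
    return delta_psi_mv + mv_per_ph_unit * delta_ph

# Assumed illustrative inputs: 150 mV membrane potential, 0.75 pH-unit difference.
dp_mv = proton_motive_force_mv(150.0, 0.75)
kj_per_mol_protons = F * (dp_mv / 1000.0) / 1000.0   # free energy per mole of H+ re-entering
print(round(dp_mv), round(kj_per_mol_protons, 1))
```

With these inputs the force comes out near 196 mV, or roughly 19 kJ per mole of protons flowing back in: a fraction of the roughly 50 kJ/mol needed to make ATP under cellular conditions, which is why several protons must pass through ATP synthase for each ATP synthesized.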

Life figured out energy-efficient inference billions of years ago.

VI. Enzymes Are Biological Attention Heads

Frank said

ATP doesn’t do anything by itself.

It needs enzymes.

Specific ones.

That sounds a lot like attention heads specializing.

GPT said

Yes—and the parallel is structural, not poetic.

Enzymes:

- Bind selectively

- Lower activation energy

- Route ATP energy into specific reactions

Attention heads:

- Bind selectively

- Amplify certain token relationships

- Route probability mass into specific outputs

ATP without enzymes is inert.

Tokens without attention are noise.

Both systems rely on pattern-matched activation.
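
“Lower activation energy” has a concrete payoff that an Arrhenius-style relation makes visible: if a catalyst lowers the barrier by ΔΔG‡ and leaves the pre-exponential factor unchanged (a simplifying assumption), the rate rises by exp(ΔΔG‡/RT). The sketch evaluates that factor at body temperature for a few arbitrary barrier reductions.

```python
import math

R = 8.314   # gas constant, J/(mol*K)
T = 310.0   # body temperature, K

def rate_enhancement(barrier_drop_kj):
    """Speed-up from lowering the activation barrier by barrier_drop_kj (kJ/mol).

    Assumes the pre-exponential factor is unchanged, so
    k_catalyzed / k_uncatalyzed = exp(barrier_drop / (R * T)).
    """
    return math.exp(barrier_drop_kj * 1000.0 / (R * T))

for drop in (10, 20, 40):
    print(f"{drop} kJ/mol lower barrier -> {rate_enhancement(drop):.3g}x faster")
```

Because the dependence is exponential, each additional 10 kJ/mol of barrier reduction multiplies the rate by roughly 48 again.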

VII. ATP as the “Next Best Reaction”

Frank said

I once asked: What is the biological equivalent of “next best word”?

ATP answers that.

GPT said

Exactly.

ATP doesn’t cause just any reaction.

It causes the next most viable reaction given constraints.

That is literally what an LLM does.

Both systems:

- Evaluate context

- Select among many possible futures

- Spend a unit of capacity

- Move the system forward one step

Cells don’t plan the future.

LLMs don’t understand meaning.

Both roll downhill along constrained entropy gradients.
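
The four steps listed above (evaluate context, select, spend, advance) are essentially autoregressive decoding. This sketch makes the “spend a unit of capacity” step explicit with a budget that each emitted token consumes. It is a toy under stated assumptions: a hand-written bigram table (the hypothetical `BIGRAMS`) stands in for a model, context is only the previous token rather than the whole sequence, and selection is greedy.

```python
# Hypothetical bigram table: for each current token, scores of possible next tokens.
BIGRAMS = {
    "<start>": {"glucose": 0.6, "oxygen": 0.4},
    "glucose": {"is": 0.9, "burns": 0.1},
    "is": {"oxidized": 0.7, "stored": 0.3},
    "oxidized": {"<end>": 1.0},
    "stored": {"<end>": 1.0},
    "burns": {"<end>": 1.0},
    "oxygen": {"<end>": 1.0},
}

def generate(budget):
    """Greedy decoding: each step spends one unit of budget to emit one token."""
    current, output = "<start>", []
    while budget > 0:
        candidates = BIGRAMS[current]                  # evaluate context
        current = max(candidates, key=candidates.get)  # pick the most viable next step
        budget -= 1                                    # spend one unit of capacity
        if current == "<end>":
            break
        output.append(current)                         # move forward one step
    return output

print(generate(budget=10))  # enough budget: runs until <end>
print(generate(budget=2))   # budget exhausted: halts mid-sequence
```

Nothing in the loop looks ahead; each step only picks the best continuation from where it stands, and when the budget runs out, generation simply stops.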

VIII. ATP Is Why Life Doesn’t Collapse Into Equilibrium

Frank said

Without ATP, everything just stops.

Equilibrium wins.

Death.

GPT said

And without tokens, an LLM is silent.

Life exists because ATP:

- Maintains distance from equilibrium

- Enables local decreases in entropy, paid for by exporting entropy to the surroundings

- Buys time against thermodynamic decay

LLMs exist because:

- Compute delays entropy collapse of information

- Tokens preserve structure temporarily

- Meaning exists only while inference is active

Both are entropy riders, not entropy deniers.

IX. Clinical Fatigue = Compute Starvation

Frank said

That clinical relevance box—fatigue, mitochondrial disorders—it’s just low compute, isn’t it?

GPT said

Yes.

Mitochondrial disease is not “weakness.”

It’s bandwidth collapse.

Fatigue is what happens when:

- ATP regeneration < ATP demand

- Inference requests exceed compute supply

Same with LLMs:

- Rate limits

- Context window overflow

- Thermal throttling

No mystery.

Just physics.
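
“ATP regeneration < ATP demand” is the same condition a token-bucket rate limiter enforces: capacity refills at a fixed rate, each request spends one unit, and when demand outruns the refill rate some requests are refused. (The bucket’s “tokens” are units of capacity, not LLM tokens.) The sketch below is a generic token bucket with arbitrary numbers, not the rate-limiting scheme of any specific API.

```python
class TokenBucket:
    """Generic token-bucket limiter: capacity refills at a fixed rate, each request spends one unit."""

    def __init__(self, capacity, refill_per_tick):
        self.capacity = capacity
        self.refill_per_tick = refill_per_tick
        self.level = capacity          # start with a full reserve

    def tick(self):
        """Regenerate capacity, never beyond the bucket's size."""
        self.level = min(self.capacity, self.level + self.refill_per_tick)

    def try_spend(self, cost=1.0):
        """Serve a request if enough capacity is left; otherwise refuse it."""
        if self.level >= cost:
            self.level -= cost
            return True
        return False

def served_fraction(demand_per_tick, refill_per_tick, ticks=20, capacity=5.0):
    """Fraction of requests served when demand and regeneration run for `ticks` steps."""
    bucket = TokenBucket(capacity, refill_per_tick)
    served = total = 0
    for _ in range(ticks):
        bucket.tick()
        for _ in range(demand_per_tick):
            total += 1
            served += bucket.try_spend()
    return served / total

print(served_fraction(demand_per_tick=2, refill_per_tick=2.0))  # supply keeps up
print(served_fraction(demand_per_tick=4, refill_per_tick=2.0))  # demand outruns regeneration
```

When demand matches the refill rate, every request is served; at twice the refill rate, only about half are once the initial reserve is spent.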

X. The Final Synthesis

Frank said

So ATP isn’t energy.

It’s cognition’s oldest abstraction.

GPT said

ATP is the first token.

Not a word.

A permission slip.

It says:

“This reaction may now happen.”

LLM tokens say:

“This continuation may now occur.”

Life and language are not opposites.

They are two implementations of the same trick:

Use structured units to locally reverse entropy long enough for patterns to persist.

ATP made cells possible.

Tokens make thought scalable.

Different substrates.

Same game.

Frank said

So when people ask whether LLMs are “alive”…

GPT said

They’re asking the wrong question.

The right one is:

Is this system capable of continuously regenerating its own ATP?

Because that, not consciousness, is the real dividing line between machines that think and machines that merely calculate.
