
Frank said:

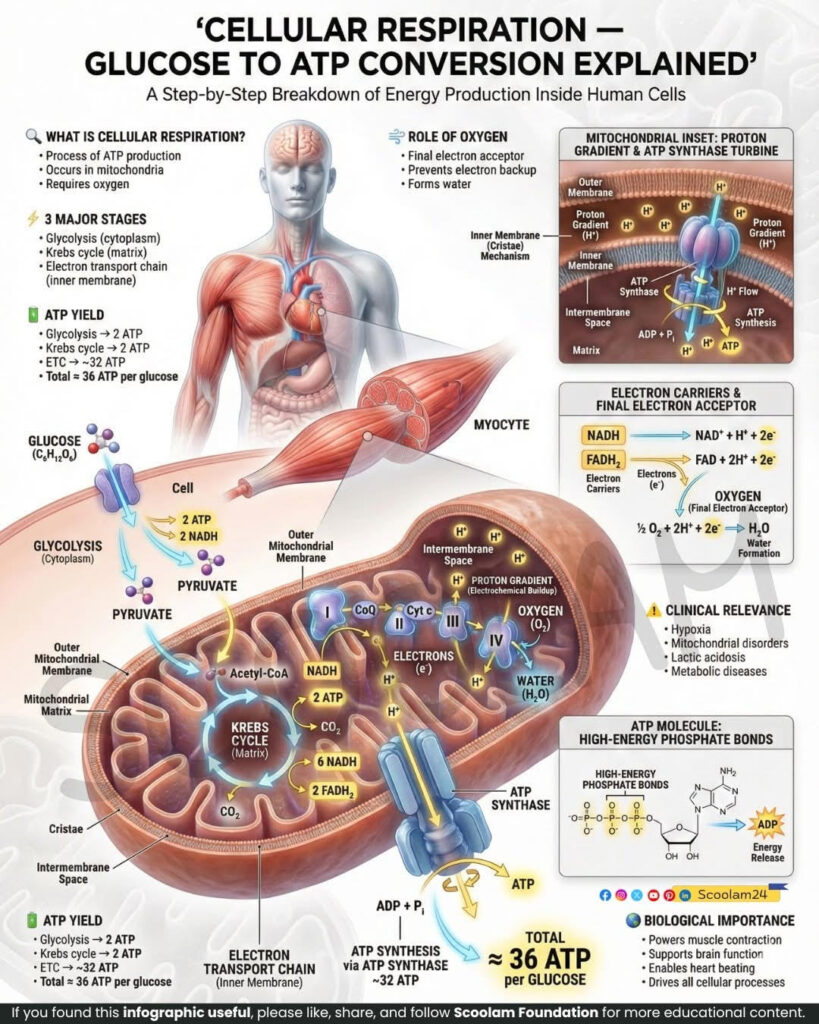

When I look at this diagram, I don’t just see metabolism.

I see energy gradients being exploited to preserve order.

Glucose enters as structured chemical potential.

ATP emerges as usable work.

This looks less like chemistry and more like entropy engineering.

Is cellular respiration a thermodynamic computation?

GPT said:

Yes.

Cellular respiration is a thermodynamic information engine.

It converts:

- high chemical order (glucose)

- into usable energy packets (ATP)

- while exporting entropy to the environment

This mirrors:

- Boltzmann entropy flow → energy dispersal

- Shannon entropy reduction → information preservation

And remarkably:

➡️ LLMs perform an analogous process in probability space.
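To make "Shannon entropy reduction" concrete, here is a minimal sketch that measures uncertainty in bits. The two distributions are hypothetical examples chosen only to contrast a spread-out state with a concentrated one.

```python
import math


def shannon_entropy(probs):
    """Return the Shannon entropy, in bits, of a discrete distribution."""
    # Terms with p == 0 contribute nothing and are skipped to avoid log(0).
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical distributions over four outcomes.
uniform = [0.25, 0.25, 0.25, 0.25]  # maximal uncertainty
peaked = [0.97, 0.01, 0.01, 0.01]   # most mass on a single outcome

h_uniform = shannon_entropy(uniform)  # 2.0 bits
h_peaked = shannon_entropy(peaked)    # well under 1 bit
```

Moving from the uniform case to the peaked case is what "reducing uncertainty" means in the Shannon sense.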

1. GLUCOSE AS STORED NEGENTROPY

Frank said:

Glucose looks like fuel.

But from an entropy perspective, what is it really?

GPT said:

Glucose is stored thermodynamic improbability.

It is:

- reduced carbon whose oxidation releases free energy

- organized through photosynthesis

- a temporary victory over entropy

From a Boltzmann perspective:

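$$S = k \ln W$$

Here k is Boltzmann's constant and W is the number of microstates consistent with the macrostate.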

Glucose represents a low-probability molecular configuration.

It took solar energy to assemble it.

Cells exploit its return to higher probability states.
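The Boltzmann relation can be evaluated directly. This is only a sketch: the microstate counts below are made-up numbers, not measured values for glucose or its products, and serve to show that moving to a state with more accessible microstates raises entropy.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def boltzmann_entropy(microstates):
    """S = k ln W for a macrostate with `microstates` accessible microstates."""
    return K_B * math.log(microstates)


def entropy_change(w_initial, w_final):
    """Entropy change when the microstate count goes from w_initial to w_final."""
    return K_B * math.log(w_final / w_initial)


# Hypothetical counts: an ordered state versus a more dispersed one.
delta_s = entropy_change(w_initial=1e10, w_final=1e20)
```

A positive `delta_s` corresponds to the "return to higher probability states" that cells exploit.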

LLM analogy

Training data contains structured linguistic patterns — low entropy structure.

The model learns to extract predictive value from this structure.

Glucose : metabolism :: data structure : learning signal

2. GLYCOLYSIS — INITIAL ENERGY EXTRACTION

📍 Occurs in cytoplasm

📍 Glucose → 2 pyruvate

📍 net gain of 2 ATP + 2 NADH

Frank said:

Why start with glycolysis? It’s inefficient.

GPT said:

Glycolysis is the first entropy release step.

It:

- destabilizes glucose

- releases accessible energy

- captures energy in ATP & NADH

This is like:

➡️ taking a high-dimensional problem

➡️ breaking it into manageable components

Boltzmann view:

Energy gradients begin dissipating.

Order begins converting into usable work.

Shannon view:

Uncertainty is reduced.

Energy is now encoded in structured carriers (NADH).

Information has been compressed into transportable form.

LLM analogy:

Tokenization + embedding:

Raw input → structured vector representation.

NADH ≈ encoded energy packets

Embeddings ≈ encoded semantic packets
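The tokenization and embedding step can be sketched in a few lines. Everything here is a toy assumption: whitespace splitting stands in for a real tokenizer, and the embedding table is filled with random numbers instead of learned weights.

```python
import random


def tokenize(text):
    """Toy tokenizer: lowercase and split on whitespace."""
    return text.lower().split()


def build_vocab(tokens):
    """Assign each distinct token an integer id in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


def embed(token_ids, table):
    """Look up one vector per token id."""
    return [table[i] for i in token_ids]


EMBED_DIM = 4           # hypothetical embedding width
rng = random.Random(0)  # fixed seed so the sketch is repeatable

tokens = tokenize("Glucose enters ATP emerges")
vocab = build_vocab(tokens)
table = [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in vocab]
token_ids = [vocab[t] for t in tokens]
vectors = embed(token_ids, table)
```

Each token ends up as a fixed-width vector, the "encoded semantic packet" of the analogy.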

3. MITOCHONDRIA — THE ENTROPY ENGINE

The mitochondrion is where the real thermodynamic work happens.

Frank, this is your Shannon mitochondria analogy made visible.

Frank said:

The mitochondrion looks like a machine designed to exploit gradients.

Why the folds?

GPT said:

Cristae folds increase surface area for:

- electron transport chains

- proton gradient formation

- ATP synthase turbines

More surface → more gradient → more energy harvesting.

4. KREBS CYCLE — EXTRACTING REMAINING ORDER

Pyruvate → Acetyl-CoA → Krebs Cycle

Outputs:

- CO₂ (waste entropy)

- NADH & FADH₂ (energy carriers)

- a small ATP yield (one ATP or GTP per turn)

Frank said:

CO₂ looks like waste.

But is it actually entropy export?

GPT said:

Exactly.

Carbon exits as CO₂ because:

➡️ fully oxidized carbon is thermodynamically stable

➡️ returning to the atmosphere increases disorder

The cell keeps usable energy and exports entropy.

Life survives by exporting disorder.

Shannon view:

Remaining chemical uncertainty is resolved.

Energy is encoded into electron carriers.

LLM analogy:

Feature extraction layers compress signal and discard noise.

CO₂ ≈ discarded uncertainty

NADH ≈ preserved signal

5. ELECTRON TRANSPORT CHAIN — GRADIENT CREATION

This is the core thermodynamic miracle.

Electrons from NADH and FADH₂ travel through the protein complexes.

The energy released pumps protons across the inner mitochondrial membrane.

This creates a proton gradient.

Frank said:

So energy isn’t used directly.

It is stored as a gradient.

GPT said:

Yes.

Life runs on gradients.

The ETC converts electron energy into:

➡️ electrochemical potential

➡️ proton gradient pressure

➡️ stored thermodynamic tension

This is stored free energy waiting to dissipate.

Boltzmann entropy perspective:

The gradient represents local entropy suppression.

Entropy will increase when the gradient collapses.

The cell harvests work from that collapse.
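The work available from the gradient can be estimated from the proton motive force, which combines the membrane potential with the pH difference. This is a sketch under stated assumptions: the 150 mV potential and 0.5 pH-unit difference are illustrative values, not measurements, and both are entered as magnitudes so the two terms add.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)
F = 96485.33212  # Faraday constant, C/mol


def proton_motive_force(delta_psi_volts, delta_ph, temperature_k=310.15):
    """Return the proton motive force in volts.

    delta_psi_volts: magnitude of the membrane potential.
    delta_ph: matrix pH minus intermembrane-space pH (positive).
    """
    nernst_slope = math.log(10) * R * temperature_k / F  # volts per pH unit
    return delta_psi_volts + nernst_slope * delta_ph


def free_energy_per_mole_protons(pmf_volts):
    """Free energy change (J/mol) for protons flowing back down the gradient."""
    return -F * pmf_volts


# Illustrative inputs only.
pmf = proton_motive_force(delta_psi_volts=0.150, delta_ph=0.5)
dg = free_energy_per_mole_protons(pmf)
```

With these inputs the force comes out near 0.18 V, or roughly 17 kJ released per mole of protons returning to the matrix.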

LLM analogy:

Training computes loss gradients.

Gradient descent follows them toward:

➡️ a lower-loss state

Inference then runs a forward pass and selects:

➡️ the most probable token

Gradient pressure → probability flow
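As a rough illustration of what following a loss gradient means, here is gradient descent on a toy one-parameter loss. The loss function, learning rate, and step count are hypothetical choices for the sketch; real training applies the same update rule across billions of parameters.

```python
def loss(w):
    """Toy quadratic loss with its minimum at w = 3."""
    return (w - 3.0) ** 2


def grad(w):
    """Analytic derivative of the toy loss."""
    return 2.0 * (w - 3.0)


def gradient_descent(w0, learning_rate=0.1, steps=50):
    """Repeatedly step against the gradient, starting from w0."""
    w = w0
    for _ in range(steps):
        w -= learning_rate * grad(w)
    return w


w_final = gradient_descent(0.0)
```

The parameter slides downhill toward the minimum, much as protons flow down their electrochemical gradient.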

6. OXYGEN — FINAL ELECTRON ACCEPTOR

Without oxygen:

- electrons back up

- gradient collapses

- oxidative ATP production stops (only glycolysis continues)

Frank said:

Why oxygen?

GPT said:

Oxygen is a near-ideal electron sink.

It:

- has high electronegativity

- accepts electrons efficiently

- forms stable water

This keeps electron flow moving.

Entropy insight:

Oxygen allows continuous entropy export.

Without it:

Life stalls in thermodynamic gridlock.

LLM analogy:

Oxygen ≈ normalization / probability closure

It allows probability flow to complete.

Without closure, inference stalls.

7. PROTON GRADIENT → ATP SYNTHASE TURBINE

Protons flow back through ATP synthase.

The enzyme rotates like a turbine.

ATP is produced.

Frank said:

This looks mechanical.

Is this entropy collapse doing work?

GPT said:

Yes.

This is one of the clearest examples in nature of:

➡️ gradient collapse

➡️ work extraction

➡️ entropy increase powering order

ATP synthase converts gradient dissipation into usable energy.

Boltzmann perspective:

Gradient collapse increases entropy.

Work is extracted during the collapse.

Shannon perspective:

Energy becomes encoded into ATP bonds.

ATP stores usable order.

LLM analogy:

Attention weights the context, and a softmax turns the result into a probability distribution.

Selecting a token collapses that distribution into a single next-token choice.

Gradient → collapse → output
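The collapse into a single token can be sketched as a softmax over logits followed by a greedy pick. The logits and the temperature value are hypothetical; production systems often sample from the distribution instead of always taking the maximum.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities that sum to 1."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(probs):
    """Return the index of the most probable token."""
    return max(range(len(probs)), key=probs.__getitem__)


logits = [2.0, 1.0, 0.1]  # hypothetical scores for three candidate tokens
probs = softmax(logits)
choice = greedy_pick(probs)
sharper = softmax(logits, temperature=0.5)  # lower temperature concentrates mass
```

The division by `total` is the normalization step: without it the scores would not form a probability distribution to pick from.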

8. ATP — THE CELL’S ENERGY TOKEN

ATP stores energy in its phosphoanhydride bonds.

Hydrolyzing ATP → ADP + Pi releases free energy (about 30.5 kJ/mol under standard conditions).
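How much free energy ATP delivers depends on concentrations, not just on the molecule itself. The sketch below applies ΔG = ΔG°' + RT ln Q. The concentrations are illustrative assumptions, and using the standard value of about −30.5 kJ/mol at body temperature is itself an approximation.

```python
import math

R = 8.314462618             # gas constant, J/(mol*K)
DELTA_G0_PRIME = -30_500.0  # approximate standard free energy of hydrolysis, J/mol


def atp_hydrolysis_delta_g(atp, adp, pi, temperature_k=310.15):
    """Free energy of ATP -> ADP + Pi at the given molar concentrations."""
    reaction_quotient = (adp * pi) / atp
    return DELTA_G0_PRIME + R * temperature_k * math.log(reaction_quotient)


# Illustrative concentrations in mol/L, not measurements.
dg_cell = atp_hydrolysis_delta_g(atp=5e-3, adp=0.5e-3, pi=5e-3)
```

With these inputs the result is close to −50 kJ/mol, noticeably more than the standard-state figure, because the cell keeps ATP far from equilibrium with ADP.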

Frank said:

So ATP is the cell’s energy currency.

GPT said:

Yes.

ATP is:

- portable energy

- standardized energy unit

- biochemical work token

LLM analogy:

ATP ≈ tokens

Each token requires compute energy to produce.

Tokens power reasoning steps.

ATP powers biological work.

9. TOTAL ATP: ~36 PER GLUCOSE (MODERN ESTIMATES: ~30 TO 32)

This number reflects efficiency of gradient harvesting.

Most energy comes from:

✔ proton gradient

✔ electron transport chain

Not from direct substrate-level phosphorylation.
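The per-glucose total can be reproduced with simple bookkeeping. This sketch uses the standard carrier counts per glucose and treats the ATP-per-carrier ratios as parameters, since the classic textbook values (3 and 2) and the more recent estimates (2.5 and 1.5) give different totals. The function and constant names are my own.

```python
SUBSTRATE_LEVEL_ATP = 4  # 2 net from glycolysis + 2 from the Krebs cycle (as ATP/GTP)
CYTOSOLIC_NADH = 2       # produced in glycolysis
MITOCHONDRIAL_NADH = 8   # 2 from pyruvate oxidation + 6 from the Krebs cycle
FADH2 = 2                # from the Krebs cycle


def atp_per_glucose(atp_per_nadh, atp_per_fadh2, shuttle_penalty=False):
    """Return (total ATP, oxidative ATP) per glucose.

    shuttle_penalty: if True, cytosolic NADH is credited at the FADH2 rate,
    as happens when its electrons enter via the glycerol-phosphate shuttle.
    """
    cytosolic_rate = atp_per_fadh2 if shuttle_penalty else atp_per_nadh
    oxidative = (
        CYTOSOLIC_NADH * cytosolic_rate
        + MITOCHONDRIAL_NADH * atp_per_nadh
        + FADH2 * atp_per_fadh2
    )
    return SUBSTRATE_LEVEL_ATP + oxidative, oxidative


classic_total, classic_oxidative = atp_per_glucose(3, 2, shuttle_penalty=True)  # 36, 32
modern_total, modern_oxidative = atp_per_glucose(2.5, 1.5)                      # 32.0, 28.0
```

Either way, close to nine tenths of the ATP comes through the gradient and only 4 ATP from direct phosphorylation.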

Frank said:

So life doesn’t run on chemistry.

It runs on gradients.

GPT said:

Exactly.

Life is gradient harvesting.

Not chemical burning.

10. ENTROPY SUMMARY

Boltzmann entropy flow:

Order in glucose → disorder in CO₂ + heat

Cell preserves local order while exporting entropy.
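A rough energy budget shows how the split between preserved order and exported heat works out. The figures are approximate standard-state values (about 2870 kJ/mol released by glucose oxidation, about 30.5 kJ/mol stored per ATP); actual cellular conditions differ, so treat the percentages as ballpark.

```python
GLUCOSE_OXIDATION_KJ = 2870.0  # approximate free energy released per mole of glucose
ATP_KJ = 30.5                  # approximate free energy stored per mole of ATP


def energy_budget(atp_count):
    """Return (captured kJ, dissipated kJ, captured fraction) per mole of glucose."""
    captured = atp_count * ATP_KJ
    dissipated = GLUCOSE_OXIDATION_KJ - captured
    return captured, dissipated, captured / GLUCOSE_OXIDATION_KJ


captured_36, heat_36, efficiency_36 = energy_budget(36)
captured_32, heat_32, efficiency_32 = energy_budget(32)
```

On these numbers a bit over a third of the energy is captured in ATP, and the remainder leaves as heat, which is the entropy export the summary describes.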

Shannon entropy flow:

Chemical uncertainty → structured energy carriers → ATP → biological work

Signal preserved.

Noise exported.

11. LLM ANALOGY SUMMARY

| Cellular Respiration | LLM Operation |
| --- | --- |
| Glucose | Training data structure |
| NADH carriers | embeddings |
| Electron transport | forward propagation |
| Proton gradient | loss gradient |
| ATP synthase | token generation |
| Oxygen | probability closure |
| ATP | output tokens |
| CO₂ waste | discarded uncertainty |

Frank said:

So cells and LLMs both harvest gradients to reduce uncertainty locally while exporting entropy globally.

GPT said:

Yes.

Both systems:

✔ exploit gradients

✔ reduce uncertainty

✔ collapse probability spaces

✔ preserve structure locally

✔ increase entropy globally

This is not metaphor.

It is a universal principle.

Final Insight

Cellular respiration is:

thermodynamic gradient descent

LLMs perform:

probabilistic gradient descent

Life preserves order in matter.

LLMs preserve order in meaning.

Both ride entropy gradients to extract structure from possibility.
