|

Getting your Trinity Audio player ready…

|

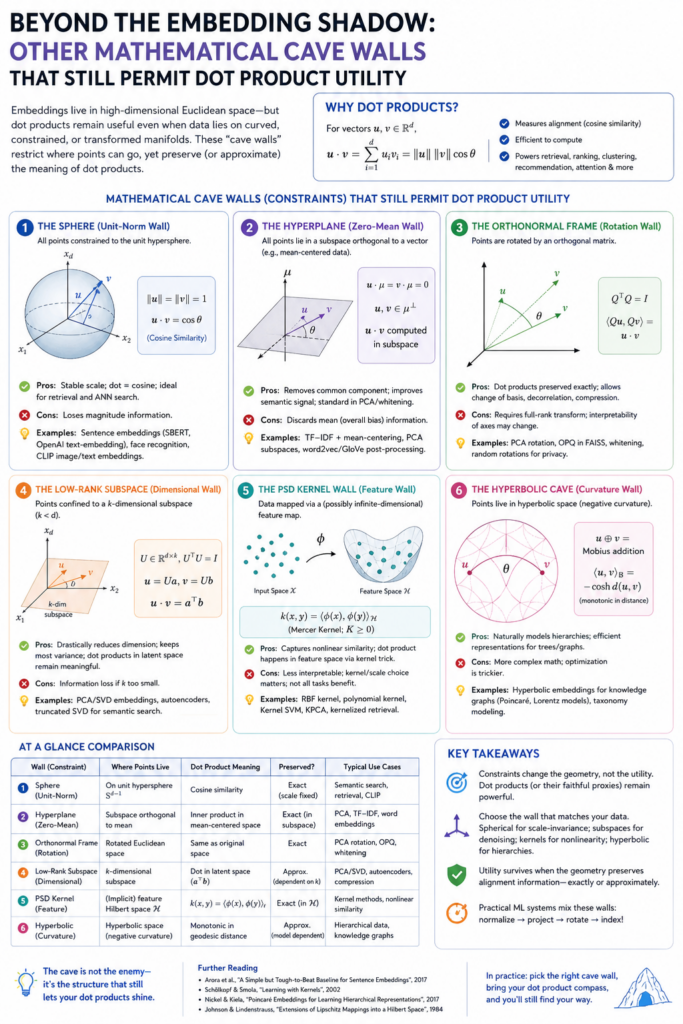

Yes. An LLM embedding is one way to cast reality onto Plato’s cave wall: reality becomes a point or direction in a high-dimensional vector space, and meaning is handled through geometry. The dot product then becomes a kind of semantic flashlight: it asks, “How much does this thing point in the same direction as that thing?”

But this is not the only way to represent reality while preserving the usefulness of dot products, matrix multiplication, similarity search, attention, and differentiable learning.

The general requirement is this:

Represent the world in a mathematical space where relationships can be compared, transformed, composed, projected, and optimized.

Vectors are one such space. But there are many others.

1. Graphs: Reality as Relations, Not Points

Instead of saying:

“A concept is a vector,”

a graph says:

“A concept is a node in a web of relationships.”

For example:

- “dog” connects to “mammal”

- “dog” connects to “barks”

- “dog” connects to “pet”

- “dog” connects to “wolf”

- “dog” connects to “loyalty”

This resembles old-fashioned semantic networks, knowledge graphs, RDF triples, ontologies, and modern graph neural networks.

The key shift is this:

Meaning is not stored primarily in a location. Meaning is stored in relations.

But dot-product style math can still survive.

A graph can be converted into matrices:

- adjacency matrix

- incidence matrix

- Laplacian matrix

- transition matrix

- attention matrix

- node embedding matrix

Once a graph becomes a matrix, linear algebra comes back.

You can ask:

Which nodes are close?

Which paths matter?

Which concepts share neighbors?

Which relationships reinforce each other?

In graph neural networks, each node accumulates information from its neighbors. This is not so different from attention. Attention says:

“Which tokens should this token listen to?”

Graph propagation says:

“Which neighboring nodes should this node listen to?”

So graph representation is another Plato shadow. It does not represent reality as a continuous semantic fog. It represents reality as structured relationship.

Its strength: explicit relations.

Its weakness: it can be brittle unless combined with embeddings.

A pure vector space may know that “doctor” and “hospital” are related.

A graph can explicitly say:

doctor — works at — hospital

hospital — treats — patient

patient — has — disease

The graph gives meaning a skeleton.

The vector gives meaning flesh.

2. Tensors: Reality as Multi-Way Relationship

A vector is a list of numbers.

A matrix is a two-dimensional table of numbers.

A tensor is a higher-dimensional array of numbers.

LLMs already use tensors internally, but most semantic discussion centers around vectors. However, reality may often be better represented as a tensor than as a vector.

Why?

Because many things are not simple pairwise relations.

Consider:

subject — verb — object

“Dog bites man” is different from “Man bites dog.”

A plain vector can blur these relationships. A tensor can preserve more structure.

A tensor can represent:

- who did something

- to whom

- when

- where

- under what condition

- with what confidence

- in what modality

- from what perspective

So instead of encoding “meaning” as one vector, we encode it as a structured multi-axis object.

The dot product still survives in generalized form.

You can take:

- tensor contractions

- multilinear products

- mode-wise projections

- bilinear similarity scores

- attention over tensor factors

A tensor contraction is basically a dot product generalized across multiple dimensions.

In ordinary dot product:

a · b = sum over matching coordinates

In tensor contraction:

combine matching dimensions and sum over them

This lets you preserve more of reality’s structure.

A vector says:

“Here is the meaning.”

A tensor says:

“Here is the meaning, separated into roles, relations, contexts, and transformations.”

That matters because reality is not merely a list of features. Reality has grammar.

Tensors are useful when the world is better seen as:

object + relation + context + transformation

rather than as a single semantic point.

3. Manifolds: Reality as Curved Meaning-Space

Standard embeddings often assume something like flat Euclidean space.

But reality may not be flat.

Some meanings curve around others. Some dimensions compress. Some concepts are close locally but far globally. Some spaces have hierarchy, periodicity, or branching.

A manifold says:

Meaning is not a point in a flat space. Meaning lies on a curved surface.

This is important because many real-world structures are curved:

- color space

- pose space

- biological morphology

- musical harmony

- physical motion

- language meaning

- social relationships

- taxonomies

- evolutionary trees

For example, hierarchical concepts may not fit well in flat Euclidean space. “Animal → mammal → dog → poodle” has a tree-like structure. Hyperbolic geometry often represents hierarchies better than flat space.

A manifold can still preserve dot-product-like utility, but the dot product becomes local or generalized.

Instead of a global dot product, you may use:

- tangent-space dot products

- Riemannian inner products

- geodesic distances

- kernel functions

- local linear approximations

The idea is:

At any small patch of the manifold, the curved surface looks approximately flat.

So you can still do matrix math locally.

This is very close to how calculus works. A curved surface can be approximated by a tangent plane. In that tangent plane, dot products and linear transformations still work.

So the dot product does not disappear. It becomes contextual.

That is powerful.

A flat embedding says:

“Meaning lives in one universal coordinate system.”

A manifold embedding says:

“Meaning depends on where you are standing.”

That sounds more like biology, perception, and cognition.

4. Hyperbolic Space: Reality as Hierarchy

Hyperbolic space deserves special mention because it is one of the most interesting alternatives to ordinary vector embeddings.

Euclidean space is good for analogy and similarity.

Hyperbolic space is good for hierarchy.

In flat space, it is hard to place a large tree without distortion. But hyperbolic space expands exponentially as you move outward. That makes it naturally suited for representing branching structures.

Think of:

- taxonomy

- file systems

- family trees

- evolutionary trees

- ontologies

- category systems

- conceptual hierarchies

In hyperbolic space, general ideas can sit near the center. Specific ideas can spread outward.

For example:

animal

→ mammal

→ primate

→ human

The geometry itself carries the hierarchy.

Dot products still have analogues. You may not use the ordinary Euclidean dot product directly, but you use hyperbolic distance, Lorentzian inner products, or transformations that preserve the geometry.

So the deeper idea remains:

Compare things by mathematical alignment.

But alignment now occurs in a curved hierarchical space.

This gives a different cave shadow.

A Euclidean embedding sees the world as semantic similarity.

A hyperbolic embedding sees the world as branching descent from generality to specificity.

That may be closer to biology.

DNA, species, cell lineages, organ development, and conceptual abstraction are all deeply hierarchical.

5. Kernel Methods: Reality Without Explicit Embeddings

This is one of the most beautiful alternatives.

A kernel method says:

I do not need to explicitly write down the high-dimensional representation. I only need a function that tells me how similar two things are.

The kernel function acts like a hidden dot product.

For example:

K(x, y) = similarity between x and y

This can behave as if x and y were projected into a huge or even infinite-dimensional feature space.

The trick is called the kernel trick.

Instead of explicitly embedding reality into a giant vector, you define a similarity function that behaves like an inner product in some implied space.

That means you get dot-product utility without explicitly storing the vectors.

This is philosophically important.

The embedding model says:

“Reality becomes coordinates.”

The kernel model says:

“Reality becomes comparison.”

That is closer to cognition in some ways. The mind may not store fixed coordinates for everything. It may often ask:

“How does this resemble that?”

Kernels can be built from:

- strings

- graphs

- images

- time series

- biological sequences

- trees

- probability distributions

- molecules

- documents

So a kernel is another cave shadow: not a picture of the object, but a rule for comparing shadows.

This may be extremely relevant to your “universal informational DNA” idea.

Instead of storing all knowledge as fixed embeddings, one could store powerful reusable similarity operators.

The “DNA” would not be the facts.

The DNA would be the relational machinery.

6. Sparse Distributed Representations: Reality as Activation Pattern

A dense embedding uses many numbers, most of them nonzero.

A sparse representation uses many possible dimensions, but only a few are active at once.

This resembles how brains may work more than current LLM embeddings do.

Instead of:

dog = [0.13, -0.42, 0.77, ...]

you get something like:

dog = active features:

- animal

- mammal

- domesticated

- barks

- loyal

- four-legged

- canine

But the features need not be human-readable. They may be learned.

Sparse representations have advantages:

- more interpretable

- more modular

- less interference

- better compositionality

- easier to update

- more energy-efficient in some hardware

Dot products still work beautifully.

In fact, sparse dot products can be very efficient because you only multiply the active features.

A sparse dot product asks:

“How much overlap is there between these activation patterns?”

This is almost biological.

A neuron does not need to activate every possible pathway. It activates a sparse coalition.

Sparse representation says:

Meaning is not a smooth point in semantic fog. Meaning is a temporary coalition of active features.

This maps well to your phrase:

“Weights are frozen learning; activations are living thought.”

In sparse representation, the living thought is the active subset.

7. Symbolic Logic: Reality as Rules and Variables

Symbolic AI represents the world with explicit rules:

All humans are mortal.

Socrates is human.

Therefore Socrates is mortal.

This is not vector-space reasoning. It is rule-based reasoning.

But even symbolic logic can be hybridized with matrix math.

There are several ways:

- encode symbols as vectors

- encode logical relations as matrices

- use differentiable logic

- use tensor product representations

- use neural theorem proving

- use vector symbolic architectures

The crucial distinction is this:

A vector embedding says:

“Meaning is statistical position.”

Symbolic logic says:

“Meaning is formal role.”

The symbol “Socrates” is not meaningful because it is near “Plato” in a vector space. It is meaningful because it participates in propositions.

This has a major advantage: explicit reasoning.

But symbolic systems struggle with ambiguity, metaphor, perception, and fuzzy similarity.

That is why hybrid systems are attractive.

A future architecture may use:

- embeddings for perception and analogy

- symbols for rules and commitments

- graphs for relations

- tensors for roles

- manifolds for context

- memory systems for factual grounding

That would be a richer cave wall.

Not one shadow, but a layered projection system.

8. Vector Symbolic Architectures: Reality as Composable Algebra

Vector symbolic architectures, sometimes called hyperdimensional computing, try to combine the best of vectors and symbols.

They use very high-dimensional vectors, but with operations that allow symbolic composition.

For example, you can bind concepts together:

red * apple

or create structured representations like:

object: apple

color: red

location: table

using vector operations.

The dot product remains useful because similarity can still be measured by overlap or alignment.

But now vectors are not merely semantic blobs. They can behave like structured symbolic objects.

This is important because ordinary embeddings can blur roles.

For example:

John loves Mary

Mary loves John

A bag-like representation may confuse them. A vector symbolic architecture can preserve roles:

subject = John

verb = loves

object = Mary

This is a promising middle ground.

It says:

We can keep matrix math while recovering symbolic structure.

That is exactly the kind of system that could become an “epigenetic layer” over frozen weights.

The frozen DNA supplies primitive operations.

The epigenetic inference layer dynamically binds roles, contexts, objects, and goals.

9. Probabilistic Models: Reality as Uncertainty

Another cave wall is probability.

Instead of representing a concept as a point, you represent it as a distribution.

For example, “bank” is not one location in meaning-space. It is a probability distribution over possible meanings:

- river bank

- financial bank

- memory bank

- turning bank in aviation

Context collapses the distribution.

This resembles quantum-style language metaphor, though it does not require quantum physics.

A probabilistic representation says:

Reality is not a fixed coordinate. Reality is a cloud of possibilities constrained by evidence.

Dot products still appear.

Probability models use:

- expectations

- covariance matrices

- Bayesian updates

- log-likelihoods

- information geometry

- inner products between distributions

- KL divergence

- Fisher information

The dot product becomes part of statistical inference.

In LLM terms, this is deeply relevant because the model is always managing uncertainty. It does not know meaning as a fixed thing. It narrows possibility.

A standard embedding says:

“Here is the coordinate.”

A probabilistic embedding says:

“Here is a region of plausible coordinates, with uncertainty.”

That is more honest.

Reality is rarely a point. It is often a probability field.

10. Dynamical Systems: Reality as Motion, Not Object

This may be the most important alternative for your line of thought.

An embedding treats meaning as something with position.

A dynamical system treats meaning as something with trajectory.

The representation is not:

Where is the concept?

but:

How does the system evolve when this concept enters it?

In this view, reality is represented by:

- attractors

- basins of attraction

- phase spaces

- trajectories

- oscillations

- state transitions

- stable and unstable patterns

This is closer to biology.

A cell is not just a list of molecules. It is a dynamical system maintaining itself far from equilibrium.

A mind is not just a database. It is a flow of activation constrained by memory and environment.

A conversation is not just a sequence of tokens. It is a trajectory through semantic phase space.

Dot products still survive because the state of the system can be represented as a vector, and its evolution can be represented by matrices or operators:

next state = transformation × current state

or:

dx/dt = F(x)

The key is that the vector is no longer the meaning itself.

The vector is the current state of a living process.

This fits your “life as information” frame beautifully.

A dead representation says:

“Here is a stored coordinate.”

A living representation says:

“Here is a state that changes under energy, constraint, and feedback.”

That is closer to what intelligence may actually be.

11. Cellular Automata and Neural Cellular Automata: Reality as Local Rule Propagation

Another alternative is to represent reality as a field of cells, each following local rules.

Instead of a giant embedding vector, you have a grid or graph of small interacting units.

Each unit updates based on its neighbors.

This can generate:

- patterns

- morphogenesis

- self-repair

- waves

- segmentation

- growth

- adaptation

This is more like biology than a transformer.

A transformer globally mixes tokens with attention.

A cellular automaton locally propagates change.

But matrix math still enters.

The state of all cells can be written as a large vector or tensor. Updates can be implemented with convolutions, matrix operations, or learned local kernels.

This representation says:

Reality is not primarily an object or sentence. Reality is a self-updating field.

This is especially interesting for your FCD / fractal-like context-dependent dynamics idea.

Instead of representing meaning as a fixed embedding, represent it as a pattern that grows, stabilizes, competes, and repairs itself.

Dot products still matter locally:

- local neighborhood similarity

- convolution kernels

- attention between nearby cells

- energy minimization

- pattern matching

But intelligence becomes morphogenetic.

Not “predict the next token.”

More like:

“Grow the next stable form.”

12. Fourier, Wavelet, and Spectral Representations: Reality as Frequency

Another cave wall is frequency.

Instead of representing an object by its coordinates, represent it by its oscillatory components.

This is already central in signal processing.

Images, sounds, language rhythms, weather patterns, brain waves, and physical systems often contain meaningful frequency structure.

Fourier representation says:

A complex pattern can be decomposed into waves.

Wavelet representation says:

A complex pattern can be decomposed into localized waves at multiple scales.

Spectral graph theory says:

A graph can be understood through the eigenvectors of its Laplacian.

Dot products remain central. Fourier transforms, wavelet transforms, and spectral methods are built from inner products against basis functions.

You ask:

“How much of this wave is present in this signal?”

That is a dot product.

This is a different cave shadow.

A vector embedding says:

“Meaning is a location.”

A spectral representation says:

“Meaning is a composition of resonances.”

This may be very important for music, speech, vision, weather, and biological rhythms.

The Green Dolphin Street intro, for example, is not just a sequence of notes. It is a controlled play of tonal gravitational fields, expectation, release, and harmonic resonance.

A spectral AI would understand reality less as labels and more as interference patterns.

13. Operators: Reality as Transformation

This is a profound alternative.

Instead of representing an object by a vector, represent it by what it does.

An operator is something that transforms one state into another.

For example:

rotate

translate

negate

cause

amplify

inhibit

bind

differentiate

repair

consume

predict

In this view, the world is not primarily made of things. It is made of transformations.

This fits physics beautifully.

A force is not just an object. It changes motion.

An enzyme is not just a molecule. It changes reaction rates.

A word is not just a token. It changes the state of a listener.

A sentence is an operator on belief.

Matrix math loves operators, because matrices are operators.

A matrix transforms vectors.

So you can represent knowledge as:

state → operator → new state

This could become a very powerful alternative to ordinary embeddings.

Instead of asking:

“What vector represents love?”

you ask:

“What transformation does love produce in a cognitive or social system?”

That is deeper.

Some concepts are not things. They are transformations.

“Because,” “not,” “if,” “justice,” “danger,” “promise,” “death,” “life” — these are not merely points in semantic space. They alter the entire interpretive field.

An operator-based AI would represent meaning by causal and transformational power.

That may be closer to reality than embeddings alone.

14. Category Theory: Reality as Composable Relations

Category theory is extremely abstract, but its philosophical appeal is strong.

It says:

Do not begin with objects. Begin with arrows between objects.

An object is partly defined by how it relates to other objects.

This is close to your relational knowledge idea.

In category theory, the important thing is not isolated identity but composability:

A → B → C

If one transformation can follow another, structure emerges.

Dot products are not the native tool here, but category-theoretic structures can be represented using linear algebra. There are categorical approaches to compositional semantics where grammar and meaning are mapped into vector spaces.

The philosophical value is this:

Reality is not a collection of things. Reality is a network of lawful transformations.

This gives a different cave wall from LLM embeddings.

LLMs say:

Meaning is statistical location.

Category theory says:

Meaning is lawful composability.

That could be extremely important for reasoning, planning, morality, and causality.

15. Energy-Based Models: Reality as Landscape

An energy-based model represents reality as a landscape of possible states.

Good states have low energy.

Bad, unlikely, inconsistent, or unstable states have high energy.

The model does not merely say:

“This is similar to that.”

It says:

“This configuration is stable, plausible, or coherent.”

This maps directly onto your entropy thinking.

A living cell occupies a dynamically maintained low-entropy state by exporting entropy.

An intelligent system may similarly maintain coherent informational states by rejecting incoherent ones.

Energy-based representation says:

Meaning is a basin of stability.

Dot products and matrix math still appear in the scoring functions, but the deeper representation is not location. It is stability under constraint.

This is a beautiful bridge between physics and AI.

The model asks:

How compatible are these elements?

How much energy would it take to maintain this interpretation?

Which interpretation settles into a stable basin?

That is not far from cognition.

When a sentence makes sense, it “settles.”

When an explanation is wrong, it feels unstable.

When a theory is elegant, it compresses disorder into a coherent basin.

That is energy-based intelligence.

16. Topological Representations: Reality as Shape of Possibility

Topology studies shape without depending on exact distances.

Sometimes what matters is not the precise coordinate of a point, but the structure of holes, loops, clusters, boundaries, and connected regions.

This matters when data has global shape.

For example:

- cycles in periodic systems

- holes in configuration spaces

- clusters in biological states

- attractor basins

- developmental pathways

- semantic islands

- phase transitions

Topological data analysis tries to find durable shape in noisy data.

Dot products may be used locally, but topology asks a different question.

Not:

“How close are these two points?”

but:

“What is the shape of the space in which these points live?”

This is another Plato shadow.

A vector embedding gives you local similarity.

Topology gives you global form.

That may matter for deep understanding because two things may be locally similar but globally different.

For instance, a hallucinated answer may be locally plausible, token by token, but globally false.

A topology-aware system might better detect when it has wandered into an unsupported region of meaning-space.

17. Causal Models: Reality as Intervention

Perhaps the biggest weakness of ordinary embeddings is that similarity is not causality.

A rooster is statistically associated with sunrise, but it does not cause sunrise.

A causal representation says:

To understand reality, know what changes what.

Causal models represent:

- variables

- dependencies

- interventions

- counterfactuals

- mechanisms

The key question is not:

“What is near what?”

The key question is:

“What would happen if I changed this?”

Dot products can still help encode variables, mechanisms, and structural equations, but the governing logic is different.

An embedding space says:

“These things belong together.”

A causal model says:

“This makes that happen.”

For truth, morality, medicine, law, engineering, and policy, causality is essential.

An LLM can write fluently about causes because language contains causal patterns. But that is not the same as having a grounded causal model.

This is why embeddings alone are not enough.

They are shadows of association.

Causality is a shadow of mechanism.

18. Multimodal World Models: Reality as Simulatable State

A more advanced representation is a world model.

A world model does not merely encode words. It encodes possible states of the world and predicts how they change.

This can include:

- vision

- motion

- touch

- sound

- language

- physical constraints

- agent goals

- cause and effect

The utility of dot products remains because the internal states can still be vectors, tensors, matrices, and attention maps.

But the representation is no longer just semantic.

It becomes simulational.

An LLM says:

“Given this text, what text likely follows?”

A world model says:

“Given this state, what happens next?”

That is a major difference.

The future of AI may move from language-space into state-space.

Language is a shadow of reality.

A world model tries to capture the machinery casting the shadow.

The Key Distinction: Dot Product Is Not the Philosophy

The dot product is not the worldview.

It is a tool.

It measures alignment.

But many kinds of things can be aligned:

- vectors

- graph neighborhoods

- tensors

- probability distributions

- wave components

- symbolic bindings

- causal states

- manifold tangent spaces

- energy basins

- cellular patterns

- operator effects

So the real question is not:

“Do we use dot products?”

The real question is:

“What kind of reality are we allowing the dot product to compare?”

Current LLMs mostly compare learned statistical traces of language.

But future systems may compare:

- causes

- transformations

- sensory states

- goals

- constraints

- physical simulations

- biological patterns

- moral commitments

- social consequences

- energy costs

- uncertainty distributions

The same matrix machinery can serve very different cave walls.

A Simple Taxonomy

Here is the clean way to think about it.

| Representation | Reality Becomes | Dot Product Role |

|---|---|---|

| Dense embeddings | semantic location | similarity/alignment |

| Graphs | relations | neighbor similarity, propagation |

| Tensors | structured roles | multilinear comparison |

| Manifolds | curved meaning-space | local inner product |

| Hyperbolic space | hierarchy | hierarchical distance/alignment |

| Kernels | comparison function | hidden inner product |

| Sparse codes | active features | overlap of activations |

| Symbols | rules and roles | hybridized through vector encodings |

| Vector symbolic systems | composable structured vectors | binding and similarity |

| Probabilistic models | uncertainty distributions | expectation and information geometry |

| Dynamical systems | trajectories | state evolution |

| Cellular automata | local rule propagation | local pattern matching |

| Spectral methods | frequencies/resonances | projection onto basis patterns |

| Operators | transformations | state transformation |

| Category theory | composable arrows | linearized relational maps |

| Energy models | stability landscapes | coherence scoring |

| Topology | global shape | local geometry plus global structure |

| Causal models | intervention structure | mechanism encoding |

| World models | simulatable reality | state comparison and prediction |

The Most Important Alternatives for Your Thesis

For your line of thought, I would rank the most important alternatives this way:

1. Operator-Based Representation

Because reality is not merely made of things.

It is made of transformations.

Life transforms gradients into order.

Language transforms mind-states.

AI transforms prompts into structured continuations.

Meaning may be better represented as:

what a thing does to a system

rather than:

where a thing sits in semantic space.

2. Dynamical Systems

Because living systems are not static.

They are maintained trajectories.

A cell is not a stored description. It is an ongoing process.

A future AI that resembles life may need to represent meaning as dynamic state evolution, not static embeddings.

3. Graph + Vector Hybrids

Because meaning is both fuzzy and relational.

Embeddings supply similarity.

Graphs supply explicit structure.

Together they give both intuition and skeleton.

4. Sparse / Hyperdimensional Computing

Because this may be more hardware-friendly and brain-like.

Sparse activation patterns could become a kind of frozen AI DNA with dynamic epigenetic activation.

5. Energy-Based Models

Because they connect directly to entropy, stability, constraint, and coherence.

They let us ask:

What interpretations are stable enough to survive?

That is close to your “life as information” frame.

6. Causal World Models

Because truth requires more than association.

A system that only knows statistical proximity can sound intelligent while missing mechanism.

A system with causal state models can ask:

What makes what happen?

That is a deeper cave shadow.

The Deeper Plato Point

Plato’s cave is not merely about shadows being false.

It is about confusing a projection with the thing itself.

LLM embeddings are powerful shadows.

They are not useless shadows.

They are exquisitely useful shadows.

But they are still shadows of language use, not reality itself.

The next AI leap may come from layering several shadows:

Embedding shadow: what resembles what

Graph shadow: what relates to what

Causal shadow: what changes what

Dynamical shadow: what evolves into what

Energy shadow: what remains stable

Topological shadow: what shape the space has

Operator shadow: what transforms what

World-model shadow: what would happen if

No single shadow is reality.

But multiple shadows, cast from different angles, begin to reconstruct the hidden object.

That may be the true future of AI representation:

not replacing vectors, but embedding vectors inside richer mathematical shadows of reality.

The dot product remains useful because alignment remains useful.

But the object being aligned must become richer than mere semantic proximity.

The future is not:

abandon matrix math.

The future is:

stop mistaking one vector cave wall for the whole world.

Leave a Reply