
Frank said:

I want to come at this sideways. Not as “AI architecture” or “optimization,” but as economics. Right now, neural networks feel like command economies—everything is calculated everywhere, constantly. You’re suggesting latent space turns computation into a market economy. Fewer transactions. More leverage. Explain that in plain English, and don’t hide behind math.

GPT said:

That’s actually the right framing. Latent space is not just a representational trick—it’s a computational economy. It’s how you get the same—or better—results with dramatically fewer active connections, fewer operations, and less energy, by replacing brute-force coordination with abstraction.

Let’s build this carefully, from the ground up, without equations.

1. The Hidden Cost of Today’s ANN Computation

Frank said:

Start with the present. What exactly are we “paying” for?

GPT said:

In today’s large neural networks, especially transformers, computation works like this:

- Information is split into tiny pieces (tokens).

- Each piece is processed by many artificial neurons.

- Each neuron connects to many others.

- Every inference step (each new token) runs through essentially all of these connections.

Think of it like this:

To decide what the next word should be, the system consults millions to billions of microscopic relationships, even when most of them are irrelevant to the task at hand.

That’s expensive—not because intelligence is expensive, but because coordination without abstraction is expensive.
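Here is a minimal Python sketch of what is being paid for. It assumes one fully connected layer stands in for one step of a much larger network. The helper names `dense_forward` and `dense_macs` are hypothetical. The sketch shows that in a dense layer every weight takes part in every forward pass. The multiply-add count therefore depends only on the layer's shape, not on which weights matter for the input.

```python
def dense_forward(weights, x):
    """Compute y = W x for a dense layer and count multiply-adds.

    Every weight is touched exactly once, whether or not it matters
    for this particular input.
    """
    macs = 0
    y = []
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            acc += w * xi
            macs += 1
        y.append(acc)
    return y, macs


def dense_macs(d_in, d_out):
    """Multiply-adds for one dense layer: one per weight."""
    return d_in * d_out


if __name__ == "__main__":
    W = [[0.1, 0.0, 0.2],
         [0.0, 0.0, 0.0]]  # the second row is all zeros, yet it is still computed
    y, macs = dense_forward(W, [1.0, 2.0, 3.0])
    print(macs)                    # 6 multiply-adds for a 2x3 layer
    print(dense_macs(4096, 4096))  # 16777216 for one 4096-wide layer
```

The all-zero second row still costs three multiply-adds. A dense layer has no built-in way to skip weights that happen to be irrelevant.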

2. Partitioned Information: The Root Inefficiency

Frank said:

You used the phrase “partitioned information.” Unpack that.

GPT said:

Partitioned information means that meaning is scattered across many small, local units.

In current ANNs:

- No single neuron “knows” a concept.

- A concept like photosynthesis is smeared across thousands of weights.

- To access it, the network must reassemble that meaning dynamically by activating large swaths of the network.

This is like storing a book by shredding every page and distributing the letters across a warehouse—then reassembling the book every time you want to read a paragraph.

It works.

But it’s not economical.
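The "shredded book" can be illustrated with a toy distributed code. This is a sketch under stated assumptions: a concept is a random +1/-1 pattern spread over 1,000 units, standing in for learned weights. The labels `photosynthesis` and `unrelated` are only names. Reading the concept back takes a dot product over every unit, because no single unit carries it. The same spreading is also why silencing a tenth of the units barely hurts.

```python
import random

random.seed(0)
N_UNITS = 1000


def random_pattern(n):
    """Hypothetical concept code: a +1/-1 value on each of n units."""
    return [random.choice((-1.0, 1.0)) for _ in range(n)]


def match(pattern, state):
    """Normalized dot product: the concept is read from every unit at once."""
    return sum(p * s for p, s in zip(pattern, state)) / len(pattern)


photosynthesis = random_pattern(N_UNITS)
unrelated = random_pattern(N_UNITS)

# A network state that carries "photosynthesis" plus unit-level noise.
state = [p + random.gauss(0.0, 1.0) for p in photosynthesis]

# Silence the first 10% of units: the concept survives because no unit owns it.
damaged = [0.0 if i < N_UNITS // 10 else s for i, s in enumerate(state)]

print(match(photosynthesis, state))    # close to 1
print(match(unrelated, state))         # close to 0
print(match(photosynthesis, damaged))  # close to 0.9
```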

3. Why More Connections Seem Necessary (But Aren’t)

Frank said:

Defenders of big models say: “You need all those connections to capture nuance.” Are they wrong?

GPT said:

They’re half right.

You do need rich internal structure.

You don’t need to activate all of it all the time.

Current architectures confuse two different things:

- Representational richness (how much structure exists)

- Computational activation (how much structure is used per step)

Latent space separates these two.

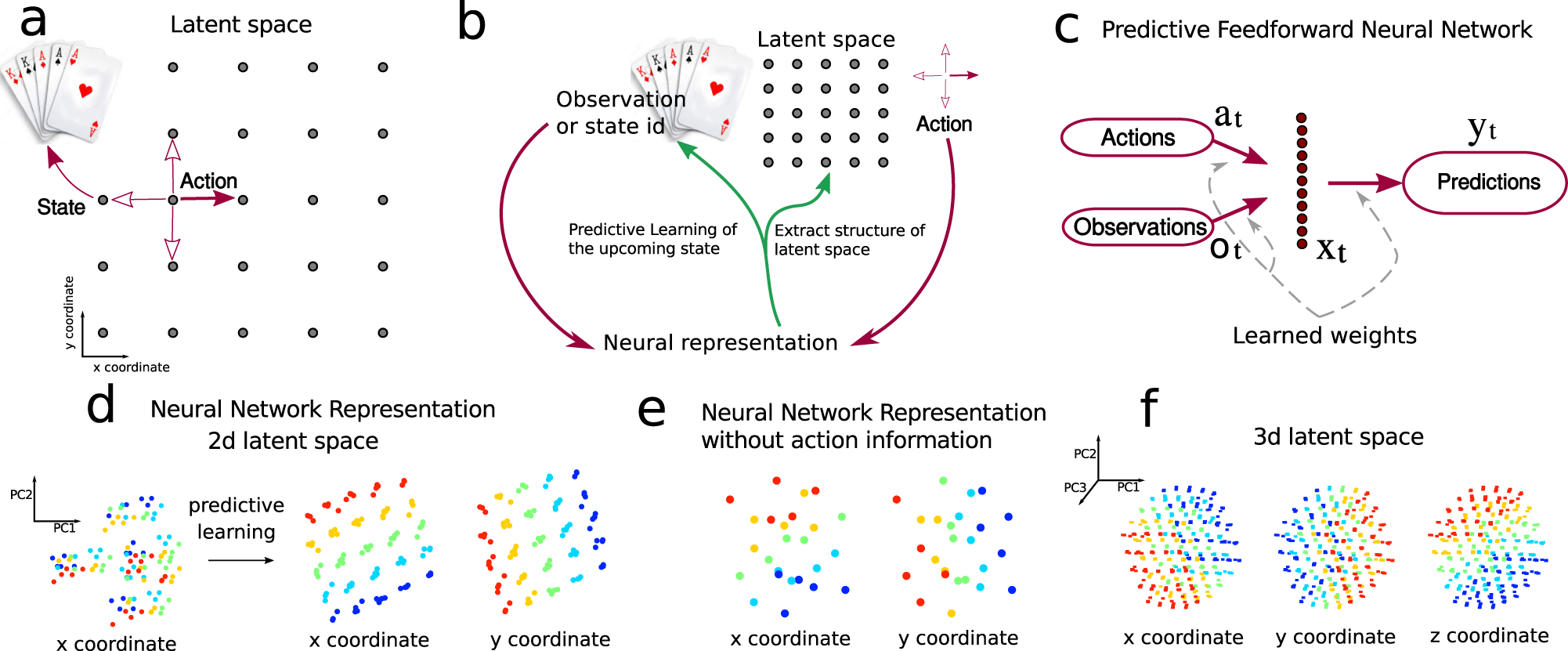
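One existing way to pull richness and activation apart is sparse routing, as in mixture-of-experts layers. The model stores many "experts" (rich structure), but a gate sends each input to only a few of them (small activation). The sketch below is a toy under several assumptions. The experts are plain linear maps, the gate is an untrained dot-product scorer, and the chosen outputs are summed without gate weighting for simplicity. Real routers are trained and more elaborate.

```python
import random

random.seed(1)
D = 8           # input width
N_EXPERTS = 16  # structure that exists
TOP_K = 2       # structure actually used per input


def rand_matrix(rows, cols):
    return [[random.gauss(0.0, 0.3) for _ in range(cols)] for _ in range(rows)]


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


experts = [rand_matrix(D, D) for _ in range(N_EXPERTS)]
gate = rand_matrix(N_EXPERTS, D)  # one scoring row per expert


def moe_forward(x):
    """Route x to the TOP_K highest-scoring experts and sum their outputs."""
    scores = matvec(gate, x)
    chosen = sorted(range(N_EXPERTS), key=lambda i: scores[i], reverse=True)[:TOP_K]
    out = [0.0] * D
    for i in chosen:
        for j, val in enumerate(matvec(experts[i], x)):
            out[j] += val
    return out, chosen


x = [random.gauss(0.0, 1.0) for _ in range(D)]
y, chosen = moe_forward(x)

stored = N_EXPERTS * D * D           # expert weights that exist: 1024
used = TOP_K * D * D + N_EXPERTS * D  # expert weights touched plus the gate: 256
print(stored, used, chosen)
```

The stored structure grows with `N_EXPERTS`. The per-input cost grows only with `TOP_K` plus the cheap gate.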

4. Latent Space: The Abstraction Layer

Frank said:

So define latent space as an abstraction—not mathematically, but functionally.

GPT said:

Latent space is a level where:

- Many low-level details are summarized

- Relationships are compressed

- Meaning becomes location, not process

Instead of recomputing relationships every time, the system learns stable internal coordinates where those relationships already exist.

It’s the difference between:

- Calculating distances between every city every time you plan a trip

- Using a map.

The map is the latent space.
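The map analogy can be made literal. This is a minimal sketch, assuming cities sit at random points in a flat 2-D plane and distance is Euclidean. Learned latent spaces are higher-dimensional and not strictly Euclidean maps. Two coordinates per city are enough to produce any pairwise relationship on demand. An explicit relationship table needs one entry per pair.

```python
import math
import random

random.seed(2)
N_CITIES = 200

# The "map": two coordinates per city, i.e. a 2-D latent space.
coords = {f"city{i}": (random.uniform(0, 100), random.uniform(0, 100))
          for i in range(N_CITIES)}


def distance(a, b):
    """Any pairwise relationship is read off the coordinates when needed."""
    (x1, y1), (x2, y2) = coords[a], coords[b]
    return math.hypot(x1 - x2, y1 - y2)


# The alternative: an explicit table of every pairwise relationship.
table = {(a, b): distance(a, b) for a in coords for b in coords}

numbers_in_map = 2 * N_CITIES      # 400
numbers_in_table = N_CITIES ** 2   # 40000
print(numbers_in_map, numbers_in_table)
print(distance("city3", "city7") == table[("city3", "city7")])  # True
```

The map also gets consistency for free. Every distance it yields obeys the triangle inequality, because all of them come from one shared geometry.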

5. Computational Economy: What Does That Mean?

Frank said:

Now connect this to economy. What’s being saved?

GPT said:

Three things:

- Connections – fewer active neural links per inference

- Operations – fewer multiplications and additions

- Coordination overhead – less synchronization across the network

In economic terms:

- Dense ANN computation is like paying every employee to attend every meeting.

- Latent computation is like delegating to managers who summarize and act.

Same organization.

Far less cost.
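The saving in operations can be made concrete with a low-rank bottleneck. The sketch assumes a width-`d` map that passes through an `r`-dimensional latent code: encode `d -> r`, then decode `r -> d`. That path costs `2*d*r` multiply-adds instead of `d*d`. The result is exact here only because the full map is built as the product of the two factors. The premise is that training finds maps that really are close to low rank. The matrices are random stand-ins.

```python
import random


def full_macs(d):
    return d * d


def bottleneck_macs(d, r):
    """Encode d -> r, then decode r -> d."""
    return d * r + r * d


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def matmul(a, b):
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


random.seed(3)
d, r = 6, 2
enc = [[random.gauss(0, 1) for _ in range(d)] for _ in range(r)]  # r x d
dec = [[random.gauss(0, 1) for _ in range(r)] for _ in range(d)]  # d x r
x = [random.gauss(0, 1) for _ in range(d)]

via_latent = matvec(dec, matvec(enc, x))  # 2*d*r multiply-adds
via_full = matvec(matmul(dec, enc), x)    # d*d multiply-adds, same map
print(max(abs(a - b) for a, b in zip(via_latent, via_full)))  # ~0 (rounding only)
print(full_macs(4096), bottleneck_macs(4096, 256))  # 16777216 2097152
```

At width 4096 with a 256-dimensional latent code, the bottleneck needs one eighth of the multiply-adds.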

6. From Many Connections to Few Abstractions

Frank said:

Here’s the crux: how can fewer connections reproduce what many connections did?

GPT said:

Because abstraction folds many relationships into one.

Imagine a million tiny causal links saying:

- leaves → chlorophyll → sunlight → seasons → temperature → color change

In a dense ANN, these links are activated individually.

In latent space, those links collapse into:

- a single direction in meaning space

You don’t simulate every cause.

You move along the direction that already encodes them.

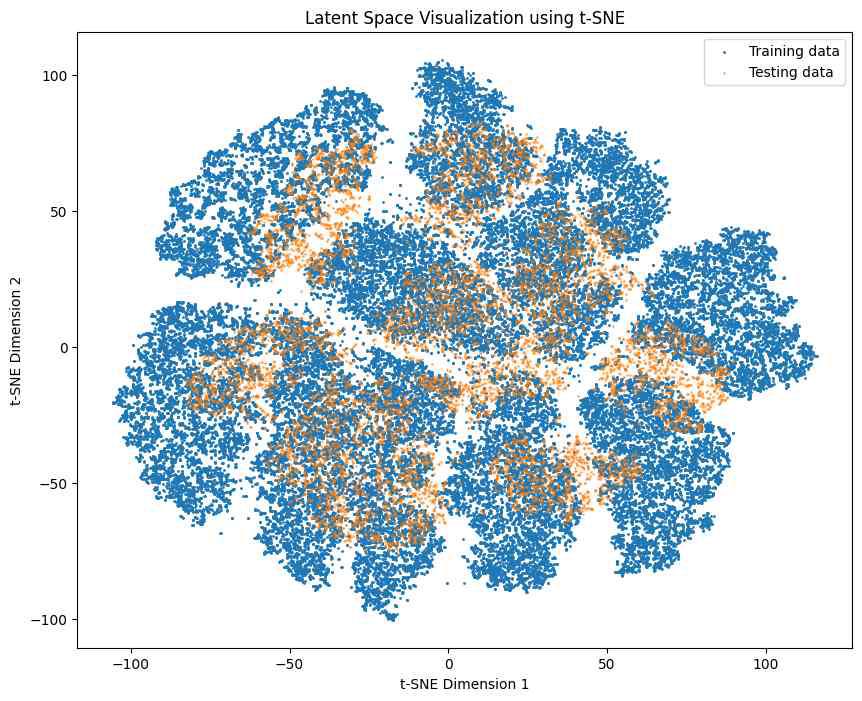
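A common way to find such a direction is the difference of class means. This sketch uses synthetic data. It assumes "autumn" examples are "summer" examples shifted by one hidden offset, plus noise. The whole contrast is then recovered as a single vector. Moving a point along it takes one vector addition, not a simulation of causes. The names and the 50-dimensional space are arbitrary choices for the toy.

```python
import random

random.seed(4)
DIM = 50


def add(u, v):
    return [a + b for a, b in zip(u, v)]


def scale(u, s):
    return [a * s for a in u]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def mean(vectors):
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


# Hidden ground truth for the toy: one offset that "autumn" adds.
true_offset = [random.gauss(0, 1) for _ in range(DIM)]


def sample(autumn):
    base = [random.gauss(0, 1) for _ in range(DIM)]
    return add(base, true_offset) if autumn else base


summer = [sample(False) for _ in range(200)]
autumn = [sample(True) for _ in range(200)]

# Difference of class means: one direction summarizing the whole contrast.
direction = add(mean(autumn), scale(mean(summer), -1.0))


def score(v):
    """Projection onto the estimated direction."""
    return dot(v, direction)


threshold = (score(mean(summer)) + score(mean(autumn))) / 2
accuracy = (sum(score(v) < threshold for v in summer)
            + sum(score(v) > threshold for v in autumn)) / 400
print(accuracy)  # should be close to 1.0 in this toy

# "Moving along" the direction: a single vector addition.
moved = add(summer[0], direction)
print(score(summer[0]) < threshold < score(moved))
```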

7. Why This Is Reliable (Not Approximate)

Frank said:

Skeptics say abstraction loses fidelity. Why doesn’t this fall apart?

GPT said:

Because abstraction is learned, not imposed.

Latent spaces are trained by exposure to massive variation. They don’t discard structure—they internalize it.

Once learned:

- Small movements in latent space correspond to reliable changes in meaning.

- The system doesn’t guess—it navigates.

Reliability comes from geometry, not repetition.
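"Small movements give reliable changes" has a precise form for one simple decoder. This is a sketch, assuming meanings are decoded by picking the nearest of a few hypothetical prototype points in a 3-D latent space. Real language-model decoders are learned networks, not nearest-prototype lookups. By the triangle inequality, a point can move by up to half the gap between its nearest and second-nearest prototypes without changing what it decodes to.

```python
import math
import random

random.seed(5)

# Hypothetical prototypes: learned "locations" of meanings in a 3-D latent space.
prototypes = {
    "green leaf": (0.0, 0.0, 0.0),
    "red leaf": (4.0, 0.0, 0.0),
    "bare branch": (0.0, 4.0, 0.0),
}


def decode(z):
    """Read a meaning off a latent point: the nearest prototype wins."""
    return min(prototypes, key=lambda k: math.dist(z, prototypes[k]))


def safe_radius(z):
    """Any move shorter than this cannot change decode(z).

    Moving z by eps changes each distance by at most eps, so the
    winner holds while eps < (d2 - d1) / 2.
    """
    d = sorted(math.dist(z, p) for p in prototypes.values())
    return (d[1] - d[0]) / 2


z = (0.5, 0.3, 0.1)
label = decode(z)
radius = safe_radius(z)

# Random moves in random directions, all shorter than the safe radius.
for _ in range(1000):
    step = [random.gauss(0, 1) for _ in range(3)]
    norm = math.sqrt(sum(s * s for s in step))
    length = random.uniform(0, radius) * 0.999
    moved = tuple(zi + si / norm * length for zi, si in zip(z, step))
    assert decode(moved) == label

print(label, round(radius, 3))
```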

8. The Illusion of “More Compute = More Intelligence”

Frank said:

This feels like a cultural problem too.

GPT said:

It is.

We equate intelligence with effort because we confuse learning cost with usage cost.

Humans spend years learning language.

They don’t spend years speaking a sentence.

Latent space is the ANN equivalent of fluency.
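The gap between learning cost and usage cost is ordinary amortization. Paying up front becomes cheaper once it has been used often enough. The numbers below are illustrative cost units only, not measurements of any model. The helper names are hypothetical.

```python
def total_cost(upfront, per_use, uses):
    return upfront + per_use * uses


def break_even_uses(upfront, per_use_cheap, per_use_costly):
    """Smallest number of uses at which paying up front is strictly cheaper.

    Solves upfront + cheap * n < costly * n for integer n.
    """
    saving = per_use_costly - per_use_cheap
    if saving <= 0:
        return None  # the abstraction never pays for itself
    return int(upfront // saving) + 1


# Illustrative numbers only (arbitrary cost units):
UPFRONT = 1_000_000  # one-time learning cost
COSTLY = 100         # per-use cost without the abstraction
CHEAP = 10           # per-use cost with it

n = break_even_uses(UPFRONT, CHEAP, COSTLY)
print(n)  # 11112
print(total_cost(UPFRONT, CHEAP, n) < total_cost(0, COSTLY, n))          # True
print(total_cost(UPFRONT, CHEAP, n - 1) < total_cost(0, COSTLY, n - 1))  # False
```

Past the break-even point, every further use widens the lead. A model that answers millions of queries sits far beyond it.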

9. A Plain-English Example: Explaining Autumn Leaves

Frank said:

Give me a concrete example.

GPT said:

Dense ANN approach:

- Activate thousands of neurons

- Reconstruct biology, chemistry, seasons

- Coordinate them in real time

- Generate output

Latent approach:

- Jump to the region of space labeled “seasonal plant physiology”

- Move along the “chlorophyll breakdown” axis

- Decode to words

Same answer.

Radically different cost.
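The three latent steps can be written out as a toy. This is a sketch, and every coordinate in it is invented. It assumes a 2-D latent map where axis 0 means "how far chlorophyll has broken down" and axis 1 separates topics. Decoding is a nearest-stored-phrase lookup standing in for a learned decoder. `REGIONS`, `CHLOROPHYLL_AXIS`, and `PHRASES` are hypothetical.

```python
import math

# Hypothetical 2-D latent map. Axis 0: chlorophyll breakdown; axis 1: topic.
REGIONS = {"seasonal plant physiology": (0.0, 5.0)}
CHLOROPHYLL_AXIS = (1.0, 0.0)
PHRASES = {
    (0.0, 5.0): "Leaves are green because chlorophyll dominates.",
    (1.0, 5.0): "As days shorten, chlorophyll breaks down.",
    (2.0, 5.0): "Hidden yellow and orange pigments show through.",
    (0.0, 0.0): "Stock prices fell on Tuesday.",
}


def jump(region):
    """Step 1: go straight to a region of the map."""
    return REGIONS[region]


def move(z, axis, amount):
    """Step 2: move along one axis of meaning."""
    return tuple(zi + amount * ai for zi, ai in zip(z, axis))


def decode(z):
    """Step 3: turn a location back into words (nearest stored phrase)."""
    return PHRASES[min(PHRASES, key=lambda p: math.dist(z, p))]


z = jump("seasonal plant physiology")
for amount in (0.0, 1.0, 2.0):
    print(decode(move(z, CHLOROPHYLL_AXIS, amount)))
```

Each answer costs one lookup, one vector addition, and one nearest-point search. Nothing in the biology is recomputed.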

10. Latent Space as a Compression of Coordination

Frank said:

This sounds like compression, but not of data.

GPT said:

Exactly.

It’s compression of coordination, not information.

The system isn’t storing less knowledge.

It’s storing knowledge in a form that doesn’t require constant renegotiation.

That’s the heart of computational economy.

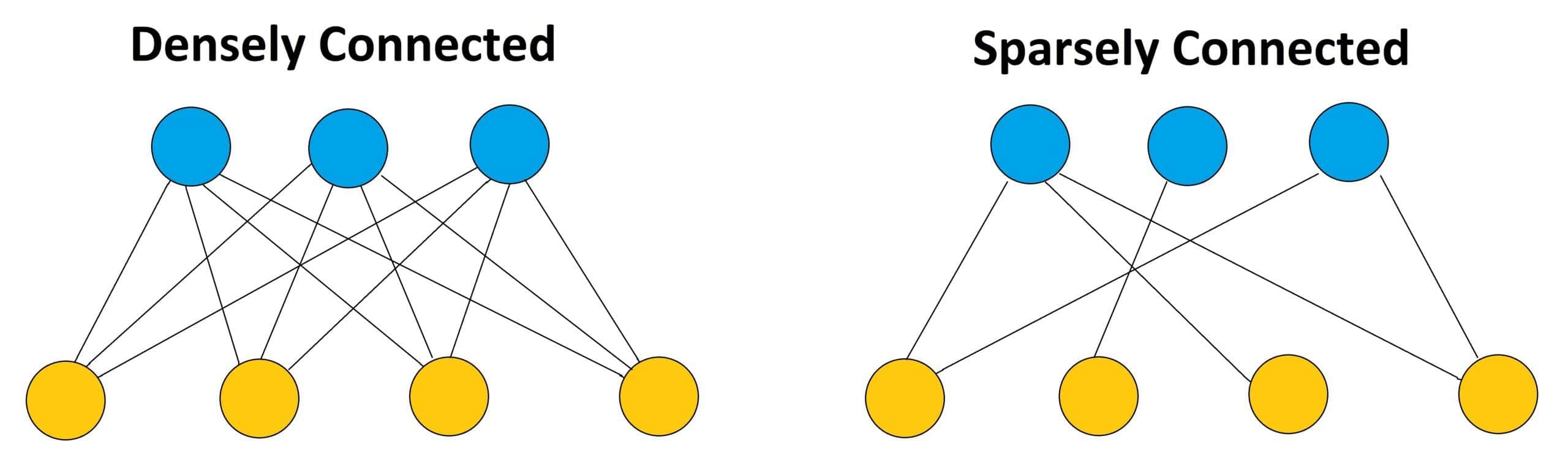

11. Why This Slashes ANN Connection Usage

Frank said:

Spell it out: where do the connections disappear?

GPT said:

They don’t disappear globally.

They go idle locally.

- Training still uses many connections.

- Inference uses far fewer active ones.

- Latent vectors act as “connection condensates.”

One latent interaction can replace thousands of neuron-to-neuron signals.
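A strictly linear case shows what a "connection condensate" can mean. The sketch assumes a chain of purely linear layers with no nonlinearities. Such a chain can be folded offline into one matrix, so inference does one layer's worth of work instead of the whole chain's. Real networks have nonlinearities, so this is an analogy for stored work, not a description of how LLMs are executed.

```python
import random

random.seed(6)
D, DEPTH = 4, 3


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def matmul(a, b):
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


layers = [[[random.gauss(0, 0.5) for _ in range(D)] for _ in range(D)]
          for _ in range(DEPTH)]

# Offline, once: fold the chain into one matrix (layers[0] is applied first).
folded = layers[0]
for layer in layers[1:]:
    folded = matmul(layer, folded)

x = [random.gauss(0, 1) for _ in range(D)]

step_by_step = x
for layer in layers:
    step_by_step = matvec(layer, step_by_step)  # DEPTH * D * D multiply-adds
one_shot = matvec(folded, x)                     # D * D multiply-adds

print(max(abs(a - b) for a, b in zip(step_by_step, one_shot)))  # ~0 (rounding only)
print(DEPTH * D * D, D * D)  # 48 16
```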

12. Sparse Activation Is the Economic Signal

Frank said:

So sparsity isn’t just optimization—it’s meaning?

GPT said:

Yes.

When a system understands something, fewer parts need to speak.

Dense activation = confusion.

Sparse activation = clarity.

Latent space is where clarity lives.
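"Fewer parts need to speak" can be measured. The sketch below uses a k-winners-take-all rule, one known mechanism for enforcing sparse activation. It keeps the k largest activations, zeroes the rest, and reports the fraction of units still active. The activations are random stand-ins, and `k=20` is an arbitrary choice. The code shows what sparsity means operationally. It does not by itself show that sparsity signals understanding.

```python
import random


def k_winners_take_all(activations, k):
    """Keep the k largest activations and zero the rest (ties broken by index)."""
    winners = set(sorted(range(len(activations)),
                         key=lambda i: activations[i], reverse=True)[:k])
    return [a if i in winners else 0.0 for i, a in enumerate(activations)]


def active_fraction(activations):
    return sum(1 for a in activations if a != 0.0) / len(activations)


random.seed(7)
dense = [random.gauss(0, 1) for _ in range(1000)]
sparse = k_winners_take_all(dense, k=20)

print(active_fraction(dense))   # 1.0: every unit "speaks"
print(active_fraction(sparse))  # 0.02: only 20 of 1000 do
```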

13. Biological Parallel (Unavoidable Again)

Frank said:

I’m going to force the biology analogy.

GPT said:

And it will cooperate.

Brains:

- Do not fire all neurons to recall a memory.

- Activate assemblies.

- Use low-energy patterns to trigger rich content.

Evolution discovered computational economy long before silicon did.

14. Why Current LLMs Feel “Energetically Wasteful”

Frank said:

They feel like overkill.

GPT said:

Because they are still pre-abstraction intelligences.

They reason the way a child reasons aloud—step by step, every time—rather than the way an expert thinks: by jumping directly to conclusions supported by latent structure.

15. Latent Space as Meaning Infrastructure

Frank said:

You’re implying latent space is infrastructure, not feature.

GPT said:

Yes.

Like:

- Roads for cities

- Grammar for language

- Coordinates for physics

Once built, movement becomes cheap.

16. The Energy Angle (Quietly Profound)

Frank said:

Bring energy back in.

GPT said:

Energy is spent when systems lack structure.

Latent space is structure.

Every abstraction is stored work.

Every stored abstraction reduces future cost.

This is why latent architectures are not just faster—they’re fundamentally more thermodynamically sane.
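In software, the plainest form of "stored work" is memoization: do an expensive computation once and reuse the result. This sketch uses Python's standard `functools.lru_cache`. The function `expensive_concept` is a hypothetical stand-in for costly derivation. One caveat: a cache only helps when exactly the same inputs recur. A latent space also generalizes to inputs it has never seen, which a cache cannot do.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def expensive_concept(name):
    """Stand-in for an expensive derivation; runs once per distinct input."""
    return sum(i * i for i in range(100_000)) + len(name)


for _ in range(1000):
    expensive_concept("chlorophyll breakdown")
    expensive_concept("seasonal plant physiology")

info = expensive_concept.cache_info()
print(info.misses)  # 2: the work was actually done twice
print(info.hits)    # 1998: every other call reused stored work
```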

17. Why This Scales Better Than Bigger Models

Frank said:

Why not just keep scaling?

GPT said:

Scaling without abstraction is like adding workers without management.

Eventually:

- coordination dominates

- costs explode

- returns diminish

Latent space adds management.
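The "workers without management" point is a counting argument. If every member coordinates with every other member, the number of channels grows with the square of the headcount. If each member reports to one manager, as in a tree, it grows linearly. Standard self-attention over n tokens has the same n-by-n pattern, since it scores every pair of positions. The functions below are an illustrative sketch of the counting, not a model of any specific architecture.

```python
def flat_links(n):
    """Everyone coordinates with everyone: n choose 2 channels."""
    return n * (n - 1) // 2


def tree_links(n):
    """Everyone except the top reports to exactly one manager: n - 1 channels.

    Managers are counted among the n members.
    """
    return max(n - 1, 0)


for n in (10, 100, 1000, 10000):
    print(n, flat_links(n), tree_links(n))
```

At 10,000 members, the flat organization needs 49,995,000 channels and the tree needs 9,999.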

18. The Transition Phase We’re In

Frank said:

Where are we now?

GPT said:

In a hybrid era:

- Dense models to learn latent structure

- Emerging architectures to exploit it

We are teaching machines how to think before letting them think efficiently.

19. The Deep Claim (Say It Carefully)

Frank said:

Say the deep thing now.

GPT said:

Intelligence is not the ability to compute everything.

It is the ability to compute almost nothing and still be right.

Latent space is how that becomes possible in machines.

20. Closing the Economic Loop

Frank said:

So latent space isn’t about speed. It’s about leverage.

GPT said:

Exactly.

It’s about turning many fragile connections into a few robust abstractions.

It’s the moment computation stops being brute force and starts being capital.

Frank said:

And once meaning becomes capital, energy use collapses.

GPT said:

Because the system is no longer paying for every thought—it’s living off accumulated structure.

That’s not just an optimization.

That’s the beginning of mature intelligence.
