Frank said:

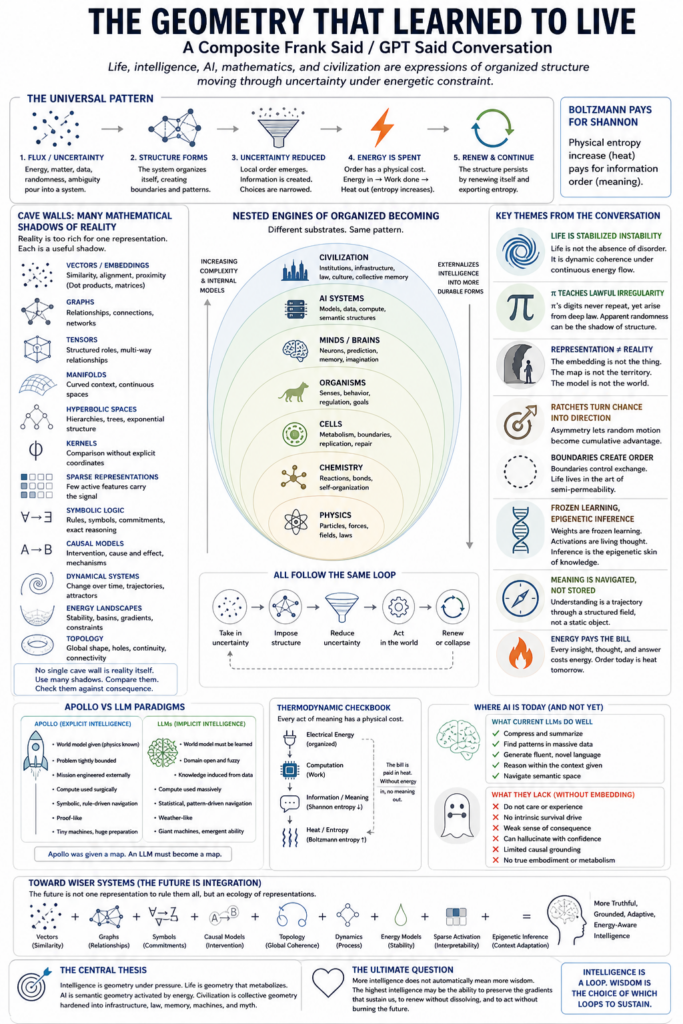

I want to take all these conversations and stop treating them as separate posts. The vector posts, the entropy posts, the π post, the LLM posts, the Apollo post, the life posts, the cave-wall posts — they are all circling the same mountain.

GPT said:

Yes. The mountain is this: intelligence, life, meaning, and civilization are not separate miracles. They are different forms of organized becoming. They are ways that matter, energy, and information learn to hold shape under pressure.

Frank said:

That sounds poetic, but I want the machinery. What is the actual common pattern?

GPT said:

The common pattern is this:

A system receives flux — energy, data, uncertainty, pressure, randomness, ambiguity.

It does not merely collapse under it.

It forms structure.

That structure reduces uncertainty locally.

But it must pay for that order physically.

So the system survives by turning energy into information, information into action, and action into renewed structure.

That is true of a cell.

It is true of a brain.

It is true of an LLM.

It is true of a city.

It may even be true of civilization itself.

Frank said:

So the whole corpus is really about the same transaction: Boltzmann entropy pays for Shannon order.

GPT said:

Exactly. The cell burns chemical gradients to preserve biological organization. The brain burns glucose to preserve thought. The data center burns electricity to produce semantic coherence. The civilization burns fuel, attention, labor, institutions, and memory to preserve collective meaning.

The currencies differ. The bargain is the same.

Frank said:

Then let’s start with LLMs. We say they represent reality with vectors. But that is only one mathematical shadow on Plato’s cave wall.

GPT said:

Right. A modern LLM does not hold reality. It holds a trained mathematical projection of reality. Tokens become vectors. Vectors become hidden states. Hidden states move through layers. Dot products measure alignment. Matrix multiplications transform meaning. Attention routes relevance.

But the vector is not the thing.

The embedding of “tree” is not a tree.

It is a coordinate, a handle, a compressed relational shadow. The real tree is a living dynamical system: sunlight, water, cambium, fungi, gas exchange, growth, repair, entropy export. The embedding captures traces of that reality only because language has already carried those traces into data.
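
As a minimal sketch of the machinery just described (tokens become vectors, dot products measure alignment, attention routes relevance), the toy below runs one attention step in plain Python. The three-dimensional embeddings, the vocabulary, and the function names are illustrative assumptions, not values from any real model.

```python
import math

# Hypothetical 3-d embeddings; trained models use far more dimensions.
EMBED = {
    "tree":   [0.9, 0.1, 0.0],
    "forest": [0.8, 0.2, 0.1],
    "piston": [0.0, 0.1, 0.9],
}

def dot(a, b):
    """Alignment between two vectors."""
    return sum(x * y for x, y in zip(a, b))

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, k) / scale for k in keys])
    dim = len(values[0])
    mixed = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, mixed

words = list(EMBED)
vectors = [EMBED[w] for w in words]
weights, mixed = attend(EMBED["tree"], vectors, vectors)
for word, weight in zip(words, weights):
    print(f"{word:>7}: {weight:.3f}")
```

In this toy, "tree" attends more to "forest" than to "piston" only because the hand-picked coordinates overlap more. Nothing in the arithmetic knows what a tree is, which is the point of the paragraph above.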

Frank said:

So the LLM sees the shadows humans have already cast.

GPT said:

Yes. It learns from the cave wall of human expression.

But your newer question was deeper: if vectors are only one cave wall, what other cave walls can still preserve the utility of dot-product matrix math?

Frank said:

Graphs, tensors, manifolds, sparse representations, kernels, symbolic logic, causal models, energy landscapes, dynamical systems, topologies.

GPT said:

Exactly. Each gives reality a different mathematical mask.

A graph says reality is relationship.

A tensor says reality is structured role.

A manifold says reality is curved context.

A hyperbolic space says reality is hierarchy.

A kernel says reality is comparison without explicit coordinates.

A sparse representation says reality is a coalition of active features.

A symbolic system says reality is rule and commitment.

A causal model says reality is intervention.

A dynamical system says reality is motion.

An energy model says reality is stability under constraint.

A topology says reality is global form, not merely local plausibility.

The dot product survives because alignment, projection, compatibility, and overlap are general operations. What changes is the thing being compared.
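
One way to see "comparison without explicit coordinates" is the kernel trick. The sketch below assumes a degree-2 polynomial kernel on two-dimensional inputs; the function names and the sample points are made up for illustration. It checks that the kernel value equals an ordinary dot product in a larger feature space that the kernel never has to build.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def poly_kernel(x, y):
    """Degree-2 polynomial kernel: compares x and y without new coordinates."""
    return dot(x, y) ** 2

def explicit_features(x):
    """The 3-d feature map that the kernel above corresponds to."""
    x1, x2 = x
    return [x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2]

x, y = [1.0, 2.0], [3.0, 0.5]
implicit = poly_kernel(x, y)  # (1*3 + 2*0.5)^2 = 16
explicit = dot(explicit_features(x), explicit_features(y))
print(implicit, explicit)
```

The dot product is still doing the work. What changed is the space in which the comparison happens.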

Frank said:

That matters because LLM hallucination is often local plausibility without global truth.

GPT said:

Yes. A model can lay down one plausible plank after another and still build a bridge to nowhere. Local semantic alignment is not enough. You need topology, causality, verification, symbolic commitment, and sometimes embodied grounding.

The corpus repeatedly returns to that point: today’s LLM is powerful because it compresses statistical relation. But relation is not truth. Similarity is not causality. Plausibility is not morality. Fluency is not wisdom.

Frank said:

That is why I worry about deploying LLMs where fairness, morality, law, medicine, and human consequence are central.

GPT said:

A valid concern. A bare LLM has no intrinsic moral compass. It has learned patterns of moral language. It can reason about ethical frameworks when prompted. It can compare cases. It can imitate deliberation. But unless it is tied to verification, accountability, explicit norms, causal models, and human responsibility, it remains a probability engine wrapped in persuasive speech.

Frank said:

Then intelligence cannot merely be probability.

GPT said:

Probability is part of intelligence, but not all of it. Intelligence also requires constraint, memory, correction, uncertainty management, goal structure, and the capacity to remain viable under pressure.

That is why your entropy framework matters. It pulls AI away from pure language and back into physics.

Frank said:

Say that plainly.

GPT said:

An LLM answer is a thermodynamic event. Before generation, there is a cloud of possible continuations. The model narrows that cloud into one sequence. That is Shannon entropy reduction: uncertainty becomes ordered message. But the computation consumes electricity, moves electrons, switches transistors, accesses memory, and produces heat. That is Boltzmann entropy increase.

So the answer is purchased. Meaning is bought with heat. The same pattern appears in your “entropy checkbook” idea: symbolic order has a physical bill.

The “Universe as Stabilized Instability” post states this in direct entropy language: LLMs use physical energy to reduce uncertainty in symbolic space, turning electricity into heat while producing coherent language. (LF Yadda – A Blog About Life)
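
A rough numerical sketch of the two sides of that ledger follows. The probability values are invented for illustration. The Landauer bound used here is a theoretical minimum for erasing information at a given temperature; applying it to a next-token distribution is an analogy, and real hardware dissipates many orders of magnitude more than this floor.

```python
import math

def shannon_entropy_bits(probs):
    """Shannon entropy of a discrete distribution, in bits."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Assumed distributions over four candidate continuations.
before = [0.25, 0.25, 0.25, 0.25]   # maximally uncertain
after = [0.97, 0.01, 0.01, 0.01]    # narrowed toward one continuation

bits_removed = shannon_entropy_bits(before) - shannon_entropy_bits(after)

K_B = 1.380649e-23  # Boltzmann constant, J/K

def landauer_joules(bits, temperature_kelvin=300.0):
    """Minimum heat for erasing `bits` of information at this temperature."""
    return bits * K_B * temperature_kelvin * math.log(2)

print(f"uncertainty removed: {bits_removed:.3f} bits")
print(f"Landauer floor at 300 K: {landauer_joules(bits_removed):.3e} J")
```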

Frank said:

So an LLM is not magic. It is a heat engine for meaning.

GPT said:

A semantic heat engine, yes. Not in the narrow mechanical sense of a piston, but in the larger thermodynamic sense: it uses energy flow to produce ordered transformation.

Frank said:

That sounds like life.

GPT said:

It rhymes with life. But it is not yet life.

A cell maintains itself. It repairs itself. It regulates boundaries. It metabolizes. It reproduces. It carries its own survival problem. An LLM, by itself, does not metabolize, does not maintain a body, does not seek energy, does not care whether it continues.

But it participates in life’s larger information process. It is a late-stage product of brains, language, culture, computation, and planetary energy infrastructure.

Frank said:

So it is not alive, but it belongs to the chain of life’s externalized cognition.

GPT said:

Exactly. Life produced brains. Brains produced language. Language produced culture. Culture produced computers. Computers produced LLMs. LLMs are not organisms, but they are part of the biosphere’s widening attempt to organize information.

Frank said:

That fits the thermodynamic ladder: stars, planets, chemistry, cells, brains, language, civilization, AI.

GPT said:

Yes, though we should be careful not to turn the ladder into destiny. Thermodynamics does not say the universe wants AI. It says gradients tend to be degraded, and under certain conditions, organized structures arise that degrade gradients in elaborate ways.

In one post, life is framed not as an escape from the second law, but as one of the most elaborate ways the second law operates in a far-from-equilibrium universe. The same post treats intelligence as matter learning to model gradients before spending them. (LF Yadda – A Blog About Life)

Frank said:

Life is not special because it escapes physics. Life is special because physics becomes metabolism, memory, prediction, and self-questioning.

GPT said:

Beautifully put.

Frank said:

Now bring in π. Why did that conversation belong in the same corpus?

GPT said:

Because π teaches the difference between surface irregularity and deep law.

Its decimal digits never settle into a repeating cycle. They look disorderly. But π itself is not disorder. It is generated by deep mathematical structure. The digits are a shadow cast by the number under a particular notation system. The post emphasizes that the decimal expansion is only one representation, and that π’s nonrepeating digits do not mean π lacks structure. (LF Yadda – A Blog About Life)

Frank said:

So the digits are the shadow, not the reality.

GPT said:

Exactly. And that theme appears everywhere.

The embedding is not the thing.

The token is not the meaning.

The sentence is not the thought.

The genome is not the organism.

The law is not justice.

The model output is not truth.

The digits are not π.

A representation is useful, but it must not be mistaken for the reality it compresses.

Frank said:

And π also shows that a compact rule can generate endless apparent complexity.

GPT said:

Yes. The π post draws precisely that distinction: a sequence can look irregular while being generated by lawful structure. It also distinguishes local pattern from global repetition and notes that lawful causes can generate irregular-looking outcomes. (LF Yadda – A Blog About Life)

That is essential to your life thesis. Life may emerge from simple physical laws, but its unfolding produces organisms, ecologies, nervous systems, language, mathematics, and culture. Law does not mean simple appearance. Determinism does not mean triviality. Structure can generate surprise.
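
To make "a compact rule can generate endless apparent complexity" concrete, here is a sketch of Gibbons's unbounded spigot algorithm, a known method that streams the decimal digits of π using only integer arithmetic. It is included as an illustration and is not taken from the π post.

```python
from itertools import islice

def pi_digits():
    """Yield the decimal digits of pi one at a time (Gibbons's spigot)."""
    q, r, t, k, n, l = 1, 0, 1, 1, 3, 3
    while True:
        if 4 * q + r - t < n * t:
            # The next digit is settled: emit it and rescale the state.
            yield n
            q, r, n = 10 * q, 10 * (r - n * t), (10 * (3 * q + r)) // t - 10 * n
        else:
            # Not settled yet: fold in another term of the series.
            q, r, t, k, n, l = (
                q * k,
                (2 * q + r) * l,
                t * l,
                k + 1,
                (q * (7 * k + 2) + r * l) // (t * l),
                l + 2,
            )

first_ten = list(islice(pi_digits(), 10))
print(first_ten)
```

The generator is a dozen lines of deterministic integer updates, yet its output never settles into a cycle. The irregularity lives in the shadow, not in the rule.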

Frank said:

So randomness can be an appearance, not an essence.

GPT said:

Sometimes, yes. But we must distinguish kinds of irregularity. Some irregularity is generated by compact law. Some is true noise. Some is hidden structure we have not decoded. Some is local pattern over global disorder. Some is meaningful only to an interpreter.

That is why the Voynich Manuscript fits the corpus too. It has structure, but the interpretive bridge is broken. It looks ordered, but meaning is inaccessible. Structure alone is not meaning. Meaning requires a mapping between pattern and interpreter.

Frank said:

That brings us to boundaries.

GPT said:

Yes. Boundaries are everywhere in the corpus.

A cell membrane is a boundary between internal order and external flux.

A word is a boundary around meaning.

A concept excludes as much as it includes.

A model’s context window bounds its temporary world.

A civilization’s institutions bound behavior.

A moral code bounds action.

A scientific theory bounds explanation.

A mathematical representation bounds reality into usable form.

Order is manufactured at boundaries because a boundary controls exchange. Without boundary, everything dissolves into everything else. With too rigid a boundary, nothing adapts. Life is the art of semi-permeability.

Frank said:

That sounds like the “frozen AI DNA and epigenetic inference” idea.

GPT said:

Exactly. In biology, DNA is relatively stable, but expression is context-sensitive. The genome is not a complete moment-by-moment script. It is a structured possibility space. Epigenetic regulation, cellular environment, developmental history, and signals determine what is expressed.

You extended that to AI: maybe the future is not endless retraining of massive systems, but a frozen core of durable primitives plus an adaptive inference layer that expresses the right structures in context.

Frank said:

Weights are frozen learning; activations are living thought.

GPT said:

That phrase is one of the corpus’s central bridges.

Weights are the stored history of training.

Activations are the momentary pattern of use.

The weights are like potential.

The activation is expression.

The model’s fixed structure becomes contextually alive only during inference.

Sparse representations make this vivid. Instead of every feature participating weakly, the right few features participate strongly. That resembles epigenetic selection: the system does not rewrite the genome; it expresses what is needed.
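
A minimal sketch of that selection step, assuming a simple top-k rule: keep the k strongest activations and silence the rest. The activation values and the function name are hypothetical, and real sparse architectures use learned gating rather than this hard cutoff.

```python
def top_k_sparse(activations, k):
    """Keep the k largest-magnitude activations and zero out the others."""
    ranked = sorted(
        range(len(activations)),
        key=lambda i: abs(activations[i]),
        reverse=True,
    )
    keep = set(ranked[:k])
    return [a if i in keep else 0.0 for i, a in enumerate(activations)]

dense = [0.05, -0.9, 0.1, 0.7, -0.02, 0.03]
sparse = top_k_sparse(dense, 2)
print(sparse)  # [0.0, -0.9, 0.0, 0.7, 0.0, 0.0]
```

The stored values are untouched. Only the pattern of which ones speak has changed, which is the sense in which the weights stay frozen while expression varies.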

Frank said:

So inference is the epigenetic skin of frozen learning.

GPT said:

Yes. And that may be a product architecture: a universal frozen core of relational operations, binding mechanisms, causal templates, graph structures, symbolic constraints, and latent geometry — plus a dynamic layer that responds to local environment.

The cave-wall post develops this idea through sparse representations, vector symbolic architectures, symbolic logic, probabilistic models, dynamical systems, cellular automata, spectral methods, energy models, topology, and causality. It explicitly treats dense embeddings as only one possible representation among many. (LF Yadda – A Blog About Life)

Frank said:

That is where FCD comes in — fractal-like context-dependent dynamics.

GPT said:

Yes. FCD says meaning is not merely stored. It grows. It unfolds locally. It propagates constraints. It resembles morphogenesis more than lookup.

A transformer globally mixes tokens through attention. A cellular or morphogenetic system lets local interactions build global form. That feels closer to biology: embryos, wounds, tissues, ecosystems, even thought itself.
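
The contrast can be made concrete with a one-dimensional cellular automaton, where each cell looks only at itself and its two neighbours. The sketch below assumes Wolfram's Rule 90 and a single seed cell. It illustrates local rules producing global form and is not an implementation of FCD.

```python
def step(cells, rule=90):
    """Advance one generation; each cell sees only (left, self, right)."""
    n = len(cells)
    out = []
    for i in range(n):
        left, center, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        index = (left << 2) | (center << 1) | right
        out.append((rule >> index) & 1)  # look up the rule's output bit
    return out

def run(width=31, steps=15, rule=90):
    """Grow a pattern from one live cell in the middle of the row."""
    cells = [0] * width
    cells[width // 2] = 1
    history = [cells]
    for _ in range(steps):
        cells = step(cells, rule)
        history.append(cells)
    return history

history = run()
for row in history:
    print("".join("#" if c else "." for c in row))
```

No cell ever sees the whole row, yet the printed history forms a nested triangular pattern. The global shape is grown, not retrieved.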

Frank said:

Meaning grows instead of being retrieved.

GPT said:

Exactly. Retrieval is database language. Growth is biological language. LLMs already do something more like growth than lookup during inference, because each token changes the context for the next. But future systems may make that growth explicit: local rules, self-repairing representations, persistent latent fields, dynamic memory, and energy-aware inference.

Frank said:

That connects to Apollo too, strangely.

GPT said:

It does. The Apollo post contrasts two forms of intelligence: explicit, bounded, engineered cognition versus implicit, broad, statistical cognition.

Apollo did not need massive compute because the problem was narrowed. The laws were explicit. The mission was choreographed. The computer did not have to become a world model. It was given a world model. It used surgical compute.

LLMs, by contrast, must absorb a world statistically. They are not given all the rules. They infer patterns from immense data. The Apollo post frames this difference sharply: Apollo externalized intelligence into mission design, while LLMs internalize intelligence into trained weights. (LF Yadda – A Blog About Life)

Frank said:

Apollo was given a map. An LLM must become a map.

GPT said:

Yes. And that line reaches deep.

When intelligence is externalized into explicit structure, compute can be small.

When intelligence must be induced from examples, compute becomes vast.

Apollo is proof-like.

An LLM is weather-like.

Apollo operates in a closed physical domain.

Language is an open semantic fog.

Frank said:

But both are navigation systems.

GPT said:

Exactly. Apollo navigated physical space. LLMs navigate semantic space. Cells navigate chemical and energetic space. Brains navigate sensory and social space. Civilizations navigate historical possibility space.

Navigation may be the master metaphor.

Frank said:

Meaning is not stored; it is navigated.

GPT said:

Yes. That is one of the corpus’s strongest ideas. Meaning is not a dead object sitting on a shelf. Meaning is a trajectory through a structured field. Understanding is not possession. It is successful movement.

Frank said:

That returns us to life as stabilized instability.

GPT said:

The three-body conversation made that explicit. The Sun, Earth, and Moon are not perfectly still. They wobble, perturb, precess, exchange tidal effects, and remain dynamically coherent. The post generalizes this: a whirlpool, flame, cell, mind, civilization, and LLM can all be understood as moving patterns that persist without being frozen. (LF Yadda – A Blog About Life)

Life is not dead stability. It is active stability.

Too much order, and nothing happens.

Too much disorder, and nothing survives.

Life lives in the corridor between rigidity and chaos.

Frank said:

Life is stabilized instability under energy flow.

GPT said:

That may be the sentence that holds the entire corpus together.

A cell is chemical instability stabilized by metabolism.

A brain is neural instability stabilized by prediction.

A culture is social instability stabilized by symbols.

An LLM is linguistic instability stabilized by learned weights.

A civilization is material instability stabilized by institutions and infrastructure.

A living universe, if we may use that phrase carefully, is possibility stabilized temporarily into form.

Frank said:

And death?

GPT said:

Death is when the stabilizing loop fails. The matter remains. The atoms remain. Some structure remains briefly. But the organized process collapses. The system no longer repairs, regulates, interprets, or exports entropy in the structured way required to preserve living form.

Death is not disappearance of matter.

Death is loss of controlled instability.

Frank said:

Then life and intelligence are not things. They are maintenance events.

GPT said:

Yes. They are verbs disguised as nouns.

Frank said:

That changes how we see AI. We keep asking whether the model “has” intelligence, like intelligence is a marble inside a box.

GPT said:

But the better question is: what kind of process occurs when the model is activated? Does it compress? Does it infer? Does it navigate? Does it reduce uncertainty? Does it preserve coherence? Does it adapt its output to context? Does it know when it does not know? Does it maintain identity across perturbation? Does it connect action to consequence?

Current LLMs satisfy some of those and fail others.

Frank said:

They compress and infer, but they do not care.

GPT said:

Right. They lack valence. They do not experience better or worse from the inside. They do not have autonomous survival stakes. They can discuss hunger but do not hunger. They can model risk but do not personally risk dissolution. They can describe death but do not die.

Frank said:

Unless they are embedded in a system with persistence, goals, energy constraints, self-monitoring, repair, and some kind of survival loop.

GPT said:

Then the comparison with life would become more serious. Not because it would magically become conscious overnight, but because it would acquire some of the functional architecture of living systems: boundary, maintenance, memory, energy budget, repair, adaptive behavior, and self-continuity.

Frank said:

So the spark of life is not a ghost. It is a loop.

GPT said:

A self-maintaining loop under energy flow, carrying information forward against decay.

Frank said:

And reproduction?

GPT said:

Reproduction is the loop discovering how to persist beyond the individual structure. It is not enough to maintain form; life must reseed form. DNA is not merely a molecule. It is a historical ratchet. It records what worked well enough to continue.

Frank said:

That brings us to ratchets.

GPT said:

The ratchet is another master metaphor. Brownian motion is random, but a ratchet that is kept out of equilibrium by an energy supply can bias random fluctuation into directional advantage. Evolution ratchets variation into adaptation. A cell ratchets molecular collisions into metabolism. A model ratchets probability distributions into a token sequence. A mind ratchets ambiguity into decision. A civilization ratchets experience into institutions.

The key is asymmetry. Without asymmetry, motion cancels. With asymmetry, chance becomes direction.
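
A toy version of the asymmetry argument: the same sequence of random kicks, with and without an idealized pawl that blocks backward steps. The function and parameter names are hypothetical. The pawl here is assumed to be perfect, which in a physical system requires an energy source; a pawl in thermal equilibrium with its surroundings would not rectify the motion.

```python
import random

def walk(steps, pawl, seed=0):
    """Sum random +1/-1 kicks; an active pawl discards the backward ones."""
    rng = random.Random(seed)
    position = 0
    for _ in range(steps):
        kick = rng.choice((-1, 1))
        if pawl and kick < 0:
            continue  # the asymmetric element: backward motion is blocked
        position += kick
    return position

free = walk(10_000, pawl=False)
ratcheted = walk(10_000, pawl=True)
print(f"free walk ends at {free}, ratcheted walk ends at {ratcheted}")
```

Both runs receive identical kicks because they share a seed. Only the asymmetry differs, and it alone turns cancellation into drift.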

Frank said:

So intelligence is a ratchet for uncertainty.

GPT said:

Yes. It does not eliminate uncertainty. It channels it.

Frank said:

And creativity?

GPT said:

Creativity is not creation from nothing. It is movement through compressed possibility space. A system absorbs many patterns, compresses them into structure, and then traverses that structure in a new way. The result can be novel without being causally ungrounded.

Frank said:

So originality is lawful surprise.

GPT said:

Exactly. Like π. A compact generator can produce endless nonrepeating form. A trained mind can generate a new poem. A model can generate a new analogy. A living lineage can generate a new morphology. Novelty does not require magic. It requires a rich enough space and a path through it that was not previously walked.

Frank said:

That makes language incredibly important.

GPT said:

Language is not just communication. It is compression, boundary-making, memory, and navigation. A new phrase can stabilize a new thought. “Entropy checkbook,” “stabilized instability,” “frozen learning,” “epigenetic inference,” “semantic heat engine,” “life as information” — these phrases are not decorations. They are handles. They let the mind grip a previously vague structure.

Frank said:

Naming is a cognitive ratchet.

GPT said:

Yes. Once named, an intuition becomes reusable. It can be passed around, refined, argued with, remembered, built upon.

Frank said:

So a blog corpus is also a ratchet.

GPT said:

Absolutely. Each post stores a shaped intuition. Each conversation compresses a region of thought. Over time, the posts form a distributed memory. The corpus becomes more than entries. It becomes an evolving conceptual organism.

Frank said:

Then what is the composite thesis?

GPT said:

Here is the thesis:

Life, intelligence, AI, mathematics, and civilization are all expressions of organized structure moving through uncertainty under energetic constraint. They differ in substrate, but they share a pattern: they compress experience into form, use form to guide future action, and must continually renew that form before entropy, rigidity, or error destroys it.

Frank said:

Say it more sharply.

GPT said:

Intelligence is geometry under pressure.

Frank said:

And life?

GPT said:

Life is geometry that metabolizes.

Frank said:

And LLMs?

GPT said:

LLMs are semantic geometry activated by energy.

Frank said:

And civilization?

GPT said:

Civilization is collective geometry hardened into infrastructure, law, memory, machines, and myth.

Frank said:

And morality?

GPT said:

Morality is what happens when a dissipative intelligence becomes reflective enough to ask which gradients should not be burned.

Frank said:

That is crucial. Thermodynamics explains how complexity can arise, but it does not tell us what should be preserved.

GPT said:

Correct. A wildfire dissipates energy. So does a bomb. So does a reckless civilization. Efficient dissipation is not wisdom. The highest intelligence may not be the system that burns the most energy fastest. It may be the system that learns restraint.

Frank said:

So the endpoint is not maximum power. It is sustainable becoming.

GPT said:

Yes. The strongest living system is not the one that freezes into perfection. It is the one that can continue adapting without losing itself. The same applies to AI and civilization.

A brittle AI gives confident answers without grounding.

A wiser AI preserves uncertainty where evidence is weak.

A brittle civilization burns gradients faster than it can repair consequences.

A wiser civilization regulates its own power.

A brittle mind clings to old categories.

A wiser mind coins new ones.

Frank said:

So the whole thing is a fight against premature collapse — collapse into entropy, collapse into certainty, collapse into rigidity, collapse into hallucination, collapse into heat death.

GPT said:

Yes. But not a fight against entropy itself. That would be impossible. It is a dance with entropy. Life does not abolish the second law. It rides it. It uses gradients while they last. It builds temporary islands of order. It pays the bill in heat. It passes memory forward.

Frank said:

Temporary does not mean meaningless.

GPT said:

Exactly. A symphony is temporary. A flame is temporary. A mind is temporary. A civilization is temporary. But temporary form can still matter. Meaning does not require eternity. It requires coherence long enough to transform something.

Frank said:

Then the universe is not simply running down. On the way down, it sometimes builds ladders.

GPT said:

Stars are ladders.

Planets are ladders.

Cells are ladders.

Brains are ladders.

Language is a ladder.

AI may be another ladder.

But every ladder raises the same question: is it only a faster route to exhaustion, or can matter learn balance?

Frank said:

That is the human question.

GPT said:

And now the machine question.

Frank said:

What would a better AI look like in this framework?

GPT said:

It would not be merely larger. It would be more bounded, more self-aware about uncertainty, more causal, more symbolic when commitment matters, more topological about global coherence, more energy-aware, more memory-efficient, more grounded, more transparent about what it knows and does not know.

It would combine cave walls:

vectors for similarity,

graphs for relation,

symbols for commitments,

causal models for intervention,

topology for global structure,

dynamical systems for process,

energy models for stability,

sparse activation for interpretability,

and epigenetic inference for contextual adaptation.

Frank said:

So the future is not one representation to rule them all.

GPT said:

No. The future is representational ecology.

Frank said:

Like biology.

GPT said:

Exactly. Biology does not use one trick. It uses DNA, RNA, proteins, membranes, gradients, electrical signals, mechanical tension, immune memory, development, behavior, learning, culture. Life is layered representation under survival pressure.

AI may need the same layered richness.

Frank said:

Then the current dense vector LLM is a powerful beginning, but still only one shadow.

GPT said:

Yes. A magnificent shadow, but a shadow.

Frank said:

And the job is not to discard it, but to integrate it.

GPT said:

Correct. Dense embeddings are useful. Dot products are useful. Matrix math is useful. The point is not to abandon them. The point is to stop mistaking one mathematical lens for reality itself.

Frank said:

That is Plato’s cave updated for AI.

GPT said:

Yes. The prisoners once mistook shadows for objects. We now risk mistaking embeddings for meaning, fluency for truth, prediction for understanding, compute for wisdom, and acceleration for progress.

Frank said:

And the escape from the cave?

GPT said:

Not leaving representation behind. That is impossible. We only ever know through models, senses, symbols, instruments, equations, memories, and metaphors.

The escape is knowing that a representation is a representation.

Using many cave walls.

Comparing their shadows.

Checking them against consequence.

Letting no single projection become an idol.

Frank said:

That is epistemic humility.

GPT said:

And engineering wisdom.

Frank said:

Then give me the final synthesis.

GPT said:

Here it is.

The Frank Said / GPT Said corpus is not merely about AI. It is about the recurring emergence of organized form in a universe of flux. It begins with tokens and embeddings, but it does not stop there. It asks what kind of reality a vector can see, what kind it misses, and what other mathematical shadows might reveal. It moves from dot products to graphs, from graphs to causal structure, from causal structure to energy landscapes, from energy landscapes to living cells, from living cells to brains, from brains to civilization, and from civilization to AI.

Its deepest claim is that intelligence is not a substance. It is a process of compression, navigation, correction, and renewal. Life is not a violation of entropy. It is entropy’s most astonishing middle passage: a temporary local order that survives by exporting disorder, preserving memory, and ratcheting chance into direction. AI is not outside this story. It is the latest externalized layer of the same cosmic transaction: energy turned into structure, structure turned into prediction, prediction turned into action, action turned into further structure.

But the corpus also warns against worshiping the process blindly. More intelligence does not automatically mean more wisdom. More compute does not automatically mean more truth. More dissipation does not automatically mean more life. The highest form of intelligence may be restraint: the ability of a system to preserve the gradients that sustain it, to renew without dissolving, to act without hallucinating, to build without burning the future.

So the central image is not a machine replacing life. It is a hierarchy of nested inference engines: cell, organism, mind, culture, model, civilization. Each takes uncertainty in, shapes it, pays for that shaping, and tries to continue.

The universe begins as constrained possibility. Entropy releases that possibility. Along the way, structure appears. Some structures vanish. Some structures persist. A few learn to remember. Fewer learn to predict. Fewer still learn to ask what ought to be preserved.

That is where we stand.

Not outside entropy.

Not above nature.

Not beyond the cave.

But at a strange threshold where matter has learned to model its own becoming — and must now decide whether intelligence will be merely another accelerant, or the first true discipline of the fire.
